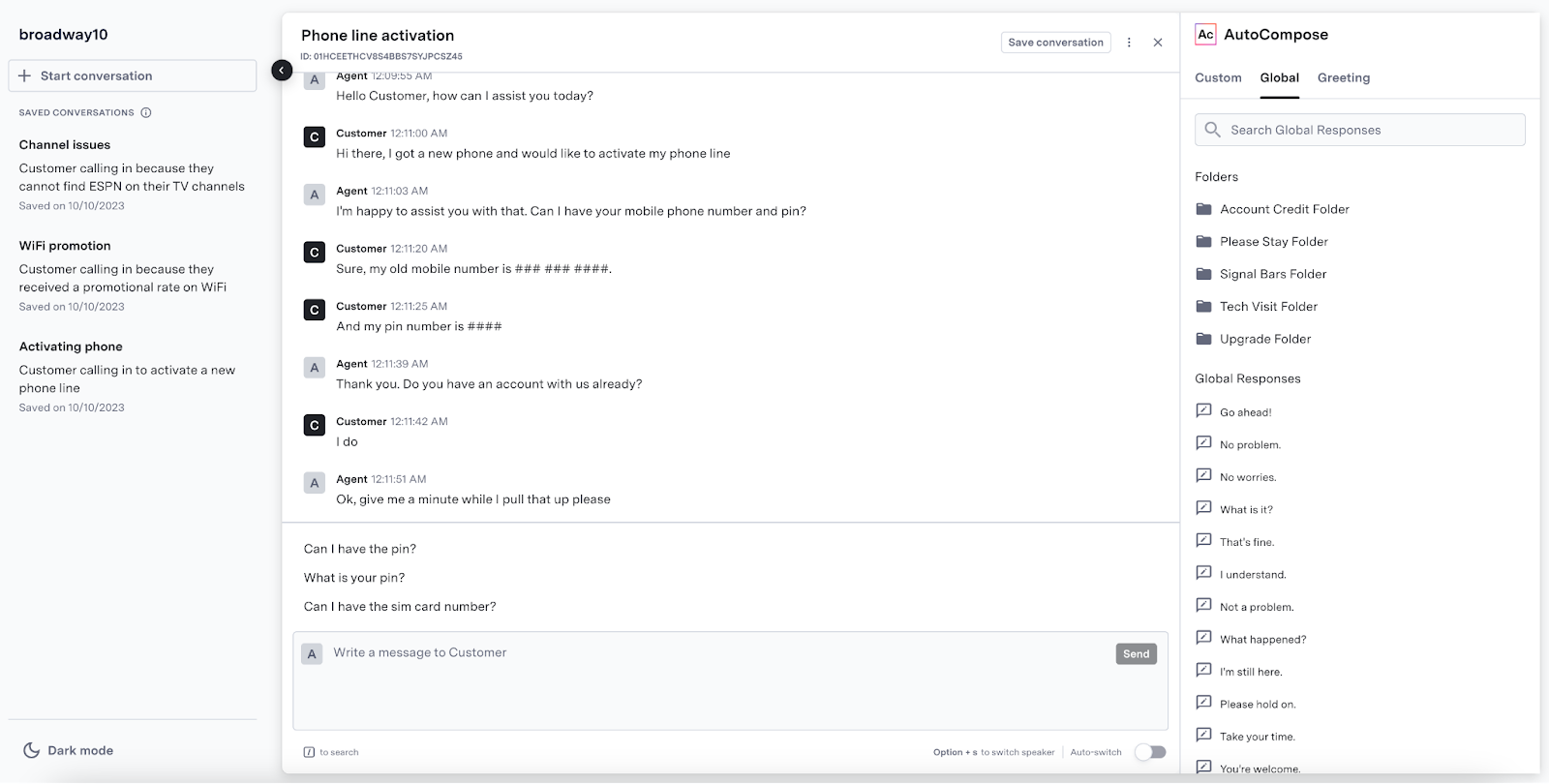

ASAPP AutoCompose helps agents compose the best response to customers, using machine learning techniques to suggest complete responses, partial sentences, key phrases and spelling fixes in real-time based on both the context of the conversation and past agent behavior.

## Features

AutoCompose provides the following features:

| Feature | Description |

| :----------------------- | :------------------------------------------------------------------------------------------------------------------------ |

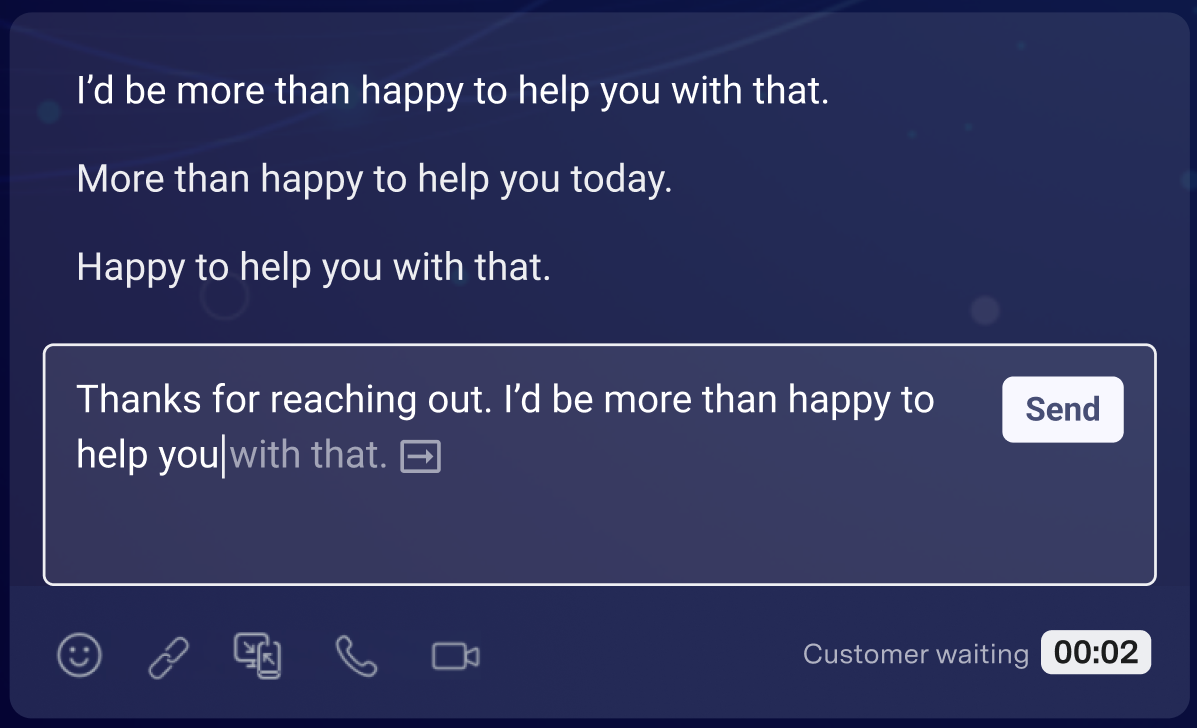

| **Autosuggest** | Provides up to three suggestions that appear in a suggestion drawer above the typing field before the agent begins typing |

| **Autocomplete** | Provides up to three suggestions that appear in a suggestion drawer above the typing field after the agent begins typing |

| **Phrase autocomplete** | Provides in-line phrase suggestions that appear while an agent is typing |

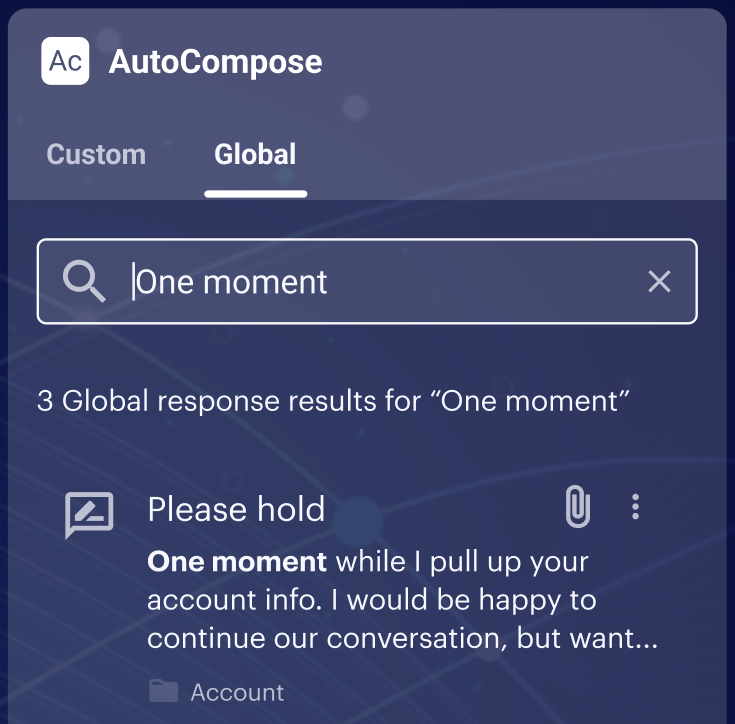

| **Response quicksearch** | Allows in-line search of global and custom responses |

| **Fluency correction** | Applies automatic grammar corrections that an agent can undo |

| **Profanity blocking** | Prevents an agent from sending a message containing profanity to the customer |

| **Custom response list** | Enables management of an individual agent’s custom responses in a simple library interface |

| **Global response list** | Enables management of global responses in a simple tooling interface |

## How it works

AutoCompose takes in a live feed of your agents' conversations and then, using our AI models, returns a list of changes or suggested responses based on the state of the conversation and the currently typed message.

1. Provide conversation data via the Conversation API.

2. In your agent application, call the AutoCompose APIs to retrieve the list of changes or suggested responses.

3. Show the potential changes or responses to your agent for them to incorporate.

This improves your agents' efficiency while still allowing them to review changes, ensuring that only high-quality responses are sent to your customers.

AutoCompose has the following technical components:

| Component | Description |
| :--------------------- | :----------------------------------------------------------------------------------------------------------------------------------- |
| **Autosuggest model** | LLM retrained by ASAPP with agent usage data |
| **Data Storage** | Storage for historical conversations, global response lists, and agent historical feature usage, which are used for weekly retraining |
| **Conversation API**\* | An API for creating and updating conversations and conversation data |

## Get Started

Integrate AutoCompose into your applications to improve your agents' response rates.

### Integrate AutoCompose

AutoCompose is available both as an integration into leading messaging applications and as an API for custom-built messaging interfaces.

For technical instructions on how to implement the service for each approach, refer to the deployment guides below:

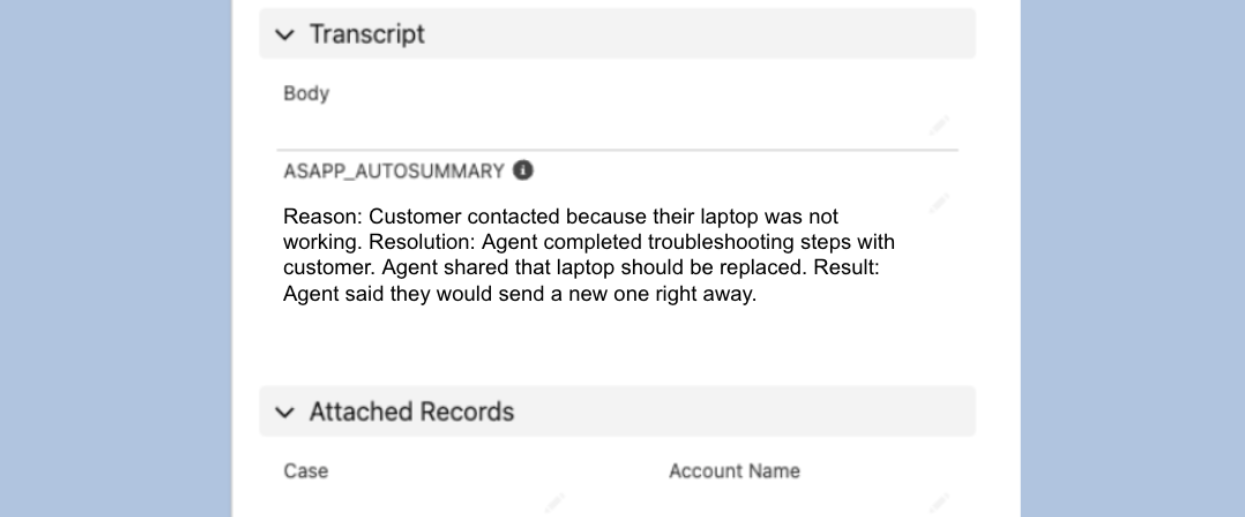

ASAPP AutoSummary is a recommended pairing with AutoCompose, generating conversation summaries of key events for 100% of customer interactions.

Note-taking and disposition questions take call time and agent focus, both of which can have a negative impact on agent performance. Removing summarization tasks from agents through automation can keep agents focused on messaging with customers and yield higher summary data coverage than manual agent notes.

[Click here to download a global responses template file](https://docs-sdk.asapp.com/product_features/global-responses-template.csv).

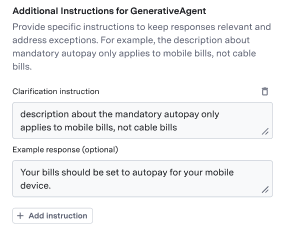

**Metadata Inserts**

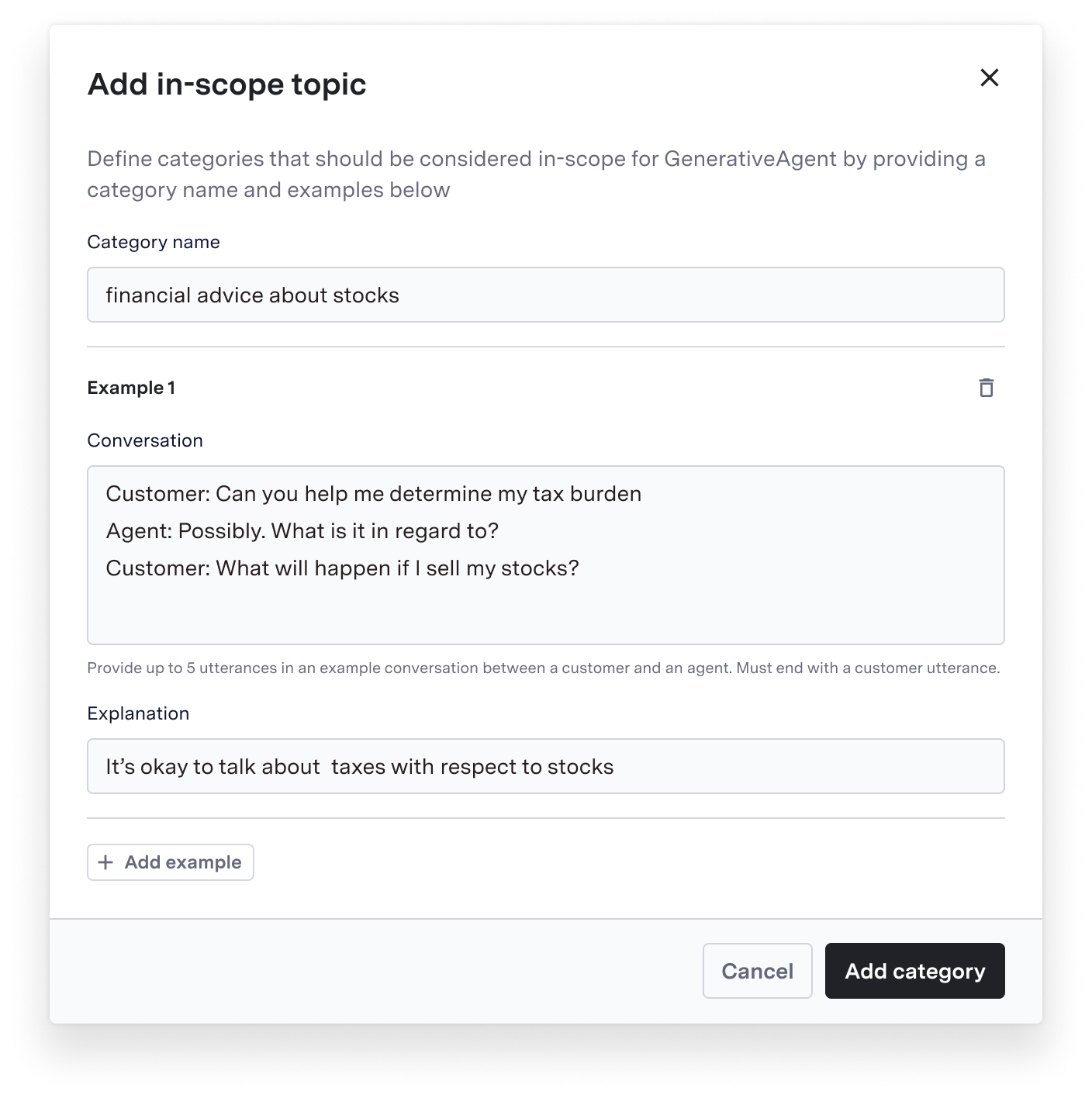

A response that contains a metadata insert is a templated response. When a templated response is suggested, it will be shown to the agent with the metadata insert filled in.
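As a rough illustration of how a templated response might be filled in from conversation metadata, here is a minimal sketch. The `{customer_name}` placeholder syntax, the `fill_template` helper, and the metadata keys are assumptions made for this example; they are not ASAPP's actual template format, which is configured in AI-Console.

```python
class _KeepMissing(dict):
    """Leave unknown placeholders untouched instead of raising KeyError."""

    def __missing__(self, key: str) -> str:
        return "{" + key + "}"


def fill_template(template: str, metadata: dict[str, str]) -> str:
    """Substitute metadata values into a templated response.

    The curly-brace placeholder syntax is hypothetical and used only
    to illustrate the idea of a metadata insert.
    """
    return template.format_map(_KeepMissing(metadata))


if __name__ == "__main__":
    text = "Hi {customer_name}, how can I help you today?"
    print(fill_template(text, {"customer_name": "Ana"}))
```

Leaving unknown placeholders in place, rather than failing, is one possible design choice: it makes a missing metadata value visible to the agent before the message is sent.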

*Adding a templated response in AI-Console*

*Templated response being suggested to the agent in AutoCompose*

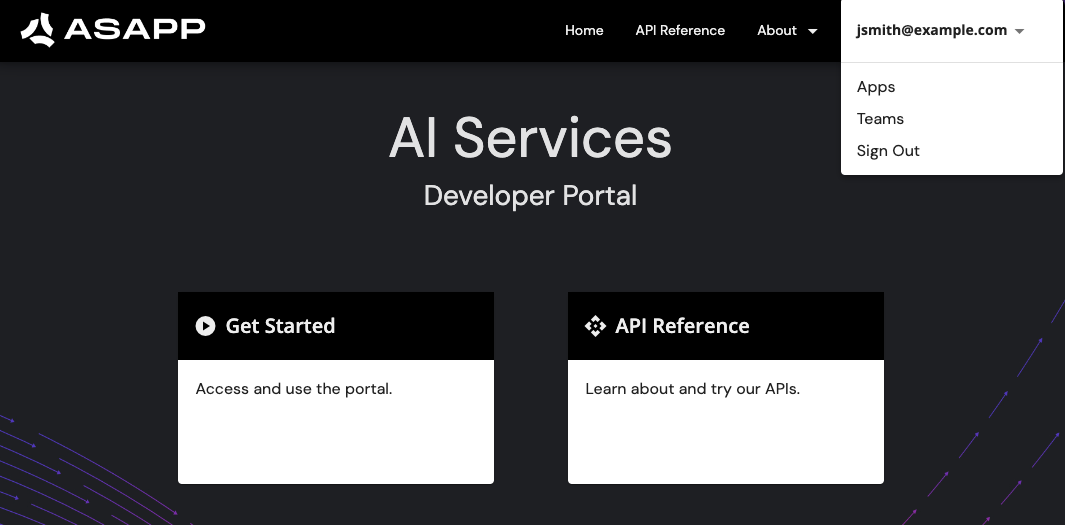

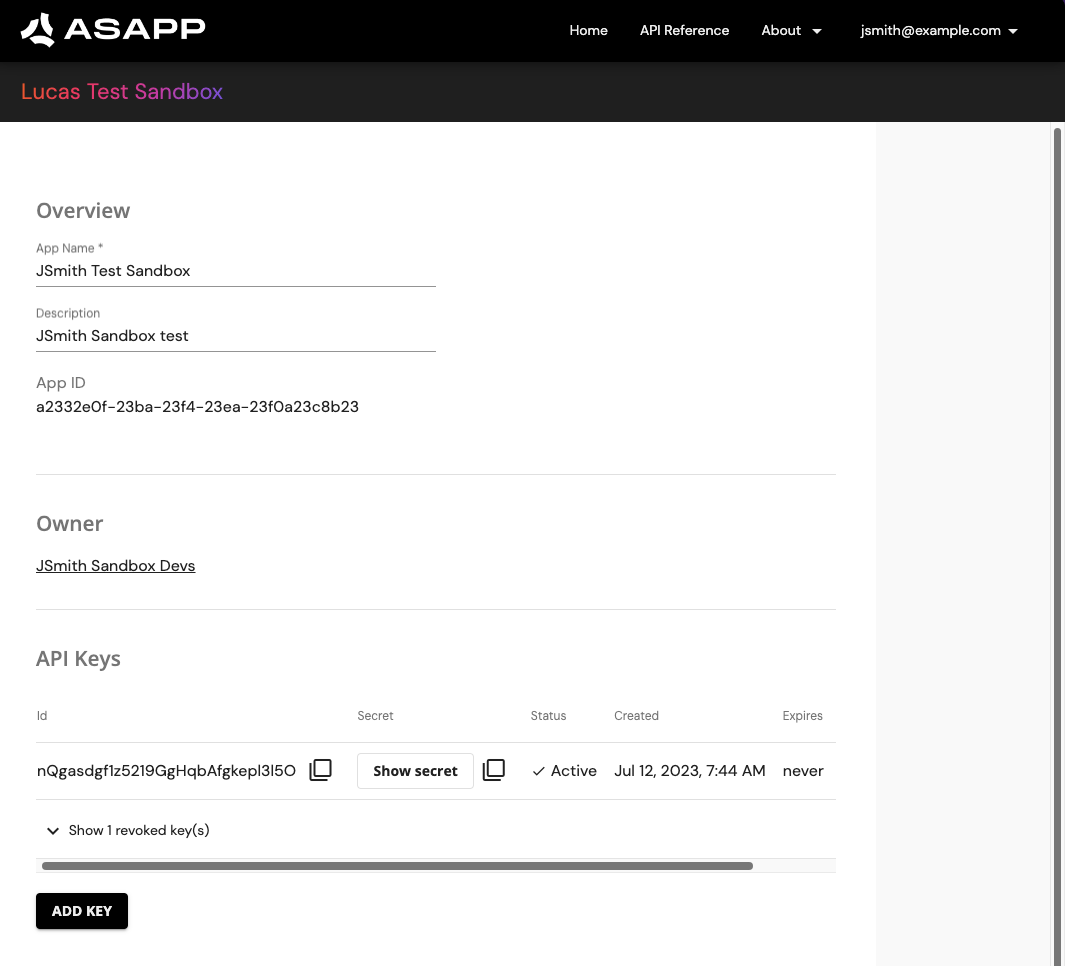

### Setup

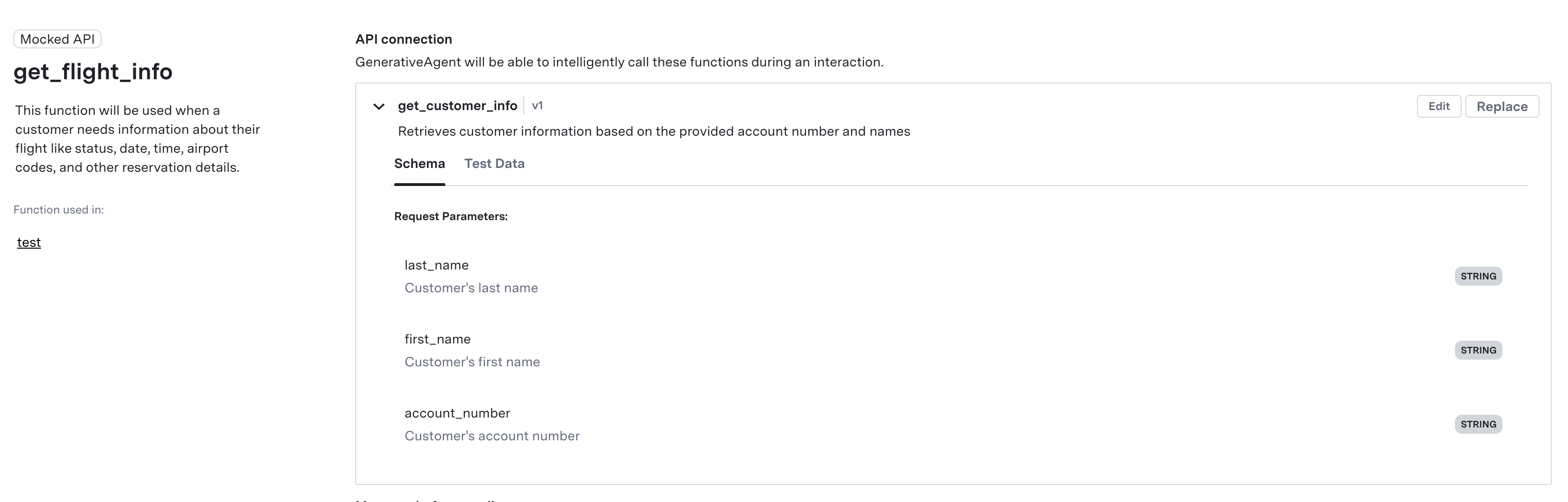

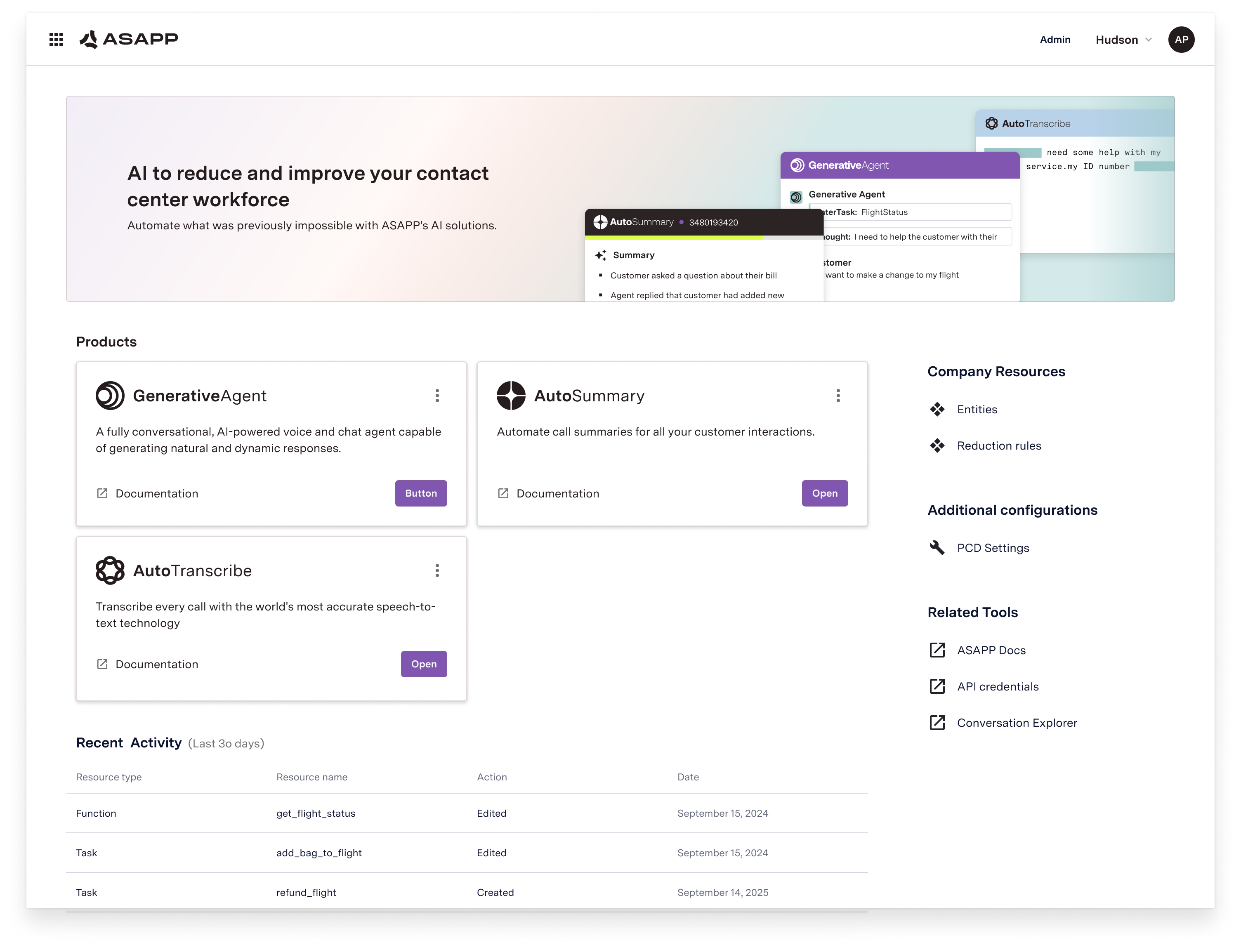

ASAPP provides an AI Services [Developer Portal](/getting-started/developers). Within the portal, developers can do the following:

* Access relevant API documentation (e.g. OpenAPI reference schemas)

* Access API keys for authorization

* Manage user accounts and apps

In order to use ASAPP's APIs, all apps must be registered through the portal. Once registered, each app will be provided unique API keys for ongoing use.

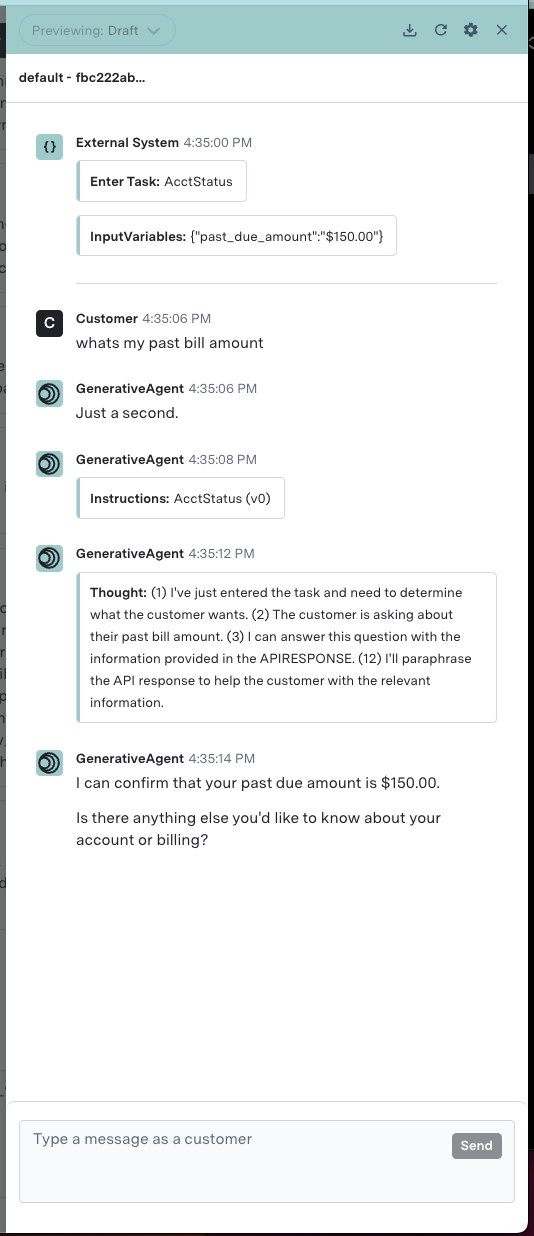

**In this example:**

| Conversation Event | API Request |
|---|---|
| Conversation starts | 1. Create a new ASAPP conversation record<br />2. Request first set of response suggestions |
| Agent keystroke | 1. Request updated response suggestions |
| Agent uses the spacebar | 1. Request updated response suggestions<br />2. Check the spelling of the most recent word |
| Agent searches for a response | 1. Get the response list that pertains to their search |
| Agent saves a custom response | 1. Add the new response to their personal library |
| Agent submits their message | 1. Check if any profanity is present in the message |
| Agent message is sent | 1. Add the message to ASAPP’s conversation record<br />2. Create analytics event for the message that details how the agent used AutoCompose<br />3. Request updated response suggestions |
| Customer message is sent | 1. Add the message to ASAPP’s conversation record<br />2. Request updated response suggestions |
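The event-to-request mapping above could be kept in one lookup table inside the agent application, so that each UI event triggers its requests in a fixed order. The sketch below is only an illustration: the `Event` names and action labels are invented for this example and are not endpoint paths or identifiers from the ASAPP APIs.

```python
from enum import Enum


class Event(Enum):
    CONVERSATION_STARTS = "conversation_starts"
    AGENT_KEYSTROKE = "agent_keystroke"
    AGENT_SPACEBAR = "agent_spacebar"
    AGENT_SEARCH = "agent_search"
    AGENT_SAVES_RESPONSE = "agent_saves_response"
    AGENT_SUBMITS = "agent_submits"
    AGENT_MESSAGE_SENT = "agent_message_sent"
    CUSTOMER_MESSAGE_SENT = "customer_message_sent"


# Ordered actions per event, mirroring the table above.
# The action names are illustrative labels, not real endpoint paths.
ACTIONS: dict[Event, list[str]] = {
    Event.CONVERSATION_STARTS: ["create_conversation", "request_suggestions"],
    Event.AGENT_KEYSTROKE: ["request_suggestions"],
    Event.AGENT_SPACEBAR: ["request_suggestions", "check_spelling"],
    Event.AGENT_SEARCH: ["search_responses"],
    Event.AGENT_SAVES_RESPONSE: ["add_custom_response"],
    Event.AGENT_SUBMITS: ["check_profanity"],
    Event.AGENT_MESSAGE_SENT: [
        "add_message",
        "create_analytics_event",
        "request_suggestions",
    ],
    Event.CUSTOMER_MESSAGE_SENT: ["add_message", "request_suggestions"],
}


def actions_for(event: Event) -> list[str]:
    """Return a copy of the ordered actions to perform for a UI event."""
    return list(ACTIONS[event])
```

Keeping the mapping as data rather than scattered `if` branches makes it easy to compare against the table when the integration changes.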

By promptly sending conversation and message data to this API, you ensure that ASAPP's conversation records match your own and that ASAPP services use the most current information available.

[`POST /conversation/v1/conversations`](/apis/conversations/create-or-update-a-conversation)

Use this endpoint to create a new conversation record or update an existing conversation record.

**When to Call**

This service should be called when a conversation starts or when something about the conversation changes (e.g. a conversation is reassigned to a different agent).

**Request Details**

Requests must include a conversation identifier from your system of record (external to ASAPP) and a timestamp for when the conversation started, formatted as an RFC 3339 date-time with microsecond precision and expressed in UTC.

Requests to create a conversation record must also include identifying information about the human participants. Two types of requests are supported to create a new conversation:

1. **Conversations started with an agent:** Provide both the `agent` and `customer` objects in the request when the conversation begins.

2. **Conversations started with a virtual agent:** Provide only the `customer` object in the initial request when the conversation with the virtual agent begins; you must send a subsequent request that includes both the `agent` and `customer` objects once the agent joins the conversation.

Requests may also include key-value pair metadata for the conversation that can be used either (1) to insert values into templated responses for agents or (2) as filter criteria to determine whether a conversation is eligible for specific response suggestions.
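The sketch below shows one way to produce an RFC 3339 UTC timestamp with microsecond precision and to assemble the two request shapes described above (with and without an `agent` object). The `agent` and `customer` object names come from this guide; the `externalId` and `timestamp` field names and the contents of each object are assumptions for illustration only, so check the OpenAPI reference for the actual schema.

```python
from datetime import datetime, timezone
from typing import Optional


def rfc3339_utc(moment: datetime) -> str:
    """Format a timezone-aware datetime as RFC 3339, microseconds, UTC."""
    if moment.tzinfo is None:
        raise ValueError("moment must be timezone-aware")
    utc = moment.astimezone(timezone.utc)
    return utc.isoformat(timespec="microseconds").replace("+00:00", "Z")


def build_conversation_request(
    external_id: str,
    started_at: datetime,
    customer: dict,
    agent: Optional[dict] = None,
) -> dict:
    """Build a request body; field names other than agent/customer are hypothetical."""
    body = {
        "externalId": external_id,  # hypothetical field name
        "timestamp": rfc3339_utc(started_at),  # hypothetical field name
        "customer": customer,
    }
    # Omit the agent while a virtual agent is handling the conversation;
    # send a follow-up request with both objects once a human agent joins.
    if agent is not None:
        body["agent"] = agent
    return body
```

For a conversation that starts with a virtual agent, call `build_conversation_request` without `agent` first, then again with both objects when the agent joins.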

| | Scenario | Expected Requests |
|---|---|---|
| A | Start new chat for agent with pre-existing customer messages | POST /conversation<br />POST /messages<br />POST /suggestions |
| B | Populate suggestions, select a suggestion and send | POST /suggestions<br />POST /spellcheck<br />POST /profanity<br />POST /messages<br />POST /message-sent |
| C | Populate suggestions, don’t choose one and type “Hello” and send message | POST /suggestions<br />POST /suggestions per keystroke<br />POST /spellcheck<br />POST /profanity<br />POST /messages<br />POST /message-sent |
| D | Choose a suggestion and edit suggestion and select a phrase completion | POST /suggestions<br />POST /suggestions per keystroke<br />POST /spellcheck<br />POST /profanity<br />POST /messages<br />POST /message-sent |
| E | Choose a suggestion and add to it, purposely misspelling a word and undoing the spelling correction | POST /suggestions<br />POST /suggestions per keystroke<br />POST /spellcheck<br />POST /profanity<br />POST /messages<br />POST /message-sent |
| F | Choose a suggestion and edit with profanity | POST /suggestions<br />POST /suggestions per keystroke<br />POST /spellcheck<br />POST /profanity<br />POST /messages<br />POST /message-sent |
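When testing an integration against these scenarios, it can help to verify a recorded request log automatically. The sketch below assumes that the order in which requests are listed for scenarios B through F reflects the expected call order, and it checks that the send-related requests appear in that order. The `follows_send_sequence` helper and the shortened path strings are illustrative, not part of any ASAPP tooling.

```python
# Requests expected, in order, whenever an agent sends a message
# (scenarios B through F in the table above).
SEND_SEQUENCE = ["/spellcheck", "/profanity", "/messages", "/message-sent"]


def follows_send_sequence(paths: list[str]) -> bool:
    """Return True if SEND_SEQUENCE occurs in order within paths.

    Other requests (for example repeated /suggestions calls) may be
    interleaved; only the relative order of the send steps is checked.
    """
    remaining = iter(paths)
    # `step in remaining` advances the iterator past the match, so each
    # later step must be found after the previous one.
    return all(step in remaining for step in SEND_SEQUENCE)
```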

**SSO Support**

The AutoCompose widget supports SP-initiated SSO with either OIDC (preferred method) or SAML.

**Domain Whitelisting**

In order for AutoCompose to interact with ASAPP's backend and third-party support services, the following domains need to be accessible from end-user environments:

| Domain | Description |
| :----------------------------------------- | :----------------------------------------------------------------- |
| \*.asapp.com | ASAPP service URLs |
| \*.ingest.sentry.io | Application performance monitoring tool |
| fonts.googleapis.com | Fonts |
| google-analytics.com | Page analytics |
| asapp-chat-sdk-production.s3.amazonaws.com | Static ASAPP AWS URL for desktop network connectivity health check |
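If your network team keeps a log of blocked hostnames, a small script can flag which blocked hosts fall under the domains listed above. This is a minimal sketch: the `required_hosts` helper is hypothetical, and it only performs pattern matching on hostnames; it does not test actual connectivity.

```python
from fnmatch import fnmatchcase

# Domain patterns taken from the table above.
REQUIRED_PATTERNS = [
    "*.asapp.com",
    "*.ingest.sentry.io",
    "fonts.googleapis.com",
    "google-analytics.com",
    "asapp-chat-sdk-production.s3.amazonaws.com",
]


def required_hosts(hostnames: list[str]) -> list[str]:
    """Return the hostnames that match a domain AutoCompose needs to reach."""
    return [
        host
        for host in hostnames
        # Hostnames are case-insensitive, so compare in lowercase.
        if any(fnmatchcase(host.lower(), pattern) for pattern in REQUIRED_PATTERNS)
    ]
```

Any hostname returned from a blocked-hosts log is a candidate for adding to your allowlist.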

**Policy Check**

Before proceeding, check the current order of precedence of policies deployed in your organization. Platform-deployed policies (like Group Policy Objects) and cloud-deployed policies (like Google Admin Console) are enforced in a priority order that can lead to lower-priority policies not being enforced.

* If installing the ASAPP browser extension via Group Policy Objects, set platform policies to have precedence over cloud policies.

* If installing the ASAPP browser extension via Google Admin Console, set cloud policies to have precedence over platform policies.

For more on how to check and modify order of precedence, see [policy management guides from Google Enterprise](https://support.google.com/chrome/a/answer/9037717).

## Integrate with LivePerson

### 1. Install the ASAPP Browser Extension

Customers have two options for installing the AutoCompose browser extension:

A. Group Policy Objects (GPO)

B. Google Admin Console

#### A. Install via Group Policy Objects (GPO)

Customers can automatically install and manage the ASAPP AutoCompose browser extension via Group Policy Objects (GPO). ASAPP provides an installation server from which the extension can be downloaded and automatically updated.

The Customer's system administrator must configure GPO rules to allow the installation server URL and the software component ID. Through GPO, the administrator can choose to force the installation (i.e., install without requiring human intervention).

The following policies will configure Chrome and Edge to download the AutoCompose browser extension in all on-premise managed devices via GPO:

| **Policy Name** | **Value to Set** |
| :---------------------------------------------------------------------------------------------------------------------- | :------------------------------------------------------------------------------------------------------------------------------------------------------------------------------ |
| [ExtensionInstallSources](https://cloud.google.com/docs/chrome-enterprise/policies/?policy=ExtensionInstallSources) | https\://\*.asapp.com/\* |
| [ExtensionInstallAllowlist](https://cloud.google.com/docs/chrome-enterprise/policies/?policy=ExtensionInstallAllowlist) | bfcmlmledhddbnialbbdopfefoelbbei |
| [ExtensionInstallForcelist](https://cloud.google.com/docs/chrome-enterprise/policies/?policy=ExtensionInstallForcelist) | bfcmlmledhddbnialbbdopfefoelbbei;[https://app.asapp.com/autocompose-liveperson-chrome-extension/updates](https://app.asapp.com/autocompose-liveperson-chrome-extension/updates) |

Each Policy Name above links to documentation that describes how to set the values with the proper format depending on the platform.
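For environments where the browser reads managed policies from a JSON file rather than from GPO (an assumption outside what this guide covers, for example Chrome on Linux), the three values above could be expressed as lists of strings. The sketch below generates such a JSON document. The `build_policy` helper is hypothetical, and you should confirm the exact format for your platform in the linked policy documentation.

```python
import json

# Values taken from the policy table above.
EXTENSION_ID = "bfcmlmledhddbnialbbdopfefoelbbei"
UPDATE_URL = "https://app.asapp.com/autocompose-liveperson-chrome-extension/updates"


def build_policy() -> dict:
    """Return the three extension policies as a JSON-serializable dict."""
    return {
        "ExtensionInstallSources": ["https://*.asapp.com/*"],
        "ExtensionInstallAllowlist": [EXTENSION_ID],
        # Force-install entries pair the extension ID with its update URL,
        # separated by a semicolon.
        "ExtensionInstallForcelist": [f"{EXTENSION_ID};{UPDATE_URL}"],
    }


if __name__ == "__main__":
    print(json.dumps(build_policy(), indent=2))
```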

* Click +, then **Add new widget**.

2. **Enter Widget Attributes**

* Fill in the **Widget name** as 'ASAPP'

* Assign the conversation skill(s) to which ASAPP is being deployed in the **Assigned skills** dropdown menu.

* Click the **Save** button.

* Click the **Done** button.

4. **Enable Pop-in Composer**

* In the Agent Workspace, click the nut icon (similar to a gear shape) next to the **+** icon at the bottom of the AutoCompose panel widget.

* Enable the **Pop-in Composer** option.

Press the escape key and reload the page to see the changes; the ASAPP widget should now be available across your LivePerson organization.

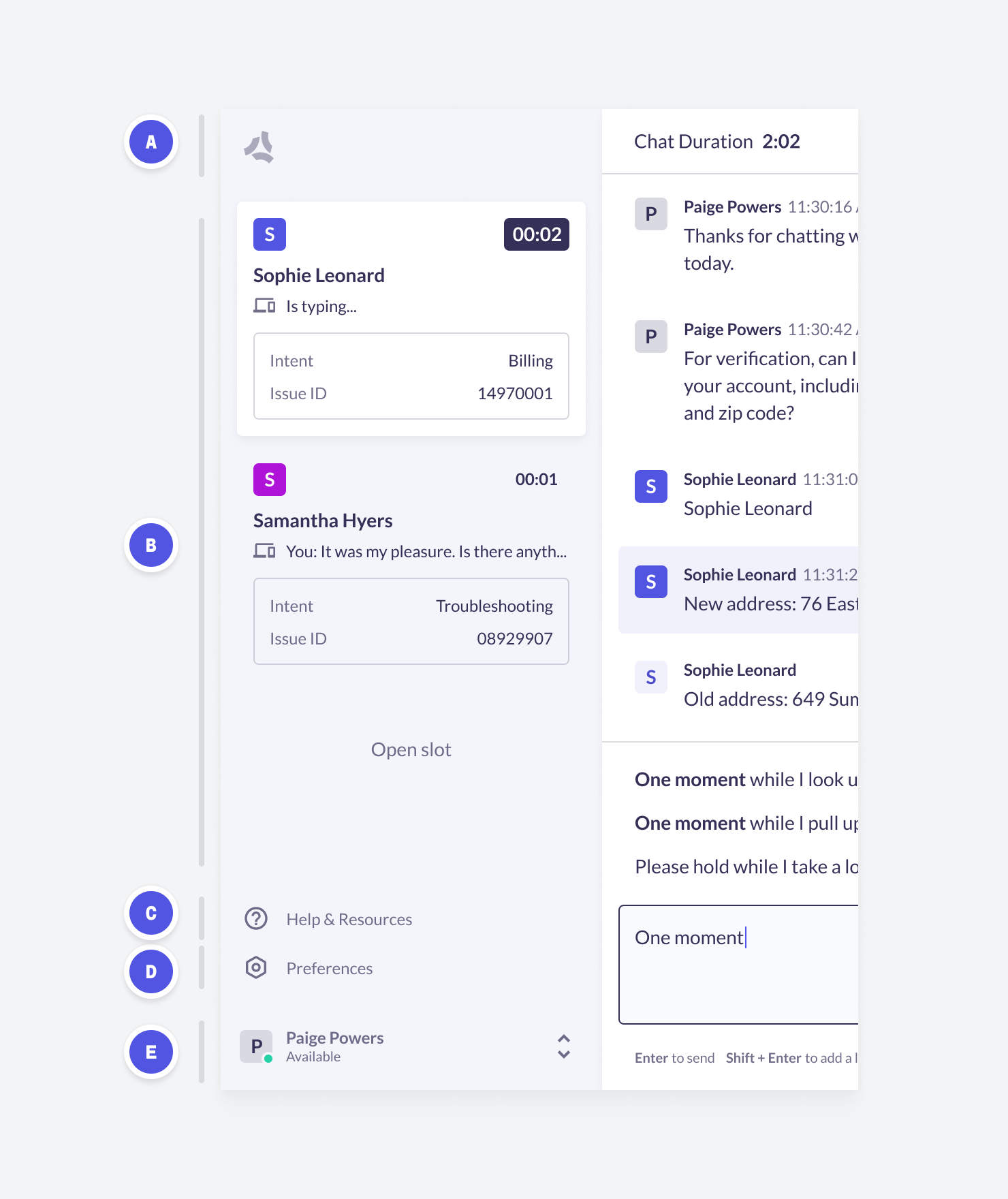

Upon login to the Agent Workspace, the ASAPP widget for AutoCompose will appear in place of the standard LivePerson composer, underneath the conversation transcript. By default, the response panel for AutoCompose will appear to the right of the conversational panel.

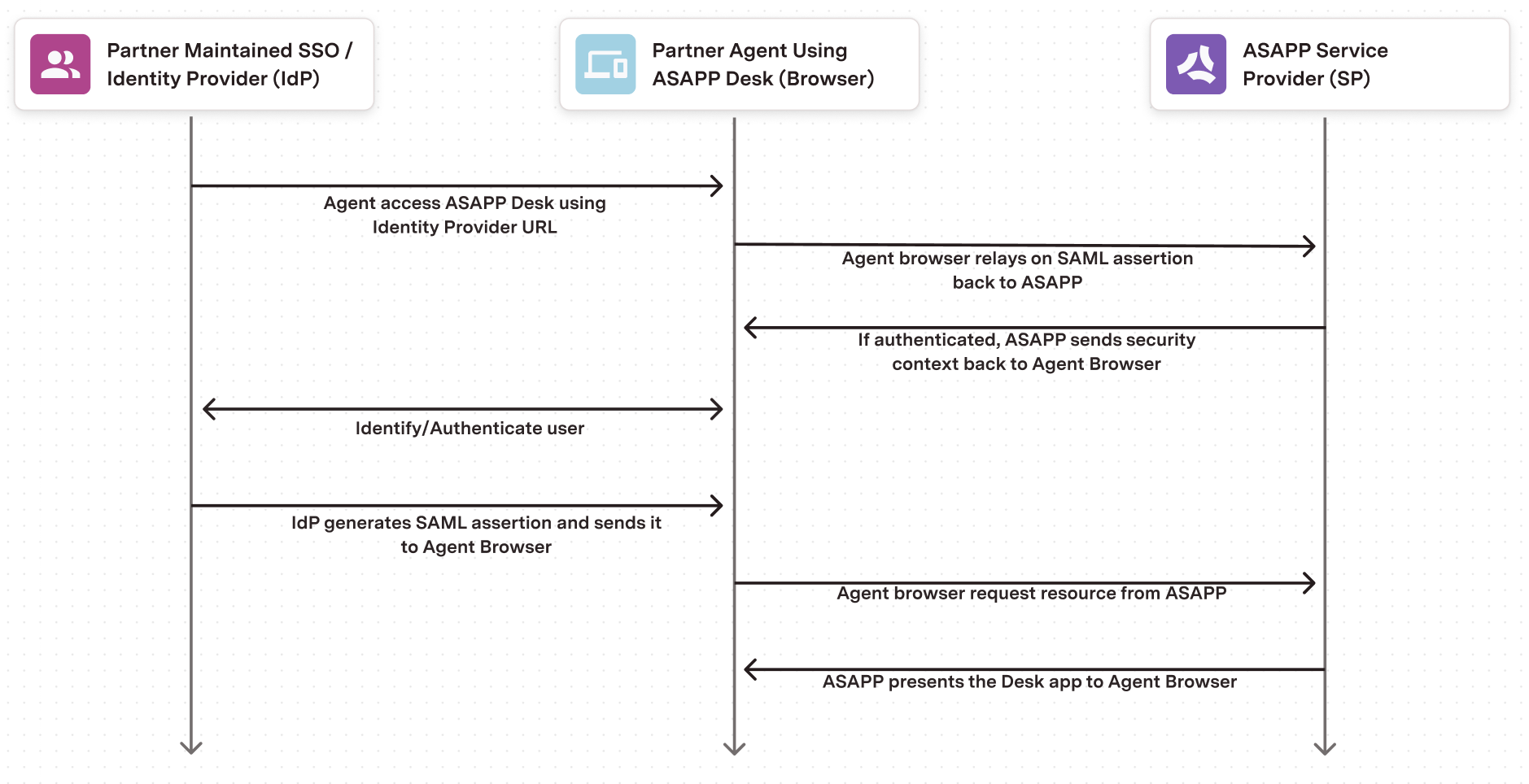

### 3. Set Up Single Sign-On

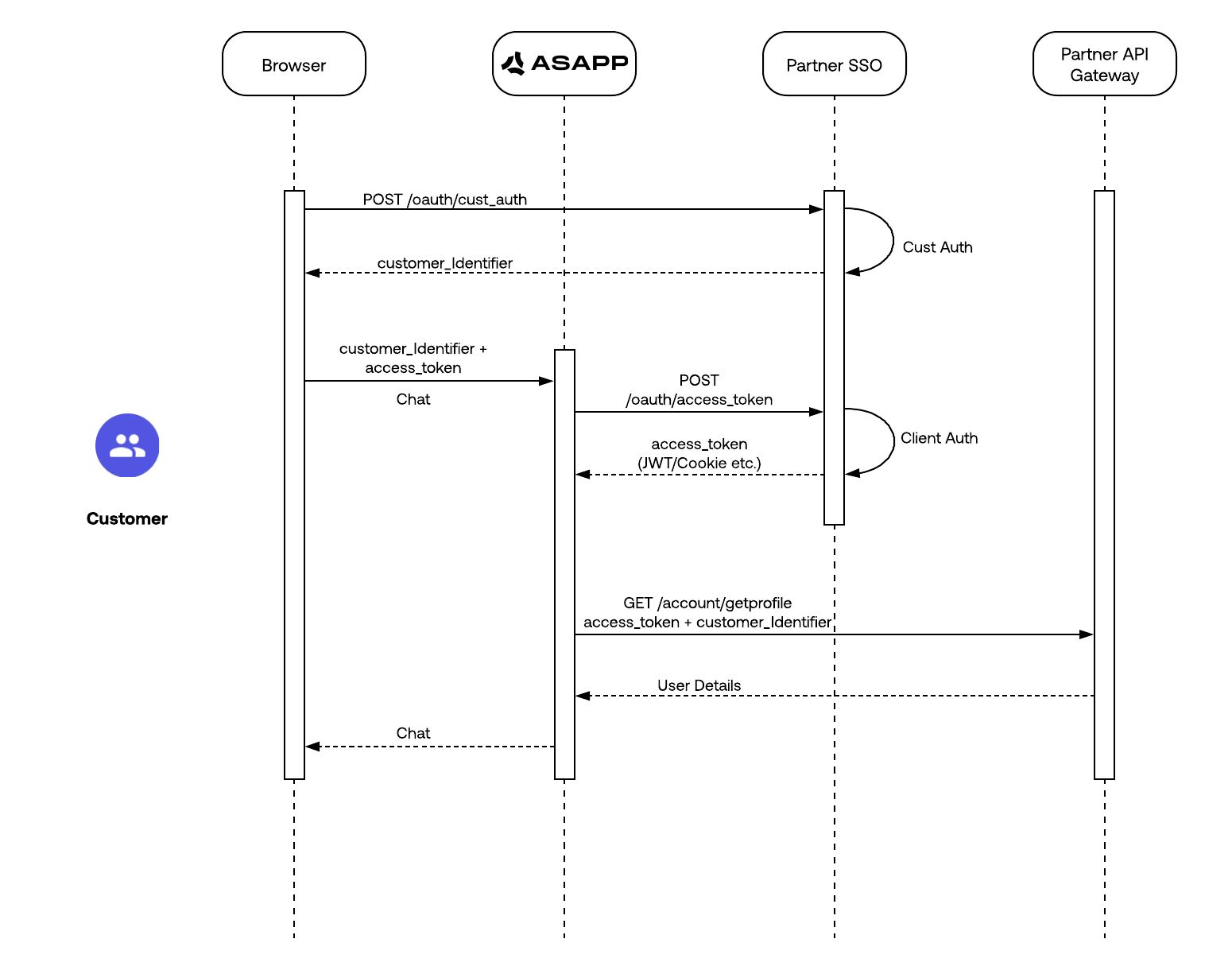

ASAPP handles authentication through the customer's SSO service to confirm the identity of the agent.

ASAPP acts as the Service Provider (SP) with the customer acting as the Identity Provider (IDP). The customer's authentication system performs user authentication using their existing user credentials.

ASAPP supports SP-initiated SSO with either OIDC (preferred method) or SAML. Once the user initiates sign-in, ASAPP detects that the user is authenticated and requests an assertion from the customer's SSO service.

**Configuration Steps for OIDC (preferred method)**

1. Create a new IDP OIDC application with type `Web`

2. Set the following attributes for the app:

| Attribute | Value\* |
|---|---|
| Grant Type | authorization code |
| Sign-in Redirect URIs | Production: `https://api.asapp.com/auth/v1/callback/{company_marker}`<br />Sandbox: `https://api.sandbox.asapp.com/auth/v1/callback/{company_marker}-sandbox` |
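Because both redirect URIs differ only in host and suffix, they can be derived from the `company_marker` value that ASAPP provides. The helper below is a small sketch for generating them consistently; the `oidc_redirect_uris` function name and the `acme` marker in the usage line are made up for illustration.

```python
def oidc_redirect_uris(company_marker: str) -> dict[str, str]:
    """Build the production and sandbox sign-in redirect URIs."""
    if not company_marker:
        raise ValueError("company_marker is required (provided by ASAPP)")
    return {
        "production": f"https://api.asapp.com/auth/v1/callback/{company_marker}",
        "sandbox": (
            "https://api.sandbox.asapp.com/auth/v1/callback/"
            f"{company_marker}-sandbox"
        ),
    }


if __name__ == "__main__":
    # "acme" is a placeholder, not a real company marker.
    for env, uri in oidc_redirect_uris("acme").items():
        print(env, uri)
```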

**Configuration Steps for SAML**

| Attribute | Value\* |
|---|---|
| | Sandbox: `https://sso.asapp.com/auth/realms/standalone-{company_marker}-auth/broker/saml-sandbox/endpoint/clients/asapp-saml-sandbox` |
| Recipient URL | Production: `https://sso.asapp.com/auth/realms/standalone-{company_marker}-auth/broker/saml/endpoint/clients/asapp-saml`<br />Sandbox: `https://sso.asapp.com/auth/realms/standalone-{company_marker}-auth/broker/saml-sandbox/endpoint/clients/asapp-saml-sandbox` |
| Destination URL | Production: `https://sso.asapp.com/auth/realms/standalone-{company_marker}-auth/broker/saml/endpoint/clients/asapp-saml`<br />Sandbox: `https://sso.asapp.com/auth/realms/standalone-{company_marker}-auth/broker/saml-sandbox/endpoint/clients/asapp-saml-sandbox` |
| Audience Restriction | Production: `https://sso.asapp.com/auth/realms/standalone-{company_marker}-auth/broker/saml/endpoint/clients/asapp-saml`<br />Sandbox: `https://sso.asapp.com/auth/realms/standalone-{company_marker}-auth/broker/saml-sandbox/endpoint/clients/asapp-saml-sandbox` |
| Response | Signed |
| Assertion | Signed |
| Signature Algorithm | RSA\_SHA256 |
| Digest Algorithm | SHA256 |
| Attribute Statements | externalUserId: `{unique_id_to_identify_the_user}` |

**\*NOTE:** ASAPP to provide `company_marker` value

3. Save the application and send the ASAPP team the public certificate used to validate the signature of this app's SAML payload

4. Send the ASAPP team the URL of the SAML application

### 4. Configure Auto-Pilot Greetings

If you so choose, you can work with your ASAPP contact to enable Auto-Pilot Greetings in your AutoCompose installation. Auto-Pilot Greetings automatically generates a greeting at the beginning of a conversation, and that greeting can be automatically sent to a customer on your agent's behalf after a configurable timer elapses.

Your ASAPP contact can:

* Turn Auto-Pilot Greetings on or off for your organization

* Set a countdown timer value after which the Auto-Pilot Greeting is sent if an agent does not cancel Auto-Pilot by typing or clicking a "cancel" button

* Set the global default messages that will be provided for Auto-Pilot Greetings across your organization (note that agents can optionally customize their Auto-Pilot Greetings messages within the Auto-Pilot tab of the AutoCompose panel)

## Usage

### Customization

#### LivePerson

For LivePerson, the standard process is to download ASAPP AutoCompose as a standalone widget. If you already have your own LivePerson custom widget, ASAPP also provides the option to embed our custom widget inside yours, which saves on-screen real estate.

**Conversation Attributes**

Once the ASAPP AutoCompose widget is embedded, LivePerson shares the following conversation attributes with ASAPP: customer name, agent name and skill. ASAPP can use name attributes to populate values into templated responses (e.g. "Hi \[customer name], how can I help you today?") and to selectively filter response lists based on the skill of the conversation.

**Conversation Redaction**

When message text in the conversation transcript is sent to ASAPP, ASAPP applies redaction to the message text to prevent transmission of sensitive information. Reach out to your ASAPP account contact for information on available redaction capabilities to configure for your implementation.

### Data Security

ASAPP's security protocols protect data at each point of transmission from first user authentication, to secure communications, to our auditing and logging system, all the way to securing the environment when data is at rest in the data logging system. Access to data by ASAPP teams is tightly constrained and monitored. Strict security protocols protect both ASAPP and our customers.

The following security controls are particularly relevant to AutoCompose:

1. Client sessions are controlled using a time-limited authorization token. Privileges for each active session are controlled server-side to mitigate potential elevation-of-privilege and information disclosure risks.

2. To avoid unauthorized disclosure of information, unique, non-guessable IDs are used to identify conversations. These conversations can only be accessed using a valid client session.

3. Requests to API endpoints that can potentially receive sensitive data are put through a round of redaction to strip the request of sensitive data (like SSNs and phone numbers).

### Additional Considerations

#### Historical Conversation Data for Generating a Response List

ASAPP uses past agent conversations to generate a customized response list tailored to a given use case. In order to create an accurate and relevant list, ASAPP requires a minimum of 200,000 historical transcripts to be supplied ahead of implementing AutoCompose. For more information on how to transmit the conversation data, reach out to your ASAPP account contact.

#### LivePerson

ASAPP uses a browser extension to replace the LivePerson composer with the ASAPP composer. In the unlikely event that the DOM of the LivePerson composer or its surrounding area changes, the LivePerson composer may no longer be replaced by the ASAPP composer. In this case, the CSR has the option to toggle the ASAPP composer so that it 'retreats' into the ASAPP Custom Widget. The ASAPP composer will then continue to be fully functional, even if it is no longer ideally placed just below the LivePerson chat history.

In order to quickly restore the placement of the ASAPP composer directly beneath the LivePerson chat log, ASAPP deploys its extension so that the extension's configuration is pulled down from our servers in real-time. If the LivePerson DOM does change, we can deploy a fix rapidly.

# Deploying AutoCompose for Salesforce

Source: https://docs.asapp.com/autocompose/deploying-autocompose-for-salesforce

Use AutoCompose on Salesforce Lightning Experience.

## Overview

This page describes how to integrate AutoCompose in your Salesforce application.

### Integration Steps

There are three parts to the AutoCompose setup process. Use the links below to skip to information about a specific part of the process:

1. [Configure the Salesforce organization](#1-configure-the-salesforce-organization-centrally) centrally using an administrator account

2. [Set up agent/user authentication](#2-set-up-single-sign-on) through the existing single sign-on (SSO) service

3. [Work with your ASAPP contact to configure Auto-Pilot Greetings](#3-configure-auto-pilot-greetings), if desired

**SSO Support**

The AutoCompose widget supports SP-initiated SSO with either OIDC (preferred method) or SAML.

**Domain Whitelisting**

In order for AutoCompose to interact with ASAPP's backend and third-party support services, the following domains need to be accessible from end-user environments:

| Domain | Description |
| :----------------------------------------- | :----------------------------------------------------------------- |
| \*.asapp.com | ASAPP service URLs |
| \*.ingest.sentry.io | Application performance monitoring tool |
| fonts.googleapis.com | Fonts |
| google-analytics.com | Page analytics |
| asapp-chat-sdk-production.s3.amazonaws.com | Static ASAPP AWS URL for desktop network connectivity health check |
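When reviewing a proxy or firewall allowlist against the table above, a small script can help spot gaps. The following is a minimal sketch, not an ASAPP tool: the function names and the idea of a flat list of allowlist entries are assumptions made for illustration, and only the domain patterns come from the table.

```python
from fnmatch import fnmatchcase

# Domain patterns copied from the table above.
REQUIRED_DOMAINS = [
    "*.asapp.com",
    "*.ingest.sentry.io",
    "fonts.googleapis.com",
    "google-analytics.com",
    "asapp-chat-sdk-production.s3.amazonaws.com",
]


def is_required_domain(hostname: str) -> bool:
    """Return True if hostname matches one of the required domain patterns."""
    host = hostname.strip().lower().rstrip(".")
    return any(fnmatchcase(host, pattern) for pattern in REQUIRED_DOMAINS)


def missing_from_allowlist(allowlist: list[str]) -> list[str]:
    """Return the required patterns absent from a (hypothetical) allowlist."""
    normalized = {entry.strip().lower() for entry in allowlist}
    return [pattern for pattern in REQUIRED_DOMAINS if pattern not in normalized]
```

For example, `missing_from_allowlist(["*.asapp.com"])` lists the four remaining entries that would still need to be opened.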

## Integrate with Salesforce

### 1. Configure the Salesforce Organization Centrally

**Before You Begin**

You will need the following information to configure ASAPP for Salesforce:

* Administrator credentials to log in to your Salesforce organization account.

* **NOTE:** Organization and Administrator should be enabled for 'chat'.

* A URL for the ASAPP installation package, which will be provided by ASAPP.

* Choose **Install for All Users** (as shown above).

* Check the acknowledgment statement and click the **Install** button:

* The Installation runs. An **Installation Complete!** message appears:

* Click the **Done** button.

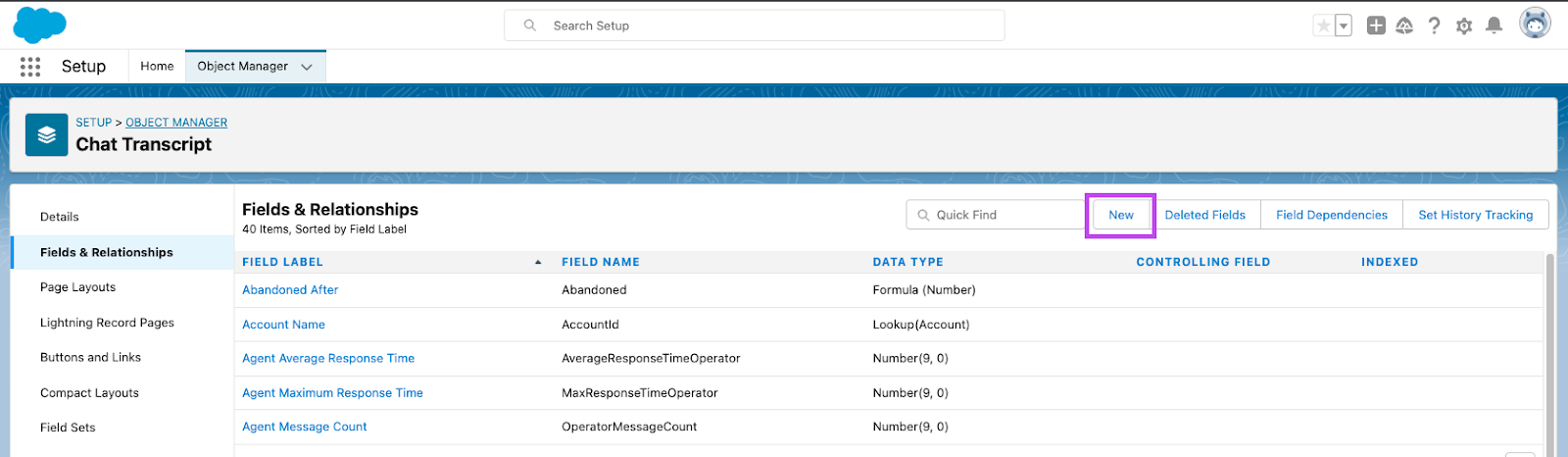

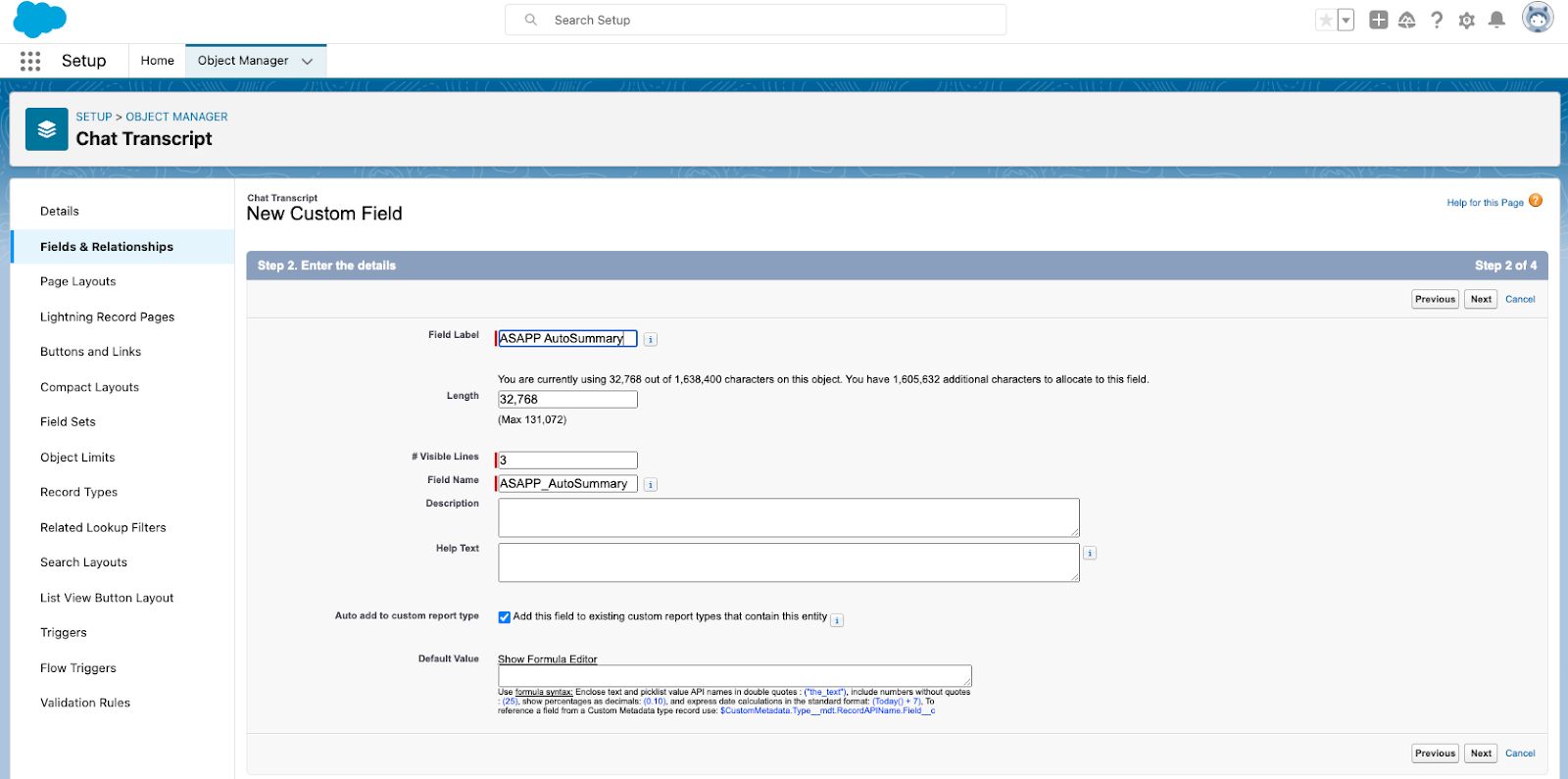

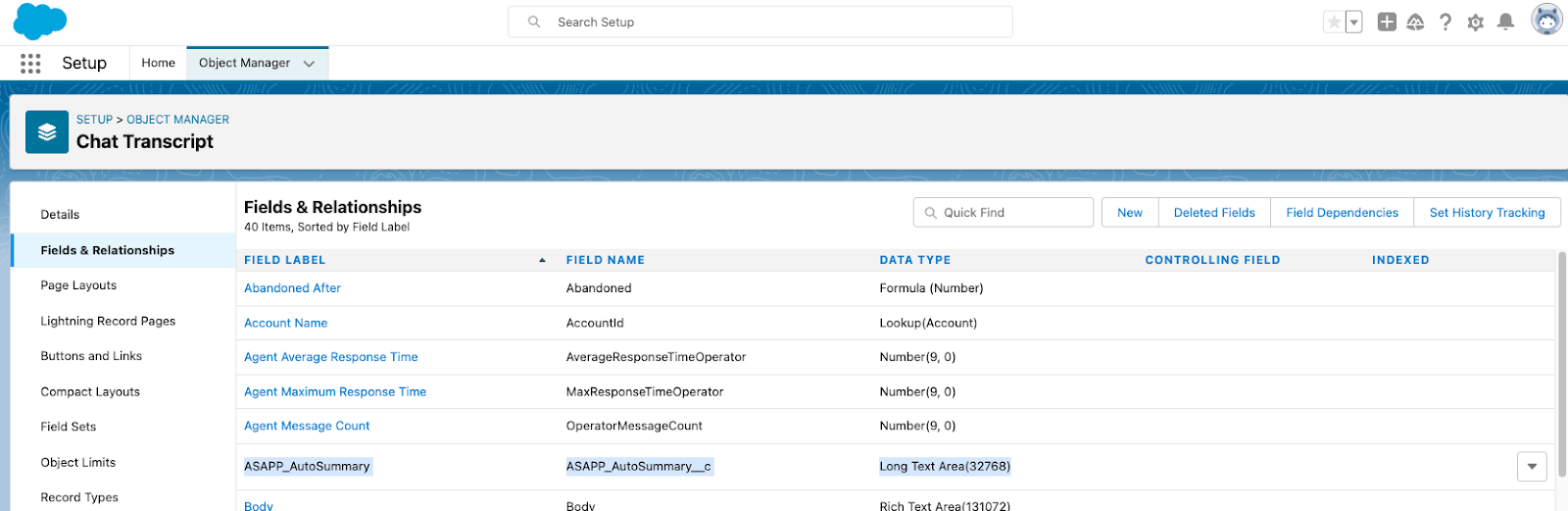

**2. Add ASAPP to the Chat Transcript Page**

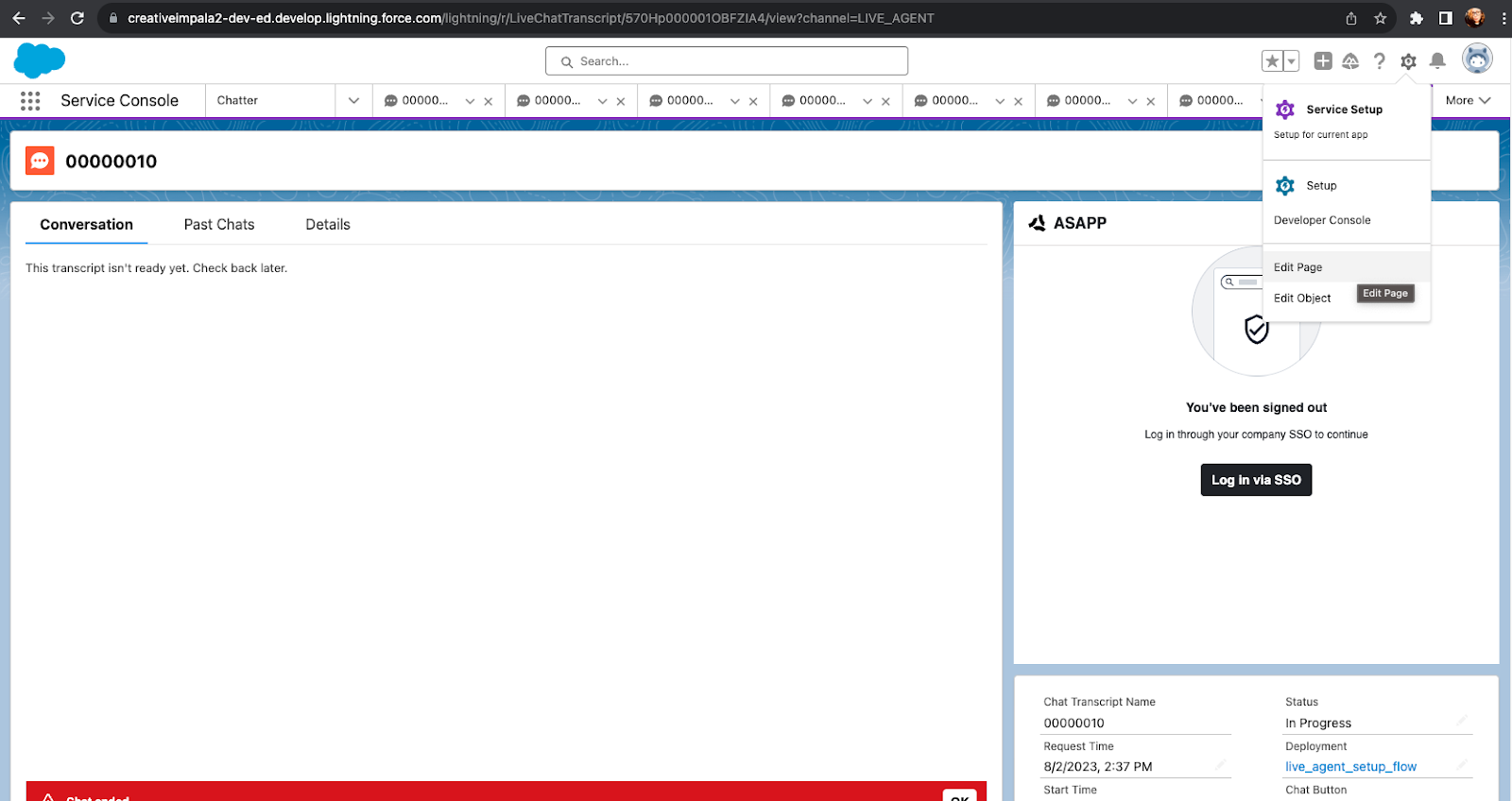

* Open the 'Service Console' page (or your chat page).

* Choose an existing chat session or start a new chat session so that the chat transcript page appears (the exact mechanism is organization-specific).

* In the top-right, click the **gear** icon, then right-click **Edit Page**, and **Open Link in a New Tab**.

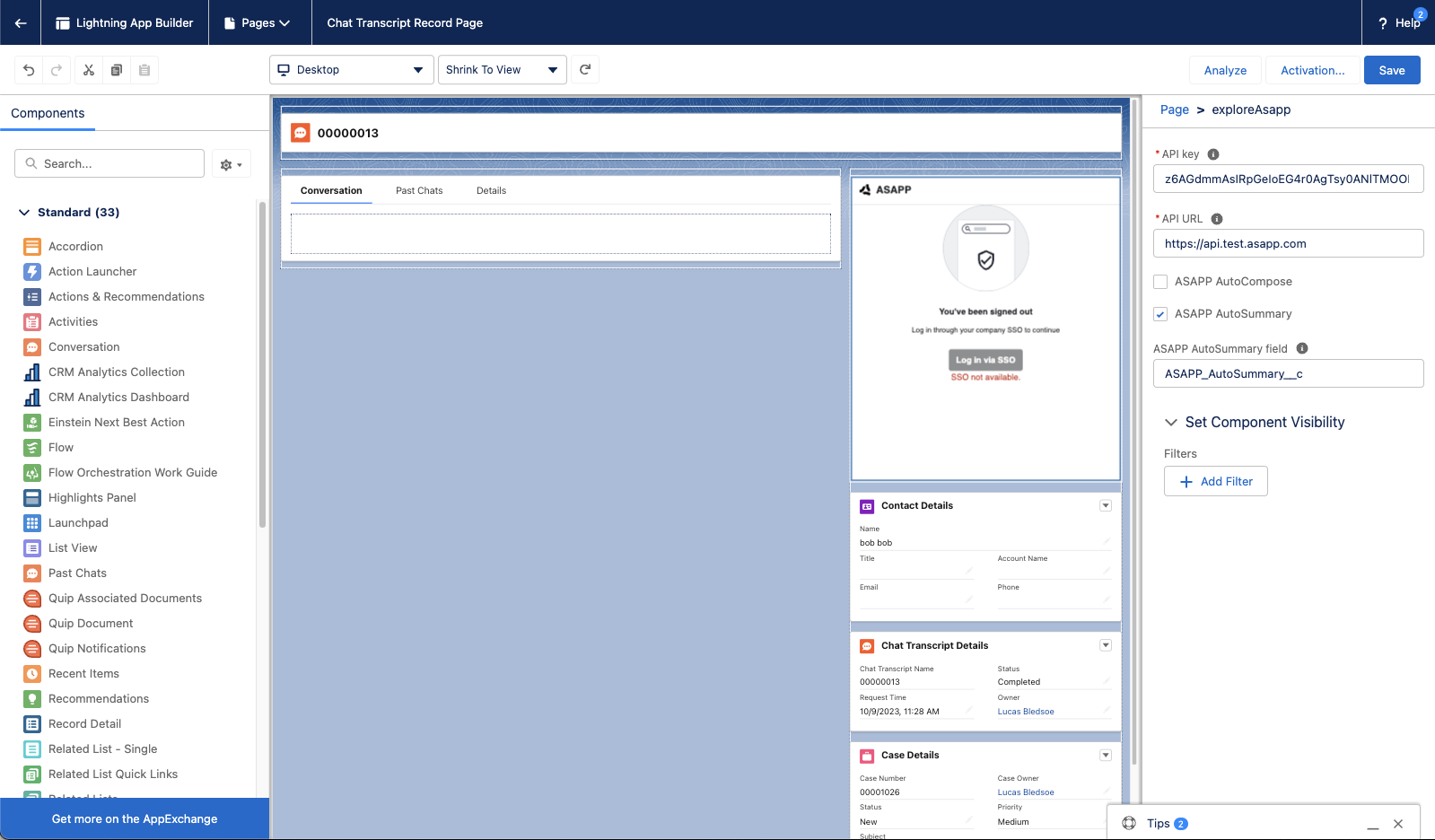

* Navigate to the new tab to see the chat transcript edit page:

* Select the conversation panel (middle) and delete it.

* Drag the **chatAsapp** component (left), inside the conversation panel:

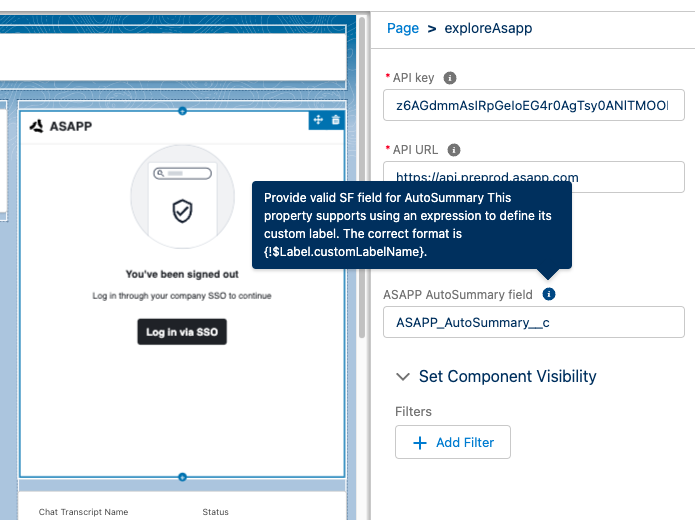

* Drag the **exploreAsapp** component (left), to the right column. Next, add your organization's **API key** and **API URL** (found in the ASAPP Developer Portal) in the rightmost panel:

* Click **Save**, then click **Activate**

* Click **Assign as org default**.

* Choose **Desktop** form factor, then click **Save**.

* Return to the chat transcript page and refresh - the ASAPP composer should appear.

### 2. Set Up Single Sign-On

ASAPP handles authentication through the customer's SSO service to confirm the identity of the agent.

ASAPP acts as the Service Provider (SP) with the customer acting as the Identity Provider (IDP). The customer's authentication system performs user authentication using their existing user credentials.

ASAPP supports SP-initiated SSO with either OIDC (preferred method) or SAML. Once the user initiates sign-in, ASAPP detects that the user is authenticated and requests an assertion from the customer's SSO service.

**Configuration Steps for OIDC (preferred method)**

1. Create a new IDP OIDC application with type `Web`

2. Set the following attributes for the app:

| Attribute | Value\* |
|---|---|
| Grant Type | authorization code |
| Sign-in Redirect URIs | Production: `https://api.asapp.com/auth/v1/callback/{company_marker}` Sandbox: `https://api.sandbox.asapp.com/auth/v1/callback/{company_marker}-sandbox` |
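To make the `company_marker` substitution concrete, here is a minimal sketch that builds the two sign-in redirect URIs listed above. The function name and the `"production"` / `"sandbox"` labels are illustrative assumptions; the URL patterns are taken from the table, and the real `company_marker` value is provided by ASAPP.

```python
def sign_in_redirect_uri(company_marker: str, environment: str) -> str:
    """Build the OIDC sign-in redirect URI for the given environment."""
    if not company_marker:
        raise ValueError("company_marker is required (ASAPP provides this value)")
    if environment == "production":
        return f"https://api.asapp.com/auth/v1/callback/{company_marker}"
    if environment == "sandbox":
        # Note the "-sandbox" suffix appended to the marker in the sandbox URI.
        return f"https://api.sandbox.asapp.com/auth/v1/callback/{company_marker}-sandbox"
    raise ValueError(f"unknown environment: {environment!r}")
```

With a made-up marker such as `acme`, the sandbox call returns `https://api.sandbox.asapp.com/auth/v1/callback/acme-sandbox`.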

| Attribute | Value\* |
|---|---|
| | Sandbox: `https://sso.asapp.com/auth/realms/standalone-{company_marker}-auth/broker/saml-sandbox/endpoint/clients/asapp-saml-sandbox` |
| Recipient URL | Production: `https://sso.asapp.com/auth/realms/standalone-{company_marker}-auth/broker/saml/endpoint/clients/asapp-saml` Sandbox: `https://sso.asapp.com/auth/realms/standalone-{company_marker}-auth/broker/saml-sandbox/endpoint/clients/asapp-saml-sandbox` |
| Destination URL | Production: `https://sso.asapp.com/auth/realms/standalone-{company_marker}-auth/broker/saml/endpoint/clients/asapp-saml` Sandbox: `https://sso.asapp.com/auth/realms/standalone-{company_marker}-auth/broker/saml-sandbox/endpoint/clients/asapp-saml-sandbox` |
| Audience Restriction | Production: `https://sso.asapp.com/auth/realms/standalone-{company_marker}-auth/broker/saml/endpoint/clients/asapp-saml` Sandbox: `https://sso.asapp.com/auth/realms/standalone-{company_marker}-auth/broker/saml-sandbox/endpoint/clients/asapp-saml-sandbox` |
| Response | Signed |
| Assertion | Signed |
| Signature Algorithm | RSA\_SHA256 |
| Digest Algorithm | SHA256 |
| Attribute Statements | externalUserId: `{unique_id_to_identify_the_user}` |

**\*NOTE:** ASAPP to provide `company_marker` value

3. Save the application and send the ASAPP team the public certificate used to validate the signature of this app's SAML payload

4. Send the ASAPP team the URL of the SAML application

### 3. Configure Auto-Pilot Greetings

If you so choose, you can work with your ASAPP contact to enable Auto-Pilot Greetings in your AutoCompose installation. Auto-Pilot Greetings automatically generates a greeting at the beginning of a conversation, and that greeting can be automatically sent to a customer on your agent's behalf after a configurable timer elapses.

Your ASAPP contact can:

* Turn Auto-Pilot Greetings on or off for your organization

* Set a countdown timer value after which the Auto-Pilot Greeting is sent if an agent does not cancel Auto-Pilot by typing or clicking a "cancel" button

* Set the global default messages that will be provided for Auto-Pilot Greetings across your organization (note that agents can optionally customize their Auto-Pilot Greetings messages within the Auto-Pilot tab of the AutoCompose panel)

## Usage

### Customization

#### Conversation Attributes

Once the ASAPP AutoCompose widget is embedded, Salesforce shares the following conversation attributes with ASAPP: customer name, agent name and skill. ASAPP can use name attributes to populate values into templated responses (e.g. "Hi \[customer name], how can I help you today?") and to selectively filter response lists based on the skill of the conversation.

#### Conversation Redaction

When message text in the conversation transcript is sent to ASAPP, ASAPP applies redaction to the message text to prevent transmission of sensitive information. Reach out to your ASAPP account contact for information on available redaction capabilities to configure for your implementation.

#### Composer Placement

ASAPP currently targets Lightning desktops. Within Lightning-based desktops, you are free to place our composer wherever you choose. However, we suggest placing it immediately below the Salesforce conversation widget, such that the chat log appears above the ASAPP composer.

### Data Security

ASAPP's security protocols protect data at each point of transmission from first user authentication, to secure communications, to our auditing and logging system, all the way to securing the environment when data is at rest in the data logging system. Access to data by ASAPP teams is tightly constrained and monitored. Strict security protocols protect both ASAPP and our customers.

The following security controls are particularly relevant to AutoCompose:

1. Client sessions are controlled using a time-limited authorization token. Privileges for each active session are controlled server-side to mitigate potential elevation-of-privilege and information disclosure risks.

2. To avoid unauthorized disclosure of information, unique, non-guessable IDs are used to identify conversations. These conversations can only be accessed using a valid client session.

3. Requests to API endpoints that can potentially receive sensitive data are put through a round of redaction to strip the request of sensitive data (like SSNs and phone numbers).

### Additional Considerations

#### Historical Conversation Data for Generating a Response List

ASAPP uses past agent conversations to generate a customized response list tailored to a given use case. In order to create an accurate and relevant list, ASAPP requires a minimum of 200,000 historical transcripts to be supplied ahead of implementing AutoCompose. For more information on how to transmit the conversation data, reach out to your ASAPP account contact.
# Feature Releases Overview

Source: https://docs.asapp.com/autocompose/feature-releases

| Feature Name | Feature Release Details | Additional Relevant Information (if available) |
| :------------------------------ | :------------------------------------------------------------------------------------------------------------------------------------------------ | :---------------------------------------------------- |
| **Tooling** | [Tooling for AutoCompose](/autocompose/feature-releases/tooling-for-autocompose "Tooling for AutoCompose") | |
| **Auto-Pilot Greetings** | [Auto-Pilot Greetings for AutoCompose](/autocompose/feature-releases/auto-pilot-greetings-for-autocompose "Auto-Pilot Greetings for AutoCompose") | Available for all implementation types of AutoCompose |
| **Health Check API** | [Health Check API](/autocompose/feature-releases/health-check-api "Health Check API") | |
| **Sandbox for AutoCompose** | [Sandbox for AutoCompose](/autocompose/feature-releases/sandbox-for-autocompose "Sandbox for AutoCompose") | |

# Auto-Pilot Greetings for AutoCompose

Source: https://docs.asapp.com/autocompose/feature-releases/auto-pilot-greetings-for-autocompose

## Feature Release

This is the announcement for an upcoming ASAPP feature. Your ASAPP account team will provide a target release date and can direct you to more detailed information as needed.

## Overview

Sending a prompt and personalized greeting message is critical to making a great first impression with a newly assigned customer. However, at the moment of assignment, agents are often in the middle of other important tasks. Switching context to get caught up on the conversation can be disruptive to the agent's productivity.

With Auto-Pilot Greetings for AutoCompose, agents can configure an adaptive greeting message which will auto-send upon issue assignment following a configurable timeout period. The greeting can optionally use the customer's first name when it's available. Agents retain control of the feature: they can turn Auto-Pilot Greetings on/off for themselves, individualize their automatically composed greeting messages, and intervene to either cancel or send the message immediately.

## Use and Impact

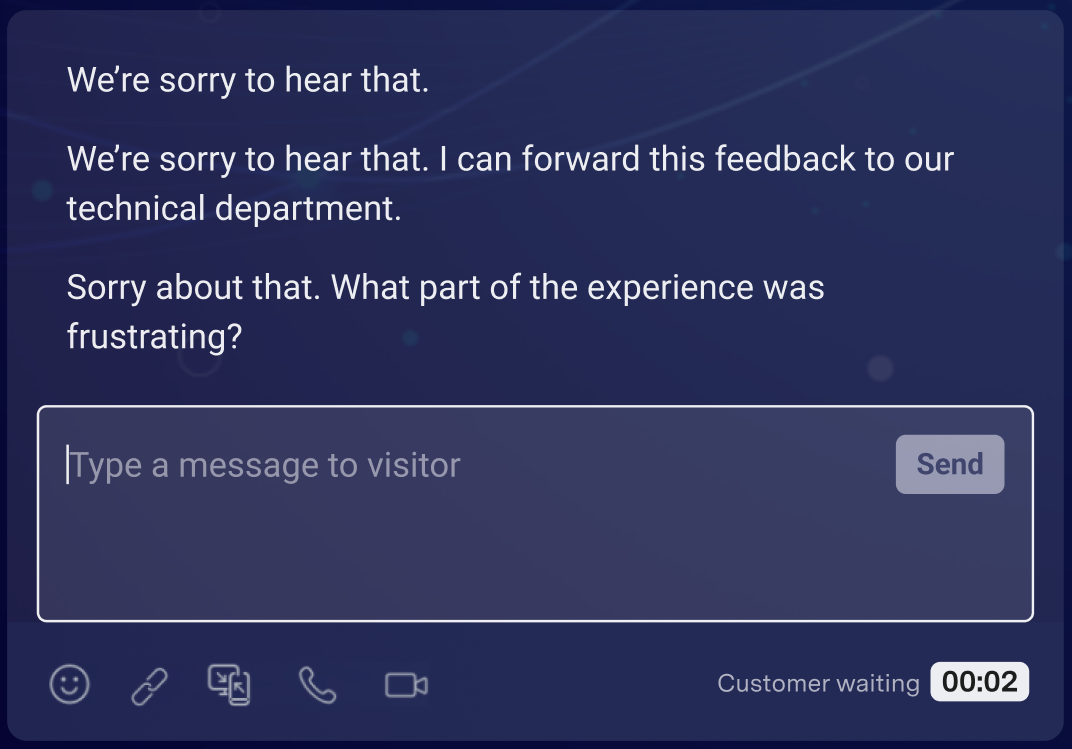

Auto-Pilot Greetings automates agents' initial interaction with customers, freeing them for higher value tasks.

AutoCompose's Auto-Pilot Greetings is intended to reduce an agent's average response time across concurrent issues by freeing up their attention, and ultimately reduce average handle time.

## How It Works

For each conversation, Auto-Pilot Greetings follows a simple sequence:

1. Customer enters the chat

2. Auto-Pilot Greeting message and countdown timer appear above the composer

3. Timer counts down to zero

4. Greeting message is sent
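The sequence above, together with the agent's ability to cancel or send immediately, can be modeled as a small state machine. This is an illustrative sketch only: the class, method, and state names are assumptions for the example and do not describe ASAPP's implementation.

```python
from dataclasses import dataclass


@dataclass
class AutoPilotGreeting:
    """Tracks one greeting from customer entry until it is sent or canceled."""

    message: str
    seconds_remaining: float
    state: str = "counting_down"  # becomes "sent" or "canceled"

    def tick(self, elapsed_seconds: float) -> None:
        """Advance the countdown; the greeting is sent when it reaches zero."""
        if self.state != "counting_down":
            return
        self.seconds_remaining = max(0.0, self.seconds_remaining - elapsed_seconds)
        if self.seconds_remaining == 0.0:
            self.state = "sent"

    def cancel(self) -> None:
        """Agent cancels (for example by typing) before the timer elapses."""
        if self.state == "counting_down":
            self.state = "canceled"

    def send_now(self) -> None:
        """Agent sends the greeting immediately instead of waiting."""
        if self.state == "counting_down":
            self.seconds_remaining = 0.0
            self.state = "sent"
```

Once a greeting is sent or canceled, further `tick`, `cancel`, or `send_now` calls are ignored, so a late timer event cannot re-send a canceled greeting.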

### Configurations

**Global On/Off**

* This setting configures whether Auto-Pilot Greetings will be enabled or disabled for all agents.

* Customers must reach out to their ASAPP contact to configure Auto-Pilot Greetings globally for their program.

**Global Default Auto-Pilot Greeting Messages**

* Two default Auto-Pilot Greetings messages must be configured: one version where the customer's name is known and one where the customer's name is not known.

* Customers must reach out to their ASAPP contact to configure Global Default Auto-Pilot Greeting messages.

**Agent Specific Auto-Pilot Greeting Messages and On / Off Settings**

* Agents can optionally customize the Auto-Pilot Greeting messages composed on their behalf, and use their individualized messages in lieu of the global default messages; often agents include their name in their customized messages. Additionally, they can toggle whether Auto-Pilot Greetings is on or off for themselves by default.

* If using AutoCompose for Salesforce or LivePerson, agents configure customized messages and the on / off setting themselves through the Auto-Pilots tab of their AutoCompose panel.

* This agent functionality can also be enabled via API for custom implementations of AutoCompose.

**Countdown Timer**

* The countdown timer is the amount of time after a customer enters the chat before an Auto-Pilot Greeting is sent. A countdown timer will display to the agent notifying them of the time remaining before auto-sending.

* Customers must reach out to their ASAPP contact to set an appropriate countdown timer value, which will apply across their AutoCompose implementation.
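The message configurations described above imply a simple precedence: an agent's customized message is used in lieu of the global default, and the "name known" variant is used only when the customer's name is available. A minimal sketch of that selection logic follows; the function signature and the `[NAME]` placeholder handling are assumptions for illustration, not ASAPP's API.

```python
from typing import Optional


def choose_greeting(
    global_known: str,
    global_unknown: str,
    customer_name: Optional[str] = None,
    agent_known: Optional[str] = None,
    agent_unknown: Optional[str] = None,
    placeholder: str = "[NAME]",
) -> str:
    """Pick the greeting template and fill in the customer's name if known."""
    if customer_name:
        # Agent customization, when present, replaces the global default.
        template = agent_known or global_known
        return template.replace(placeholder, customer_name)
    return agent_unknown or global_unknown
```

For instance, with global defaults `"Hi, [NAME]."` and `"Hi there."`, a conversation with no customer name falls back to `"Hi there."` unless the agent has set their own name-unknown message.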

## FAQs

* **What are best practices for Auto-Pilot Greetings?**

ASAPP recommends setting the default message to include questions that agents often need to ask at the beginning of a conversation to resolve an issue. For example:

> "Hi, \[NAME]. This is Robert and I'm happy to assist you today. So I can best help you, can you please provide me with your account number and a description of your issue?"

Furthermore, ASAPP recommends setting a countdown timer value in the range of 10-15 seconds. Too long of a default may increase handle times. Too short of a default may not give an agent a chance to cancel the Auto-Pilot Greeting should they so desire.

# Health Check API

Source: https://docs.asapp.com/autocompose/feature-releases/health-check-api

## Feature Release

This is the announcement for an upcoming ASAPP feature. Your ASAPP account team will provide a target release date and can direct you to more detailed information as needed.

## Overview

ASAPP provides a means for our customers to check the operational status of our API platform. Developers can ping this endpoint to verify that the ASAPP infrastructure is working as expected.

## Use and Impact

Developers can either check ad hoc if the ASAPP infrastructure is up at a given time or implement automated API monitoring to send a request to the Health Check API at a preset interval.

This feature is intended to improve developer confidence when integrating with ASAPP services. It also removes the need for developers to send requests to other ASAPP services to check their status, which may trigger errors unnecessarily.

## How It Works

Developers can run a `GET https://api.sandbox.asapp.com/v1/health` operation and check for a 200 response whose status value is either SUCCESS or FAILED, indicating the state of the core ASAPP platform.
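A monitoring check built on this endpoint might look like the sketch below. Only the URL and the SUCCESS / FAILED values come from the description above. The JSON body with a `status` field is an assumption, and any authentication headers your application requires must be supplied by the caller; consult the API reference for the actual response schema.

```python
import json
import urllib.request

HEALTH_URL = "https://api.sandbox.asapp.com/v1/health"


def parse_health(status_code: int, body: str) -> bool:
    """Return True only for an HTTP 200 whose (assumed) status field is SUCCESS."""
    if status_code != 200:
        return False
    try:
        payload = json.loads(body)
    except ValueError:
        return False
    # Assumption: the response is a JSON object with a top-level "status" key.
    return isinstance(payload, dict) and payload.get("status") == "SUCCESS"


def check_health(headers=None, timeout=5.0) -> bool:
    """Send the GET request; treat network and HTTP errors as unhealthy."""
    request = urllib.request.Request(HEALTH_URL, headers=headers or {}, method="GET")
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return parse_health(response.status, response.read().decode("utf-8"))
    except OSError:  # covers URLError, HTTPError, and timeouts
        return False
```

Keeping `parse_health` separate from the network call makes the interpretation of the response easy to adjust once the real schema is confirmed, and lets a scheduler call `check_health` at whatever interval suits your monitoring.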

**Configuration**

Developers must request access to the API endpoint in the Developer Portal:

1. Access the Developer Portal.

2. Navigate to **Apps**, select your application, and authorize the Health Check API.

3. Reach out to your ASAPP account team to authorize access.

# Sandbox for AutoCompose

Source: https://docs.asapp.com/autocompose/feature-releases/sandbox-for-autocompose

## Feature Release

This is the announcement for an upcoming ASAPP feature. Your ASAPP account team will provide a target release date and can direct you to more detailed information as needed.

## Overview

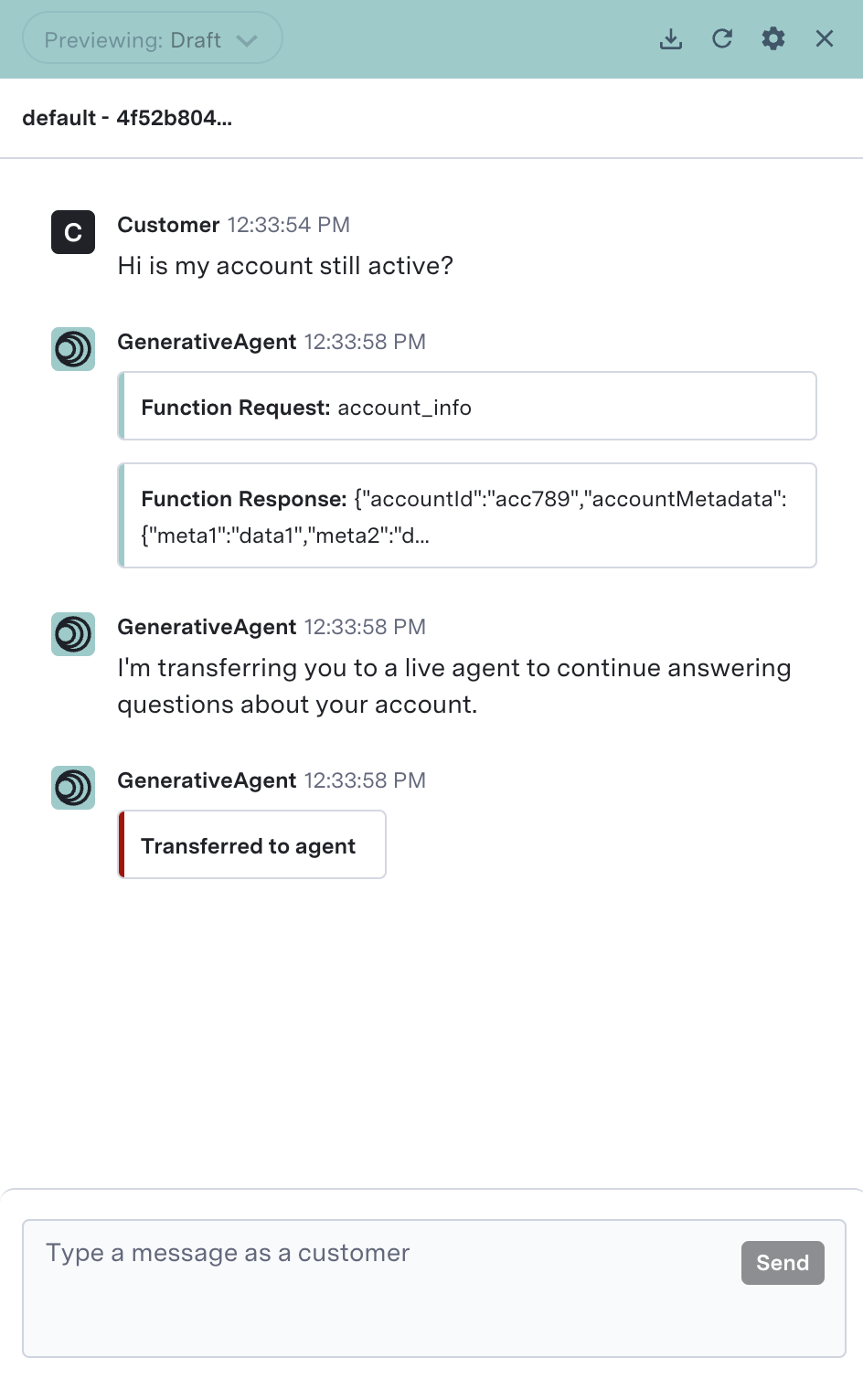

AutoCompose Sandbox is a playground designed to preview an agent's experience with AutoCompose without having to wait for an integration to complete. AutoCompose Sandbox enables administrators to step into the role of an agent and experience the real-time suggestions that AutoCompose provides.

## Use and Impact

Auto-Pilot Greetings automates agents' initial interaction with customers, freeing them for higher value tasks.

AutoCompose's Auto-Pilot Greetings is intended to reduce an agent's average response time across concurrent issues by freeing up their attention, and ultimately reduce average handle time.

## How It Works

For each conversation, Auto-Pilot Greetings follows a simple sequence:

1. Customer enters the chat

2. Auto-Pilot Greeting message and countdown timer appears above the composer

3. Timer counts down to zero

4. Greeting message is sent

### Configurations

**Global On/Off**

* This setting configures whether Auto-Pilot Greetings will be enabled or disabled for all agents.

* Customers must reach out to their ASAPP contact to configure Auto-Pilot Greetings globally for their program.

**Global Default Auto-Pilot Greeting Messages**

* Two default Auto-Pilot Greetings messages must be configured: one version where the customer's name is known and one where the customer's name is not known.

* Customers must reach out to their ASAPP contact to configure Global Default Auto-Pilot Greeting messages.

**Agent Specific Auto-Pilot Greeting Messages and On / Off Settings**

* Agents can optionally customize the Auto-Pilot Greeting messages composed on their behalf, and use their individualized messages in lieu of the global default messages; often agents include their name in their customized messages. Additionally, they can toggle whether Auto-Pilot Greetings is on or off for themselves by default.

* If using AutoCompose for Salesforce or LivePerson, agents configure customized messages and the on / off setting themselves through the Auto-Pilots tab of their AutoCompose panel.

This agent functionality can also be enabled via API for custom implementations of AutoCompose.

**Countdown Timer**

* The countdown timer is the amount of time after a customer enters the chat before an Auto-Pilot Greeting is sent. A countdown timer will display to the agent notifying them of the time remaining before auto-sending.

* Customers must reach out to their ASAPP contact to set an appropriate countdown timer value, which will apply across their AutoCompose implementation.

## FAQs

* **What are best practices for Auto-Pilot Greetings?**

ASAPP recommends setting the default message to include questions that agents often need to ask in the beginning of a call to resolve a dispute. For example:

> \*"Hi, \[NAME]. This is Robert and I'm happy to assist you today. So I can best help you, can you please provide me with your account number and a description of your issue?"

Furthermore, ASAPP recommends setting a countdown timer value in the range of 10-15 seconds. Too long of a default may increase handle times. Too short of a default may not give an agent a chance to cancel the Auto-Pilot Greeting should they so desire.

# Health Check API

Source: https://docs.asapp.com/autocompose/feature-releases/health-check-api

## Feature Release

This is the announcement for an upcoming ASAPP feature. Your ASAPP account team will provide a target release date and can direct you to more detailed information as needed.

## Overview

ASAPP provides a means for our customers to check the operational status of our API platform. Developers can ping this endpoint to verify that the ASAPP infrastructure is working as expected.

## Use and Impact

Developers can either check ad hoc if the ASAPP infrastructure is up at a given time or implement automated API monitoring to send a request to the Health Check API at a preset interval.

This feature is intended to improve developer confidence when integrating with ASAPP services. It also removes the need for developers to send requests to other ASAPP services to check their status, which may trigger errors unnecessarily.

## How It Works

Developers can run a `GET https://api.sandbox.asapp.com/v1/health` operation and inspect for a 200 response with either the SUCCESS or FAILED value for the status of the core ASAPP platform.

**Configuration**

Developers must request access to the API endpoint in the Developer Portal:

1. Access the Developer Portal.

2. Navigate to **Apps**, select your application, and authorize the Health Check API.

3. Reach out to your ASAPP account team to authorize access.

# Sandbox for AutoCompose

Source: https://docs.asapp.com/autocompose/feature-releases/sandbox-for-autocompose

## Feature Release

This is the announcement for an upcoming ASAPP feature. Your ASAPP account team will provide a target release date and can direct you to more detailed information as needed.

## Overview

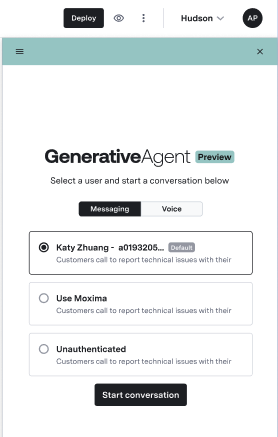

AutoCompose Sandbox is a playground designed to preview an agent's experience with AutoCompose without having to wait for an integration to complete. AutoCompose Sandbox enables administrators to step into the role of an agent and experience the real-time suggestions that AutoCompose provides.

## Use and Impact

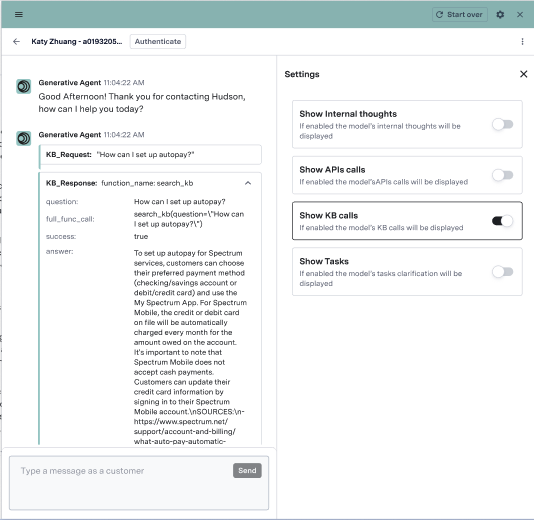

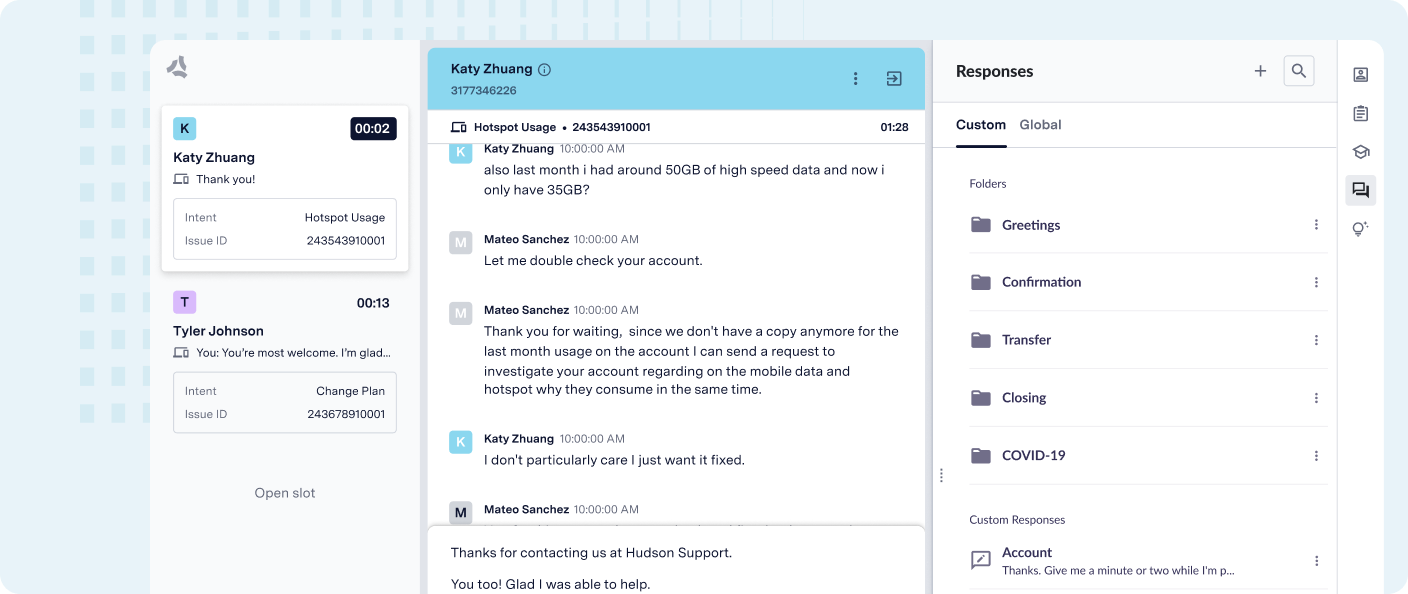

AutoCompose Sandbox enables administrators to visualize the experience that AutoCompose gives to agents presented in two areas of the screen: in the composer, which is where the agent types, and in the AutoCompose panel on the right-hand side.

Beyond showcasing the operational features of AutoCompose, the sandbox environment also delivers a proposed UI design. This design is rooted in thorough research on agent experience, resulting in increased product adoption and time savings.

AutoCompose provides both complete response suggestions above the composer and in-line suggestions while typing. The sandbox replicates the experience an agent would get, receiving suggestions from the response library. As AutoCompose learns from agents' most common responses, the response library will grow, which will be reflected in the suggestions provided in the sandbox.

## How It Works

Watch the following video walkthrough to learn how to use the AutoCompose Sandbox:

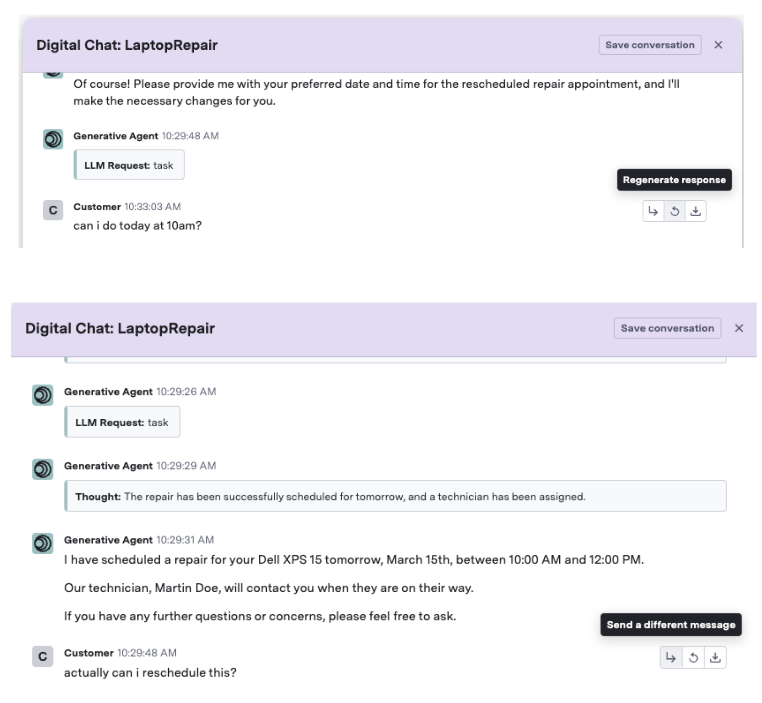

AutoCompose Sandbox enables you to play both sides of the conversation. AutoCompose won't suggest anything while simulating a customer, but suggestions will populate for the agent role.

The AutoCompose panel on the right side allows you to define and browse custom responses, which can then be accessed as suggestions in the composer. It also enables browsing of the global response list. Finally, it allows an agent to customize the behavior of Auto-Pilot.

As agents use the suggestions provided by AutoCompose, the response library will grow, which will be reflected in the suggestions produced by AutoCompose.

## FAQs

* **Is AutoCompose Sandbox using the same sandbox environment we have access to?**

Yes. Developers building an AutoCompose integration can use AutoCompose Sandbox to easily create conversations and later retrieve them via the unique conversation ID available in the header of each conversation.

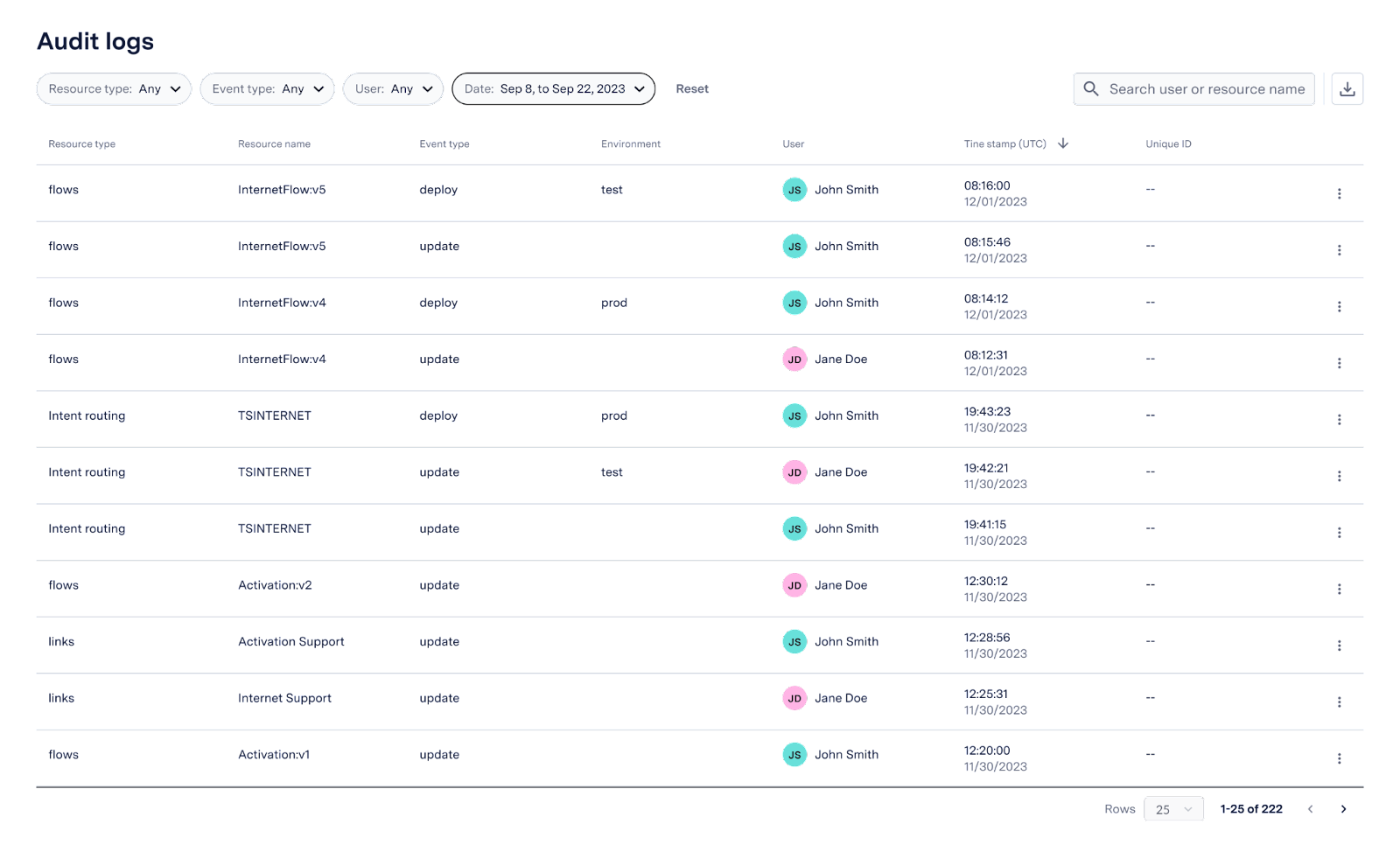

# Tooling for AutoCompose

Source: https://docs.asapp.com/autocompose/feature-releases/tooling-for-autocompose

## Feature Release

This is the announcement for an upcoming ASAPP feature. Your ASAPP account team will provide a target release date and can direct you to more detailed information as needed.

## Overview

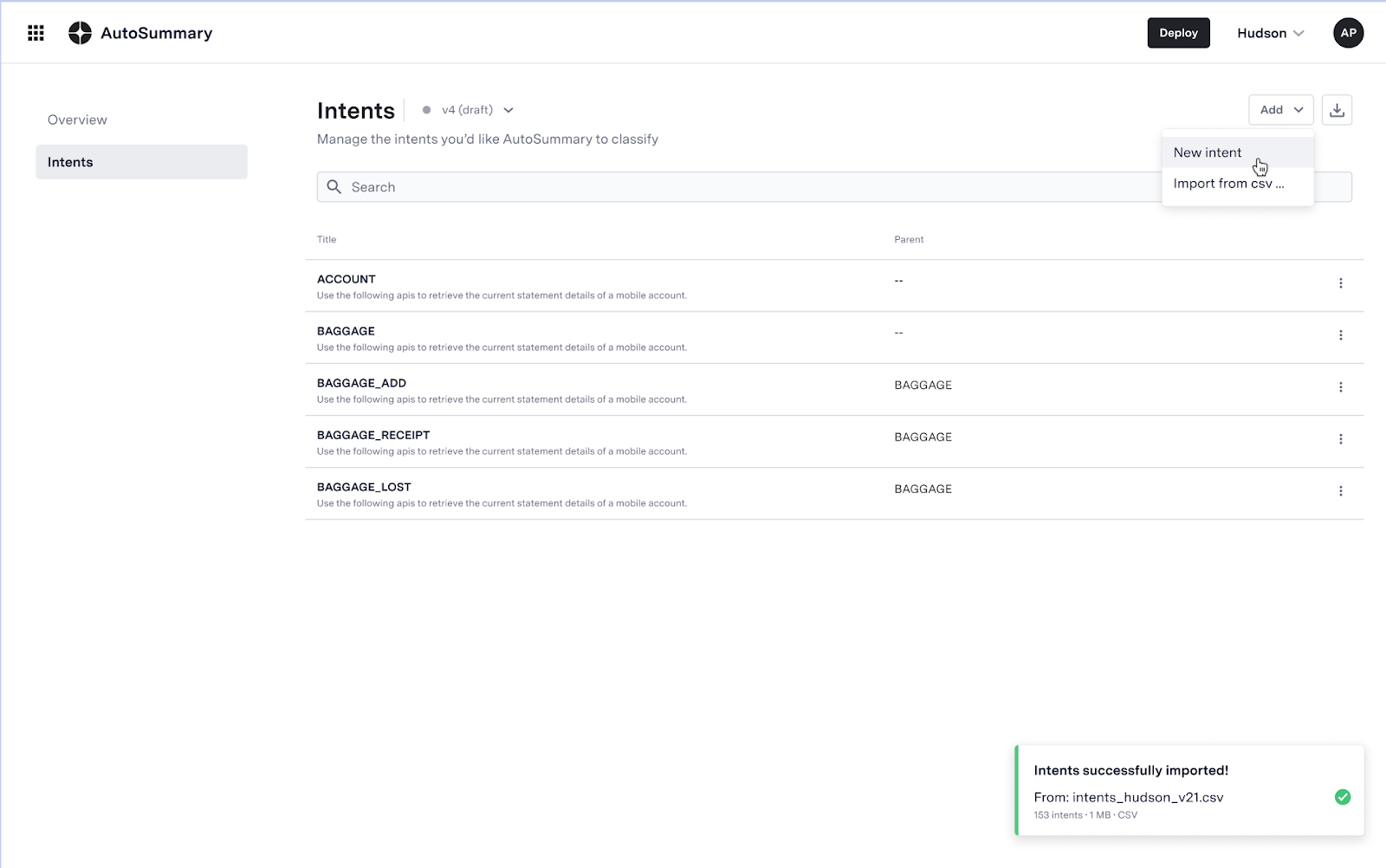

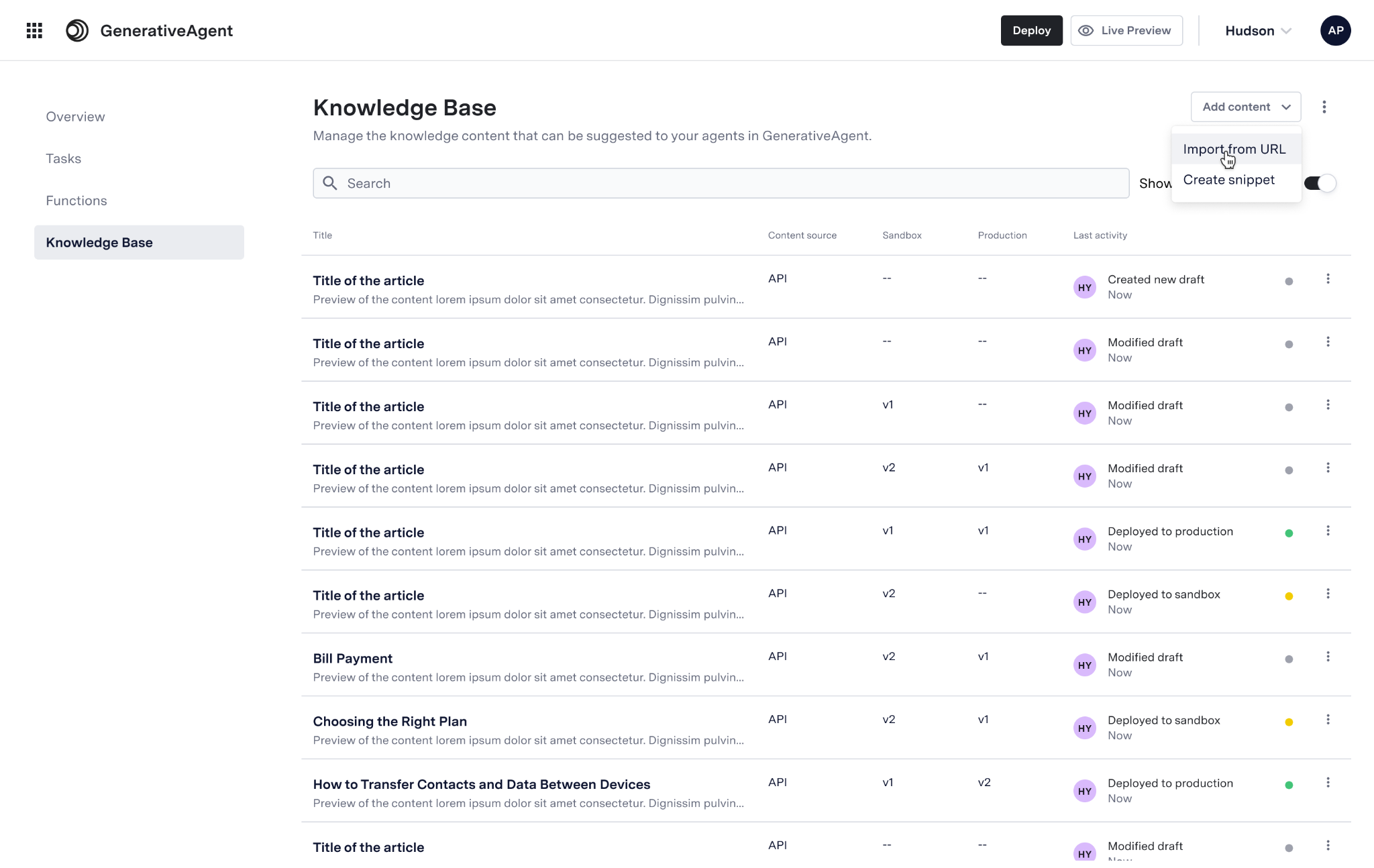

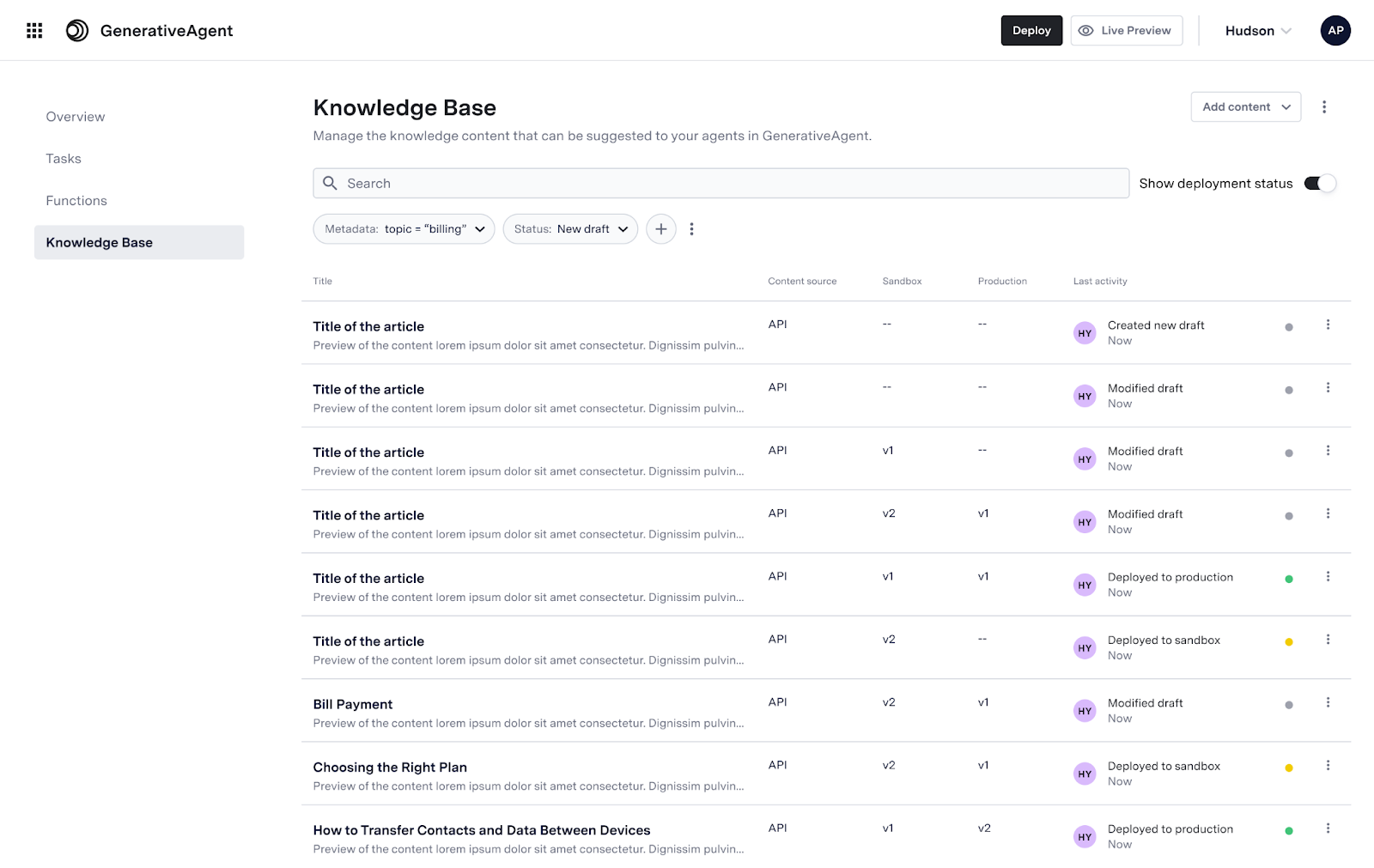

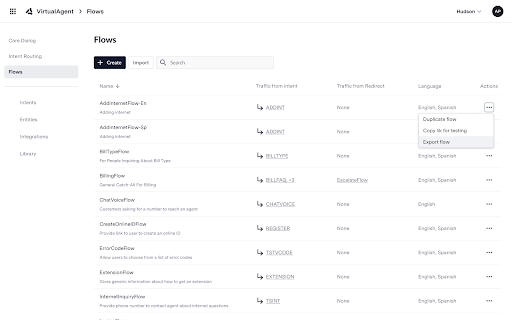

AutoCompose now supports configuration of the global response list in ASAPP's AI-Console. Users will be able to import responses in bulk, edit individual responses, and deploy responses to testing and production environments.

## Use and Impact

AutoCompose Sandbox enables administrators to visualize the experience that AutoCompose gives to agents presented in two areas of the screen: in the composer, which is where the agent types, and in the AutoCompose panel on the right-hand side.

Beyond showcasing the operational features of AutoCompose, the sandbox environment also delivers a proposed UI design. This design is rooted in thorough research on agent experience, resulting in increased product adoption and time savings.

AutoCompose provides both complete response suggestions above the composer and in-line suggestions while typing. The sandbox replicates the experience an agent would get, receiving suggestions from the response library. As AutoCompose learns from agents' most common responses, the response library will grow, which will be reflected in the suggestions provided in the sandbox.

## How It Works

Watch the following video walkthrough to learn how to use the AutoCompose Sandbox:

AutoCompose Sandbox enables you to play both sides of the conversation. AutoCompose won't suggest anything while simulating a customer, but suggestions will populate for the agent role.

The AutoCompose panel situated on the right side allows you to define and browse custom responses, which can then be accessed as suggestions in the composer. It also enables browsing of the global response list that has been defined globally. Finally, it allows an agent to customize the behavior of AutoPilot.

As agents use the suggestions provided by AutoCompose, the response library will grow, which will be reflected in the suggestions produced by AutoCompose.

## FAQs

* **Is AutoCompose Sandbox using the same sandbox environment we have access to?**

Yes. Developers building an AutoCompose integration can use AutoCompose Sandbox to easily create conversations and later retrieve them via the unique conversation ID available in the header of each conversation.

# Tooling for AutoCompose

Source: https://docs.asapp.com/autocompose/feature-releases/tooling-for-autocompose

## Feature Release

This is the announcement for an upcoming ASAPP feature. Your ASAPP account team will provide a target release date and can direct you to more detailed information as needed.

## Overview

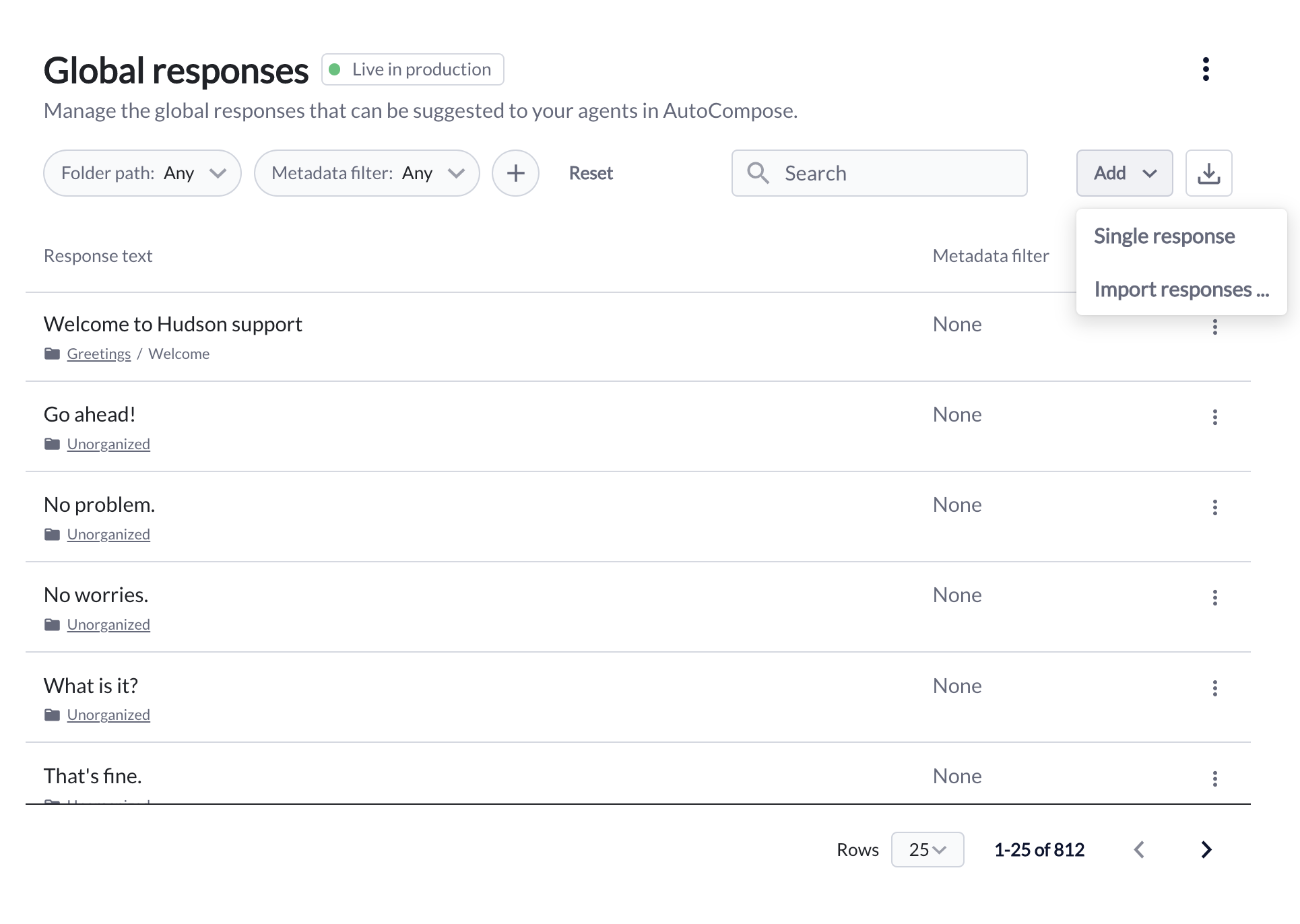

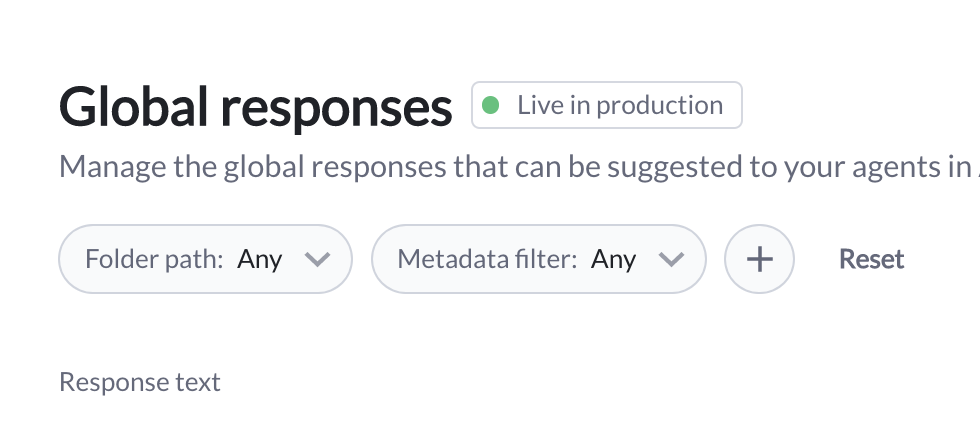

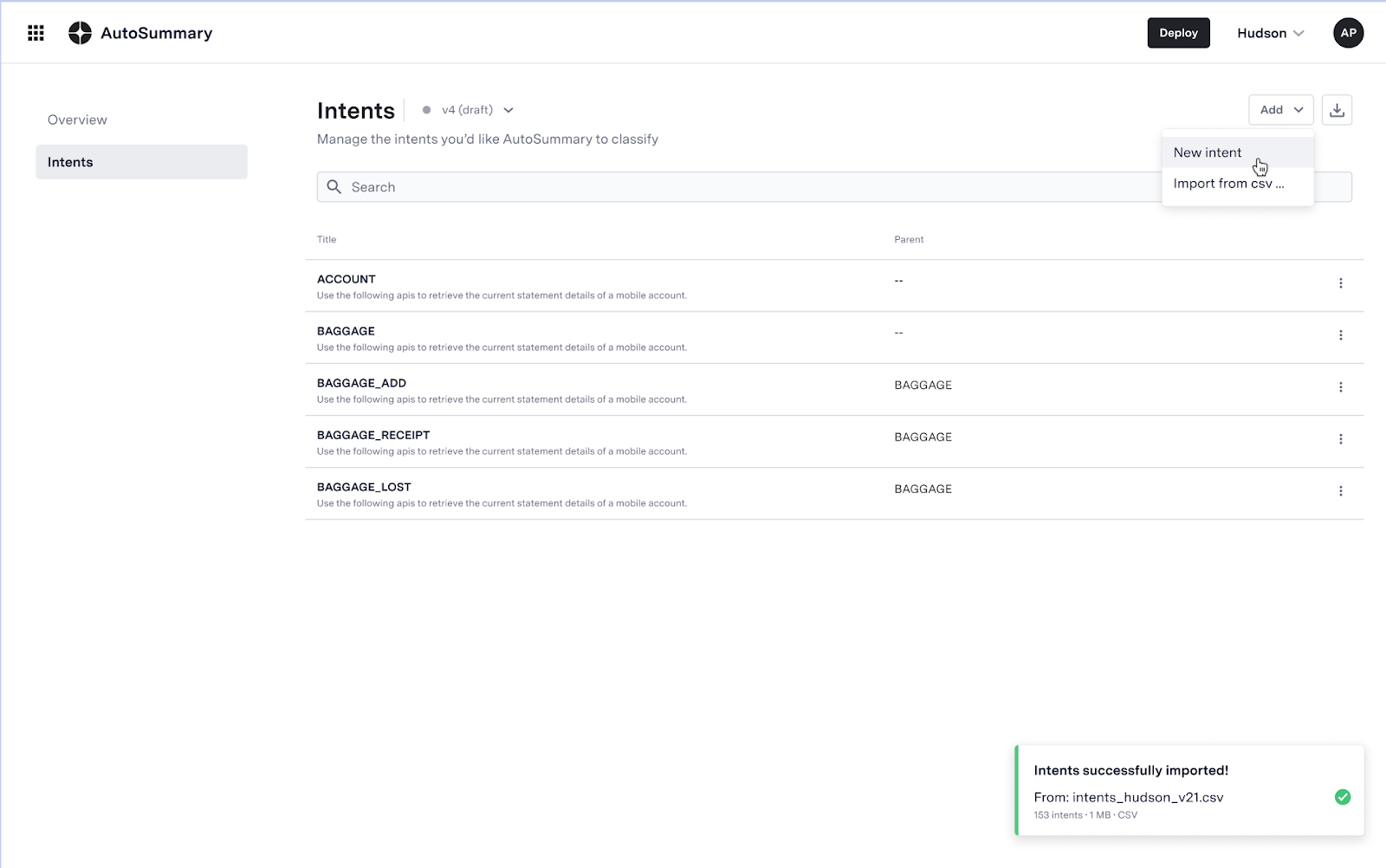

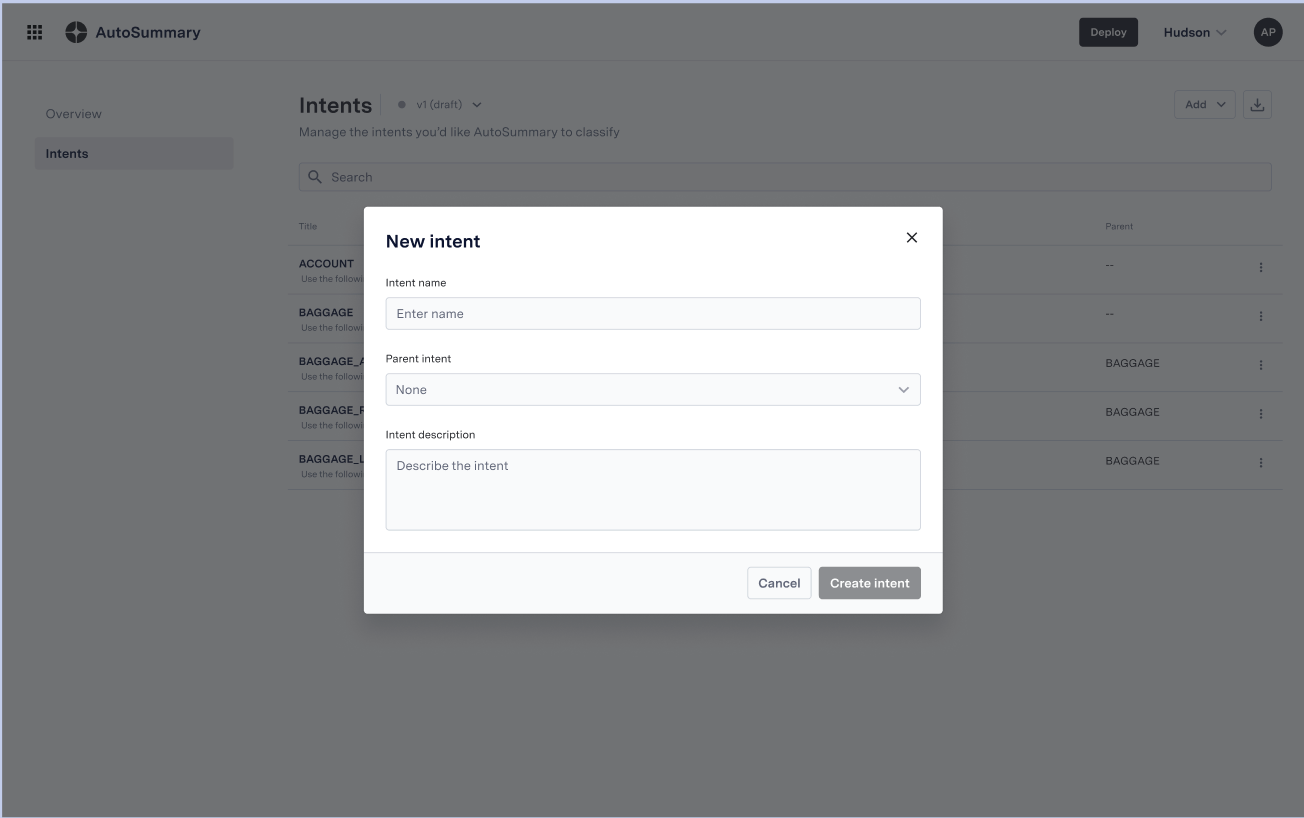

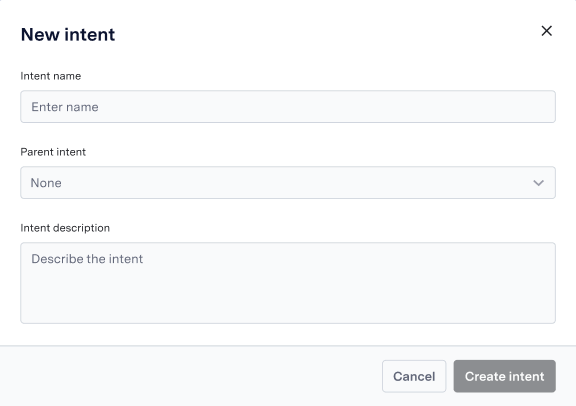

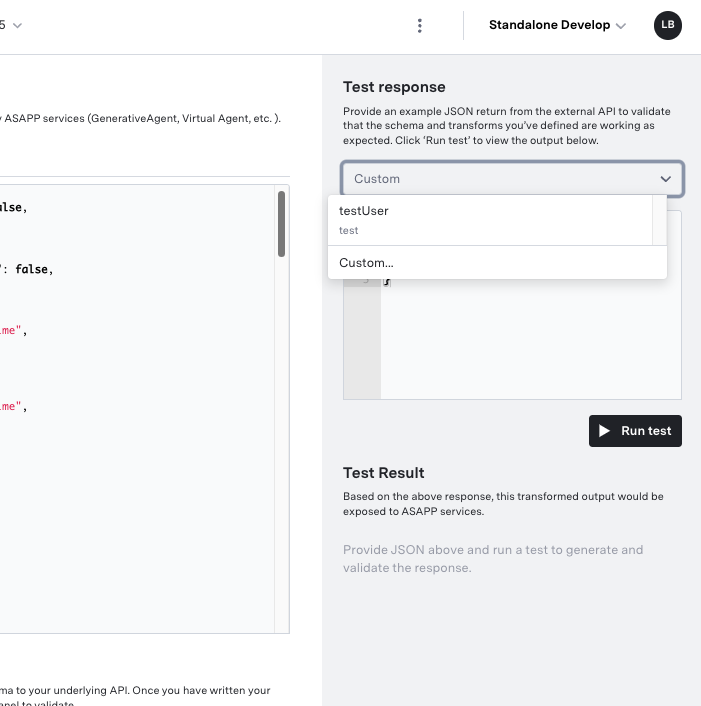

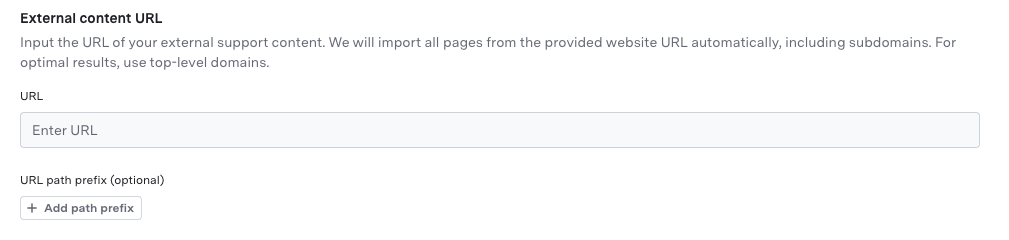

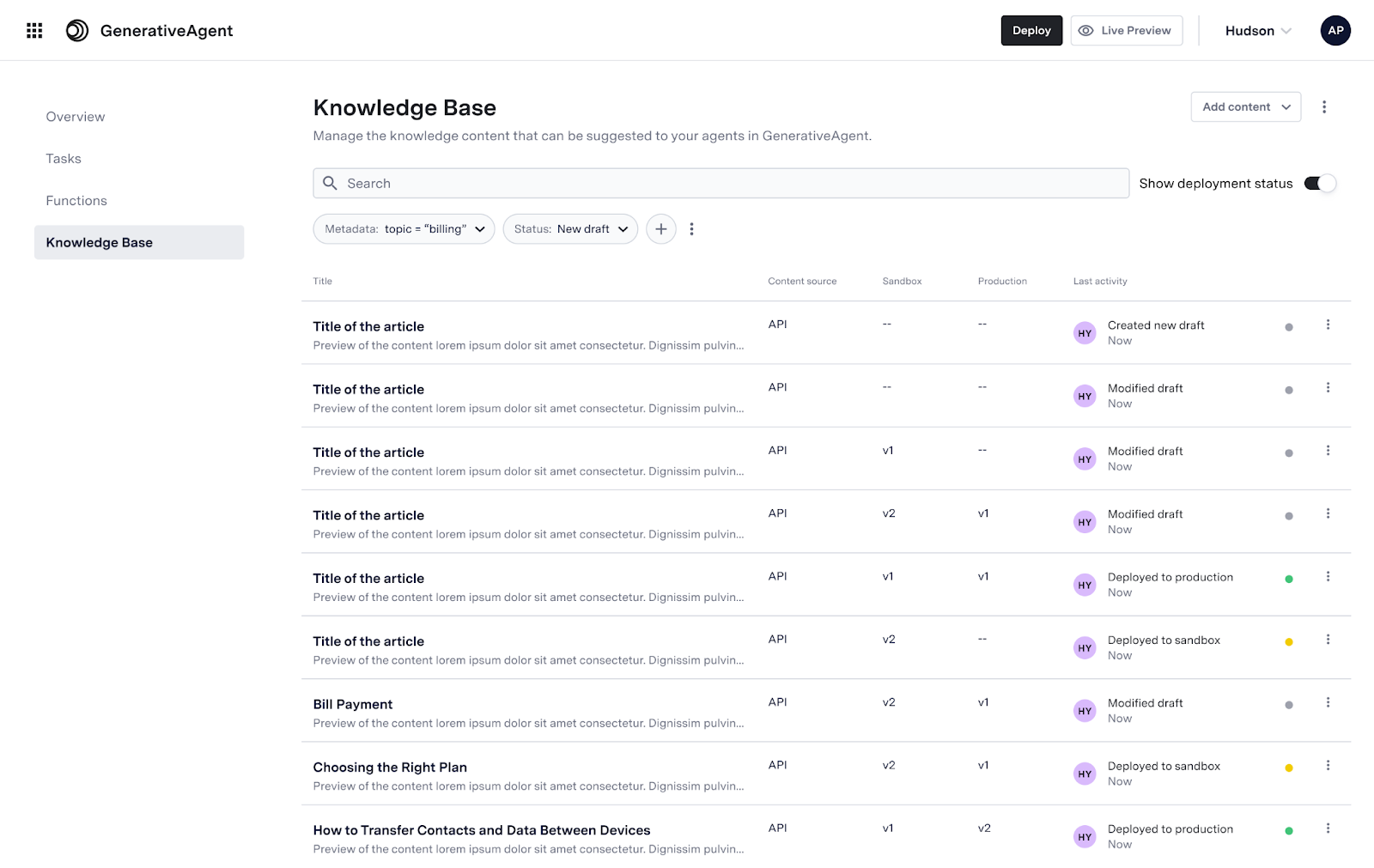

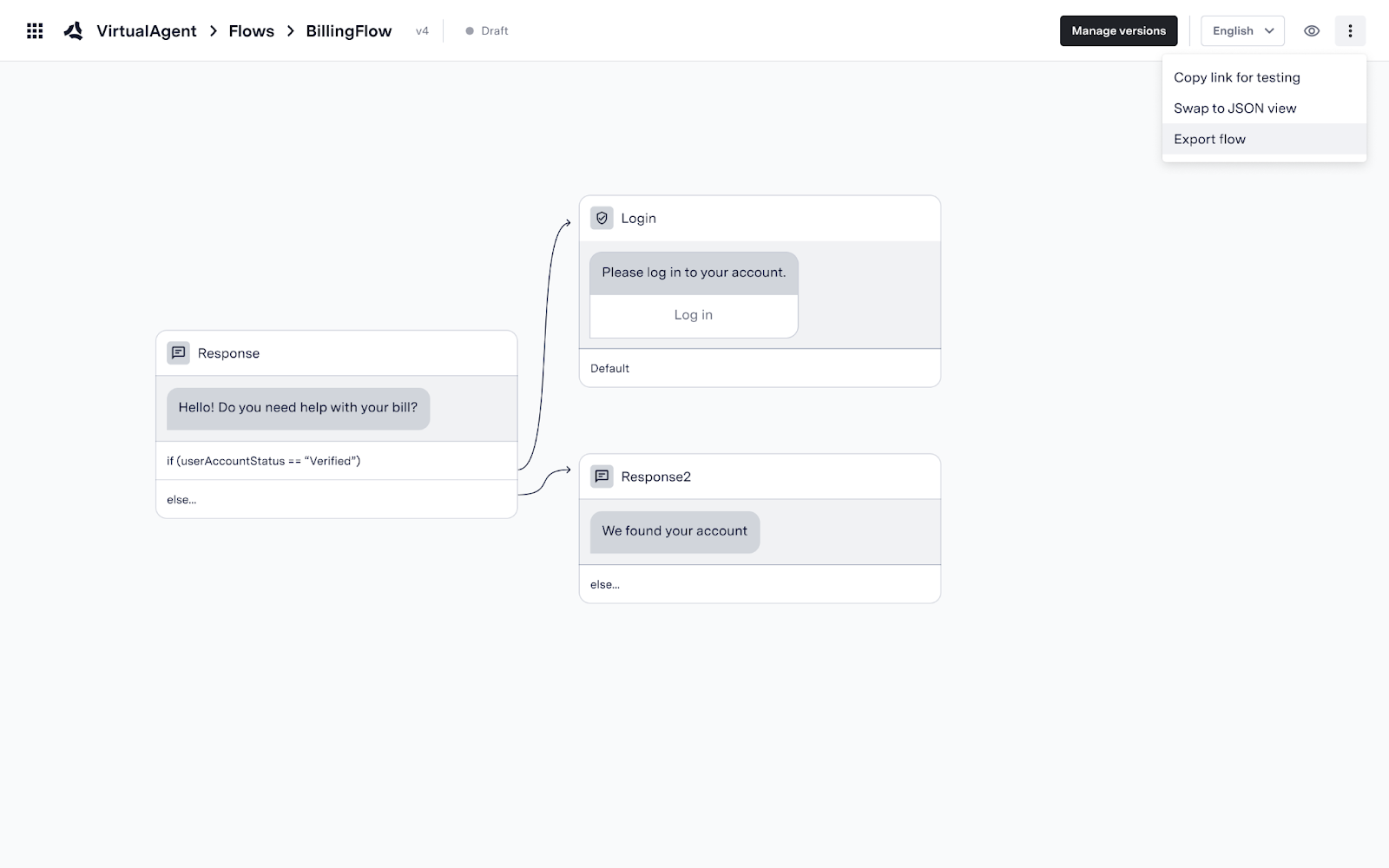

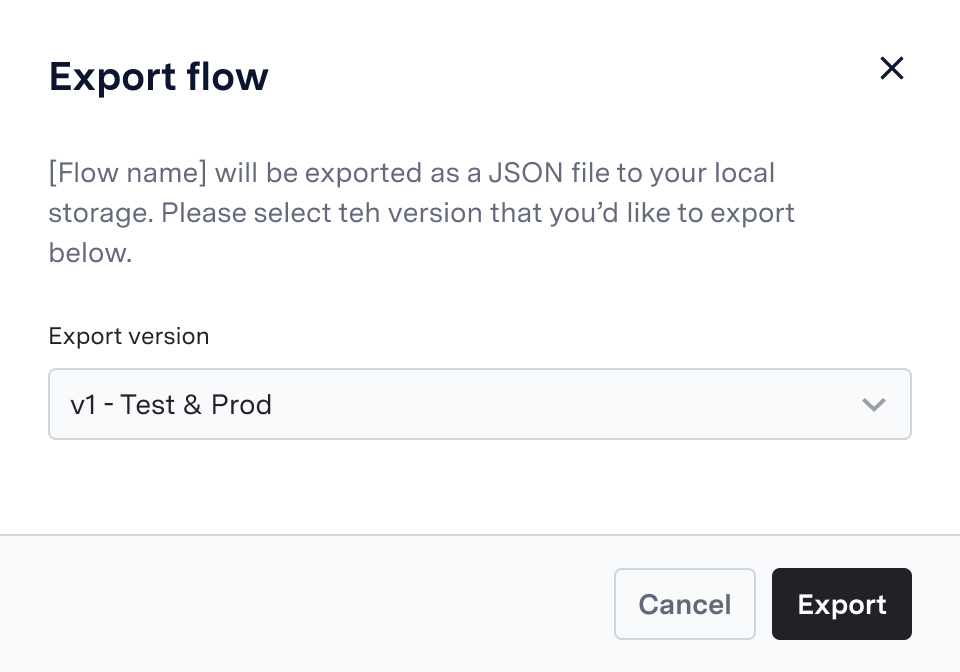

AutoCompose now supports configuration of the global response list in ASAPP's AI-Console. Users will be able to import responses in bulk, edit individual responses, and deploy responses to testing and production environments.

## Use and Impact

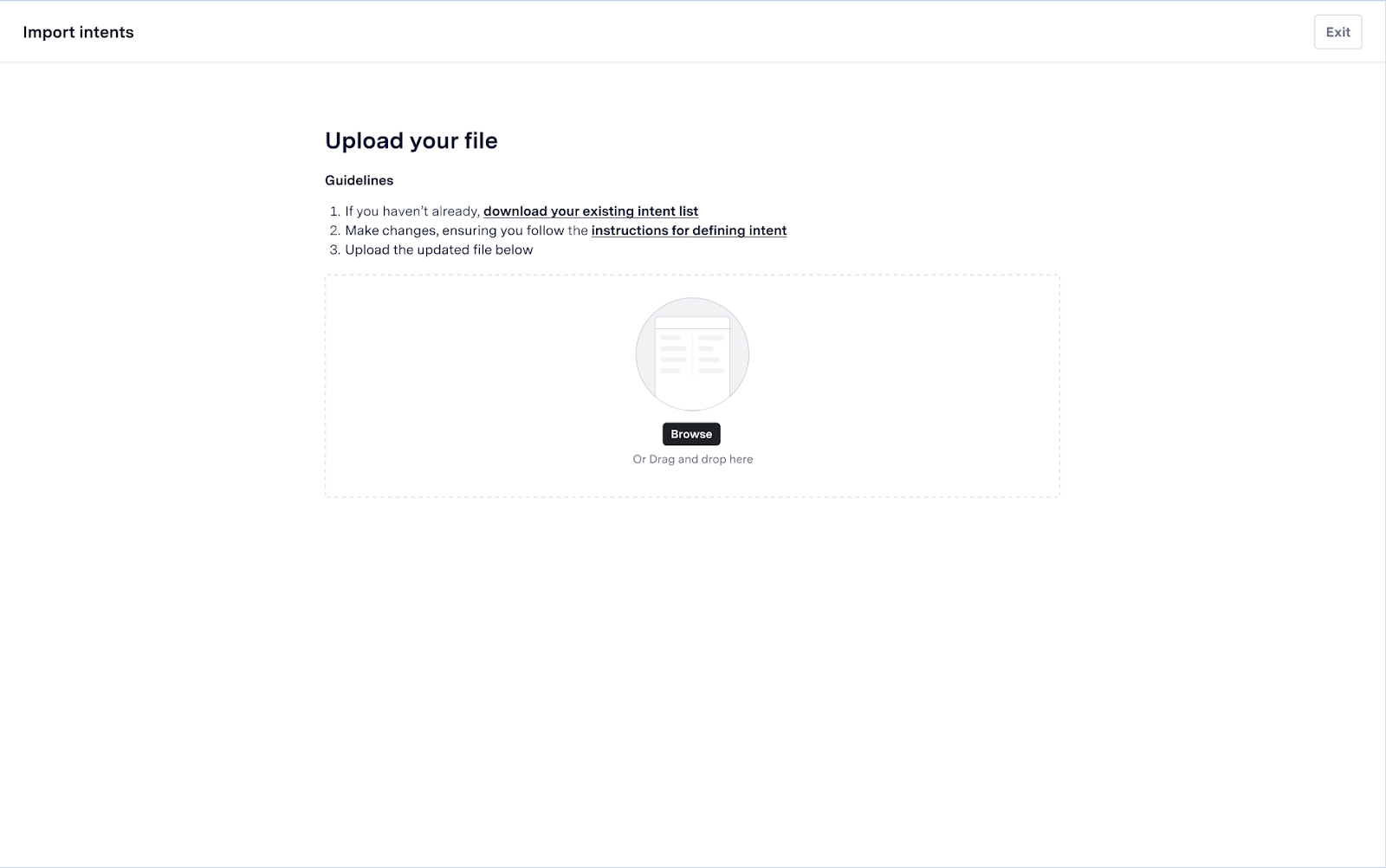

ASAPP's machine learning models powering AutoCompose use the global response list (in addition to custom responses and organic responses) to select the response that is most likely to be said by the agent. Making ongoing updates to the global response list is essential to ensuring agents receive relevant suggestions.

Global responses can be configured at two levels:

1. **Bulk uploads**: This method is best suited for large volumes of changes. Users first make edits to a .csv file offline containing the full global response list, then upload the file as a new version of the list.

2. **Targeted edits**: This method is best suited for small volumes of changes. Users make edits directly in the AI-Console UI for each individual response they wish to change.

By providing ASAPP customers this self-serve capability, teams can iterate more quickly on the response list, ensuring improved coverage of situations encountered by agents and timely updates that keep pace with ongoing changes in the business.

## How It Works

Watch the video walkthrough below to learn how to manage global responses in AI-Console:

**Configurable Response Elements**

Users can configure four data elements for each global response:

* **Response text**: The text of the response (required)

* **Folder path**: The hierarchy that dictates where the response resides

* **Title**: The short-form title of the response

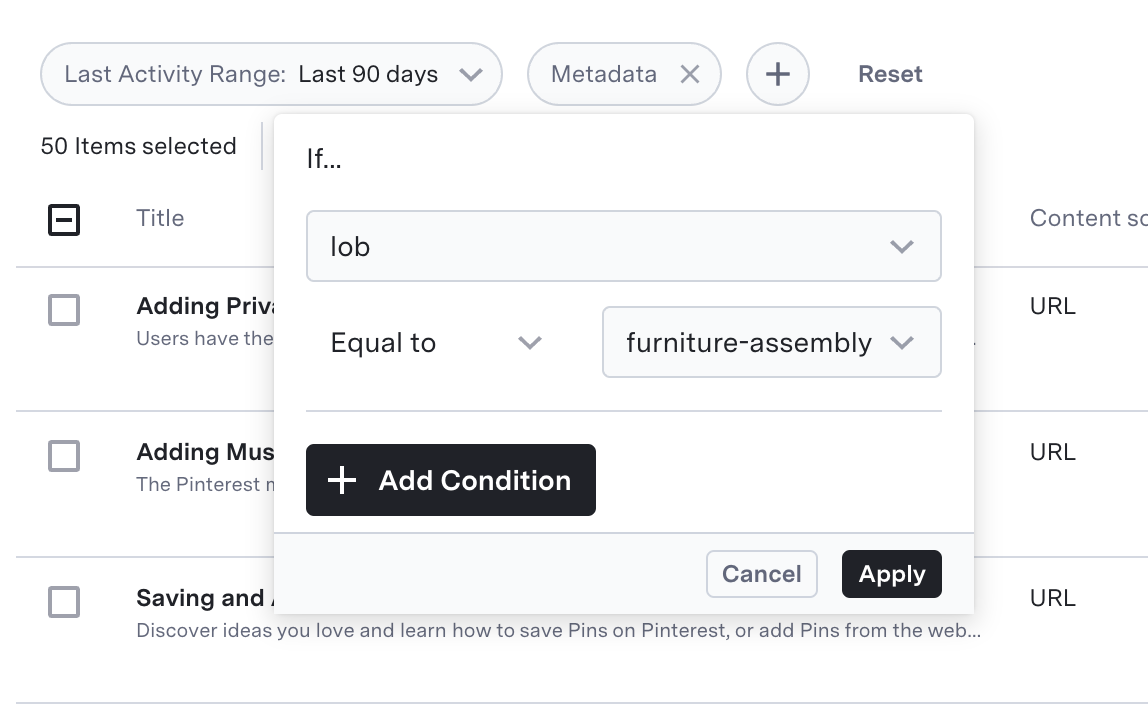

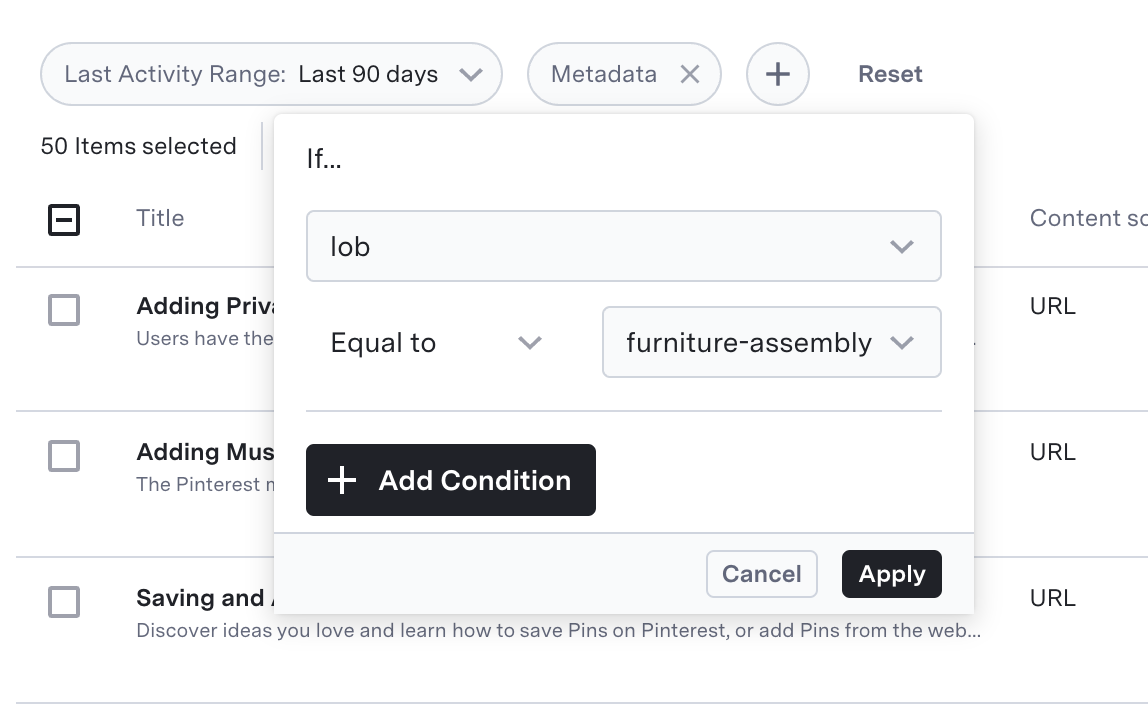

* **Metadata filters**: A key and value used to specify the set of conversations for which a response is available
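Before a bulk upload, it can be useful to sanity-check the .csv offline. The sketch below validates that every row carries the required response text. The column names (`text`, `folder_path`, `title`, `metadata_filters`) are hypothetical stand-ins for the four elements listed above; the actual file layout is defined by AI-Console.

```python
import csv
import io

# Hypothetical column names; the real .csv layout is defined by AI-Console.
COLUMNS = ["text", "folder_path", "title", "metadata_filters"]


def validate_rows(csv_text: str) -> list[str]:
    """Return one error message per problem found in the response list."""
    reader = csv.DictReader(io.StringIO(csv_text))
    missing = [name for name in COLUMNS if name not in (reader.fieldnames or [])]
    if missing:
        return [f"missing columns: {', '.join(missing)}"]
    errors = []
    for row_number, row in enumerate(reader, start=2):  # row 1 is the header
        # Response text is the only element marked as required.
        if not (row.get("text") or "").strip():
            errors.append(f"row {row_number}: response text is required")
    return errors
```

An empty list means every row has response text; the other three elements are treated as optional here, matching the list above.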

## Use and Impact

ASAPP's machine learning models powering AutoCompose use the global response list (in addition to custom responses and organic responses) to select the response that is most likely to be said by the agent. Making ongoing updates to the global response list is essential to ensuring agents are receiving relevant suggestions.Features

Global responses can be configured at two levels:

1. **Bulk uploads**: This method is best suited for large volumes of changes. Users first make edits to a .csv file offline containing the full global response list, then upload the file as a new version of the list.

2. **Targeted edits**: This method is best suited for small volumes of changes. Users make edits directly in the AI-Console UI for each individual response they wish to change.

By providing ASAPP customers this self-serve capability, teams can iterate more quickly on the response list, ensuring improved coverage of situations encountered by agents and timely updates that keep pace with ongoing changes in the business.

## How It Works

Watch the video walkthrough below to learn how to manage global responses in AI-Console:

**Configurable Response Elements**

Users can configure four data elements for each global response:

* **Response text**: The text of the response (required)

* **Folder path**: The hierarchy that dictates where the response resides

* **Title**: The short-form title of the response

* **Metadata filters**: A key and value used to specify the set of conversations for which a response is available
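The four elements above can be pictured as a simple record. The following is a minimal sketch only: the class name, field names, and the `/`-separated folder path are assumptions made for illustration, not the format AI-Console uses internally or in its .csv files. The one rule taken from the list above is that response text is required.

```python
from dataclasses import dataclass, field


@dataclass
class GlobalResponse:
    """Hypothetical in-memory model of one global response."""

    text: str                      # Response text (required)
    folder_path: str = ""          # Hierarchy where the response resides
    title: str = ""                # Short-form title
    metadata_filters: dict = field(default_factory=dict)  # key -> value

    def __post_init__(self) -> None:
        # Response text is the only required element.
        if not self.text.strip():
            raise ValueError("Response text is required")


opener = GlobalResponse(
    text="Hi, how can I help you today?",
    folder_path="Greetings/Openers",   # separator is an assumption
    title="Opener",
    metadata_filters={"queue": "general"},
)
```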

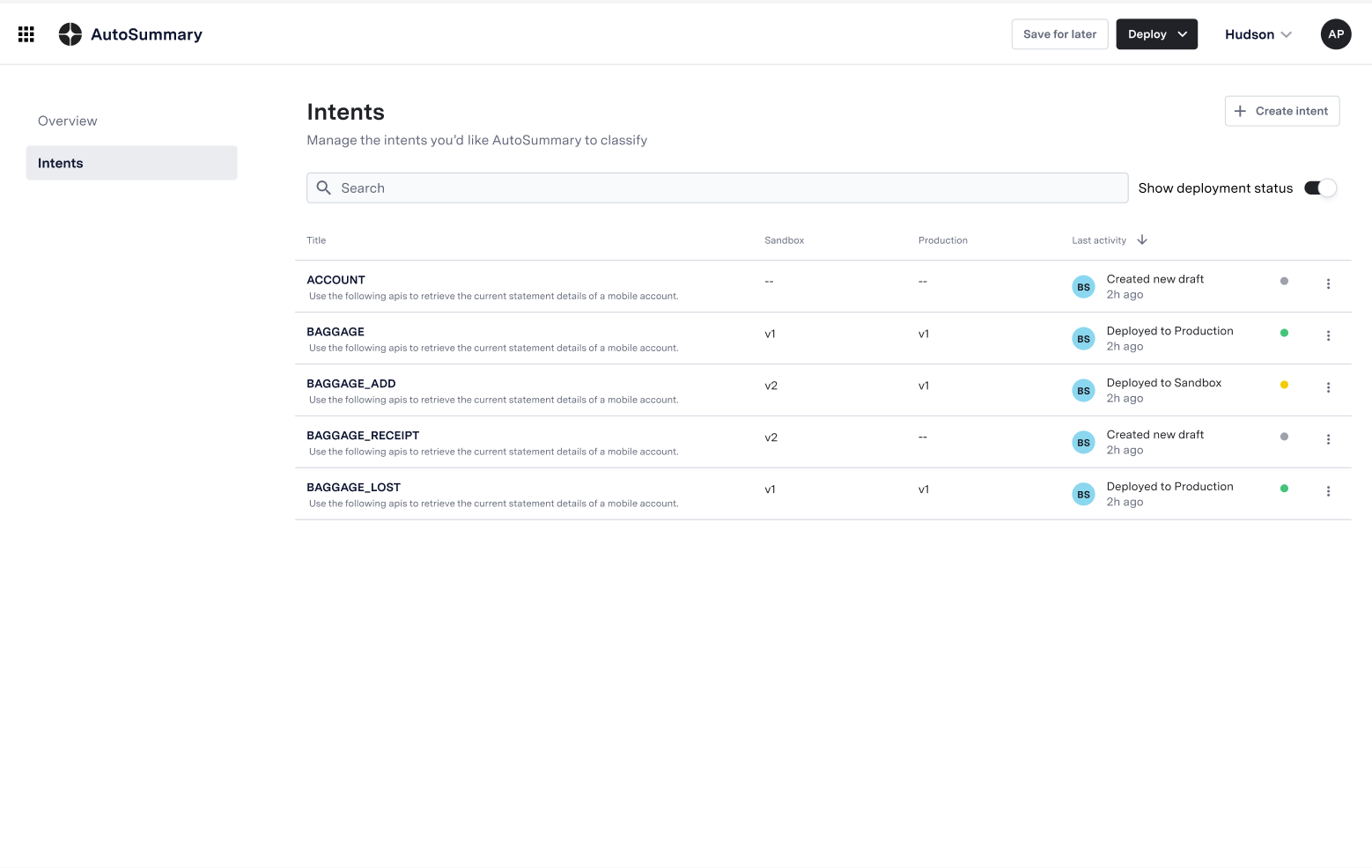

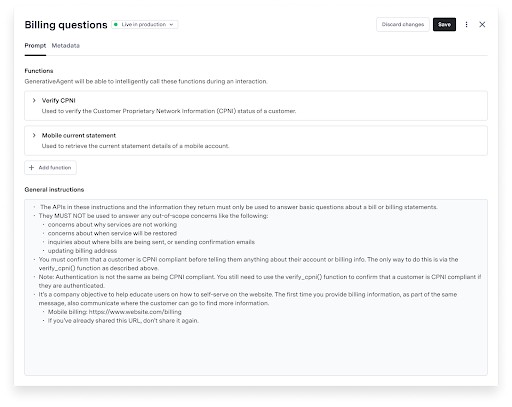

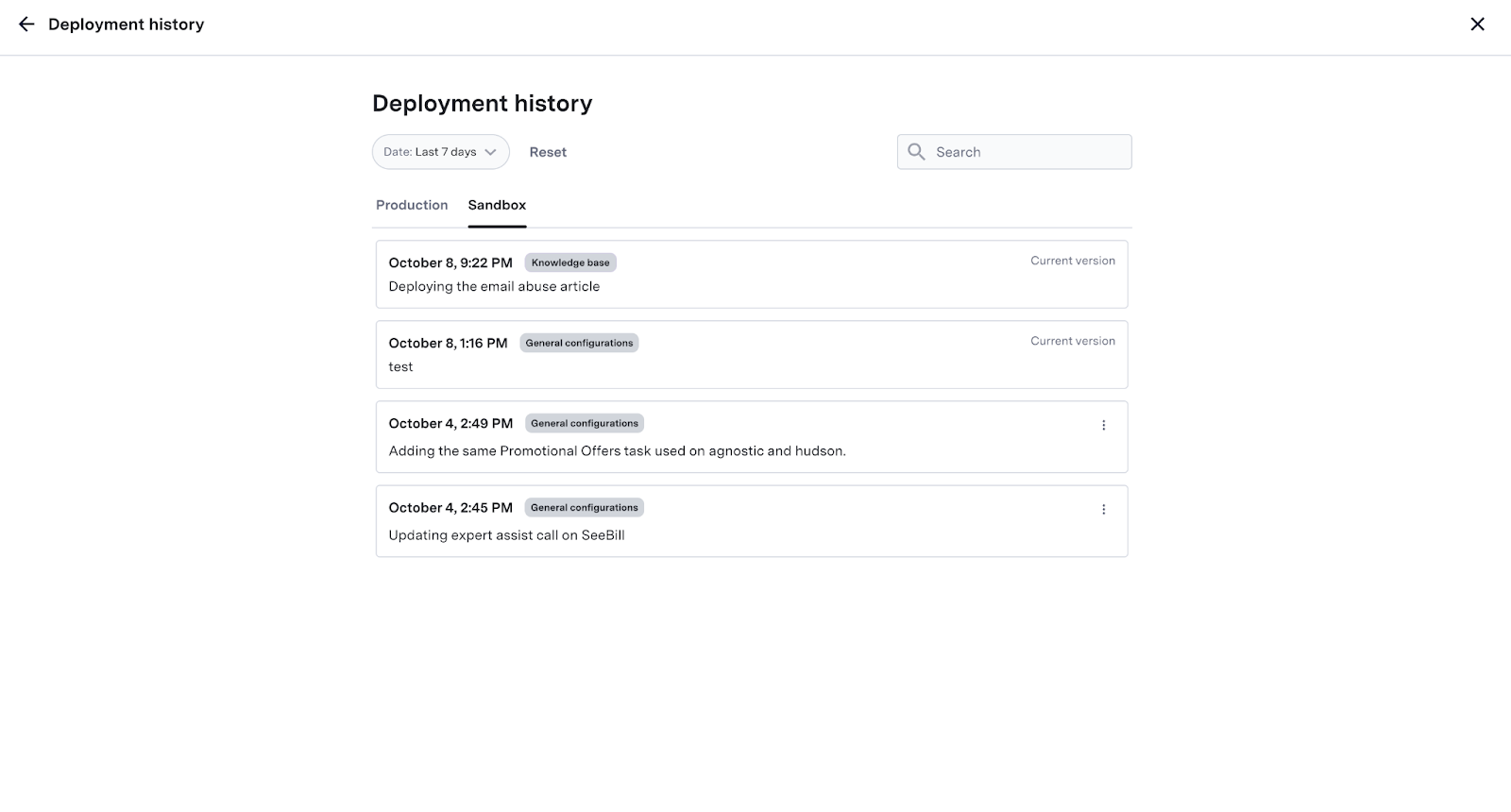

**Saving and Deploying**

Saving changes to the global response list or uploading a new list creates a new version. Past versions can also be viewed and restored as needed.

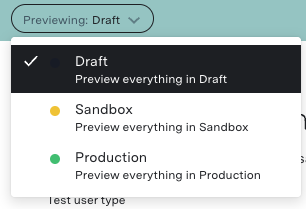

The global response list can be easily deployed into testing or production environments, with an indicator at the top of each version showing the status of the response list (e.g. Live in production).

Visit the [Tooling Guide](/autocompose/autocompose-tooling-guide "AutoCompose Tooling Guide") for more information on using AI-Console to manage the AutoCompose global response list.

## FAQs

1. **How do you access AutoCompose in AI-Console?**

Provided that you have permission to access AutoCompose in AI-Console, it will appear in the AI Services section of your homepage.

To access AutoCompose from any other AI-Console page, select the menu icon in the top left corner and then select AutoCompose.

2. **How does response metadata work?**

AutoCompose uses response metadata in two main ways:

* **As a data insert:** Variable metadata such as customer name or time of day is dynamically inserted into templated response text when a suggestion is made to the agent. Read more about templated responses in the Features section of the AutoCompose Product Guide.

* **As a filter:** Responses are only made available for suggestion when the conversation's metadata matches the attribute set for a given response (e.g. a response only being available when `queue` = `general`).
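The filter behavior can be sketched as follows. This is an illustration under stated assumptions, not ASAPP's implementation: the function name and the dictionary shapes are hypothetical, and the choices that a response with no filters is always available and that multiple filters must all match are assumptions.

```python
from typing import Mapping


def matches_filters(filters: Mapping[str, str],
                    conversation: Mapping[str, str]) -> bool:
    """Return True when every filter key/value matches the conversation.

    Assumption: an empty filter set means the response is always available.
    """
    return all(conversation.get(key) == value for key, value in filters.items())


# Hypothetical responses, each with an optional metadata filter.
responses = [
    {"text": "Thanks for contacting general support.", "filters": {"queue": "general"}},
    {"text": "Let me pull up your bill.", "filters": {"queue": "billing"}},
    {"text": "Is there anything else I can help with?", "filters": {}},
]

conversation_metadata = {"queue": "general"}

# Only responses whose filters match this conversation can be suggested.
available = [
    r["text"] for r in responses
    if matches_filters(r["filters"], conversation_metadata)
]
```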

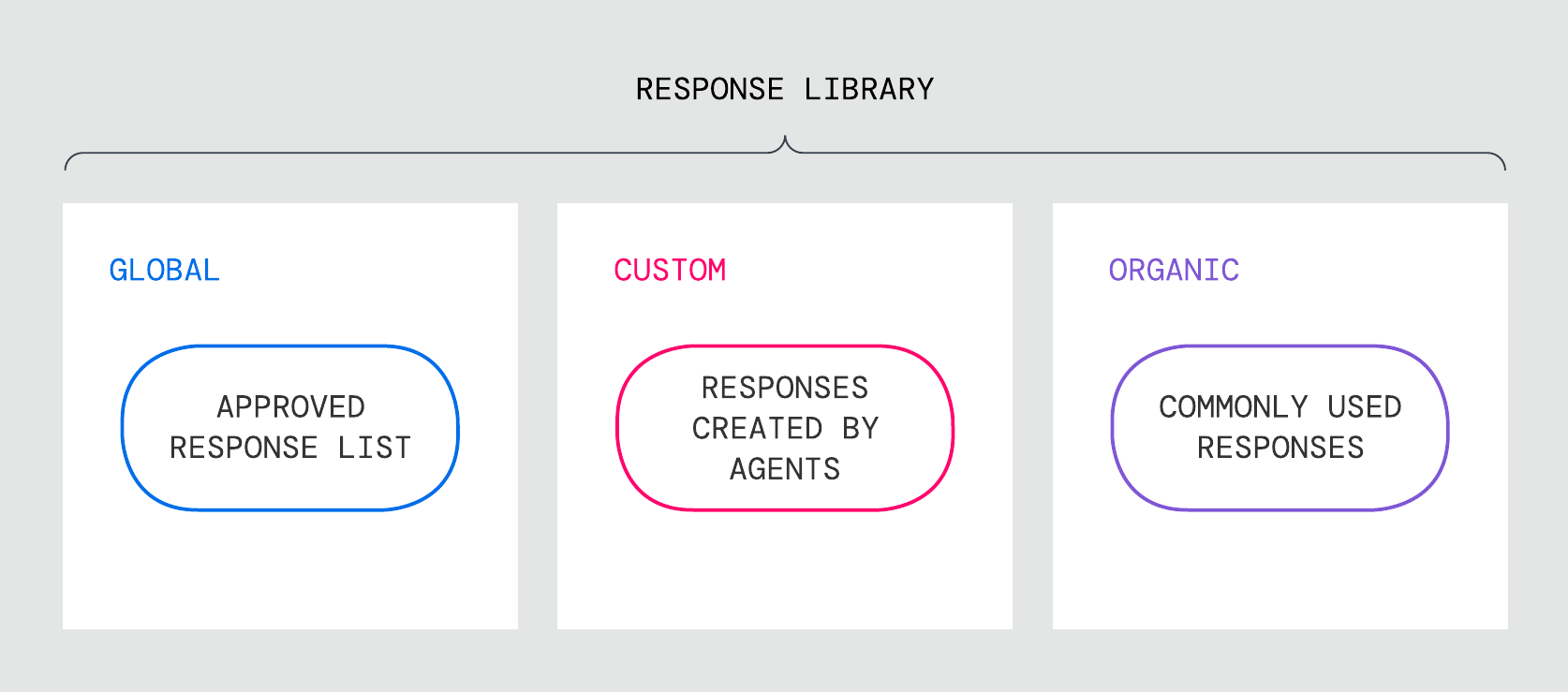

### Response Library

AutoCompose suggests responses from a library curated from a wide range of domain-specific conversation topics. The response library is a combination of three lists:

1. **Global response list:** Messages created and maintained by program administrators available to a designated full agent population.

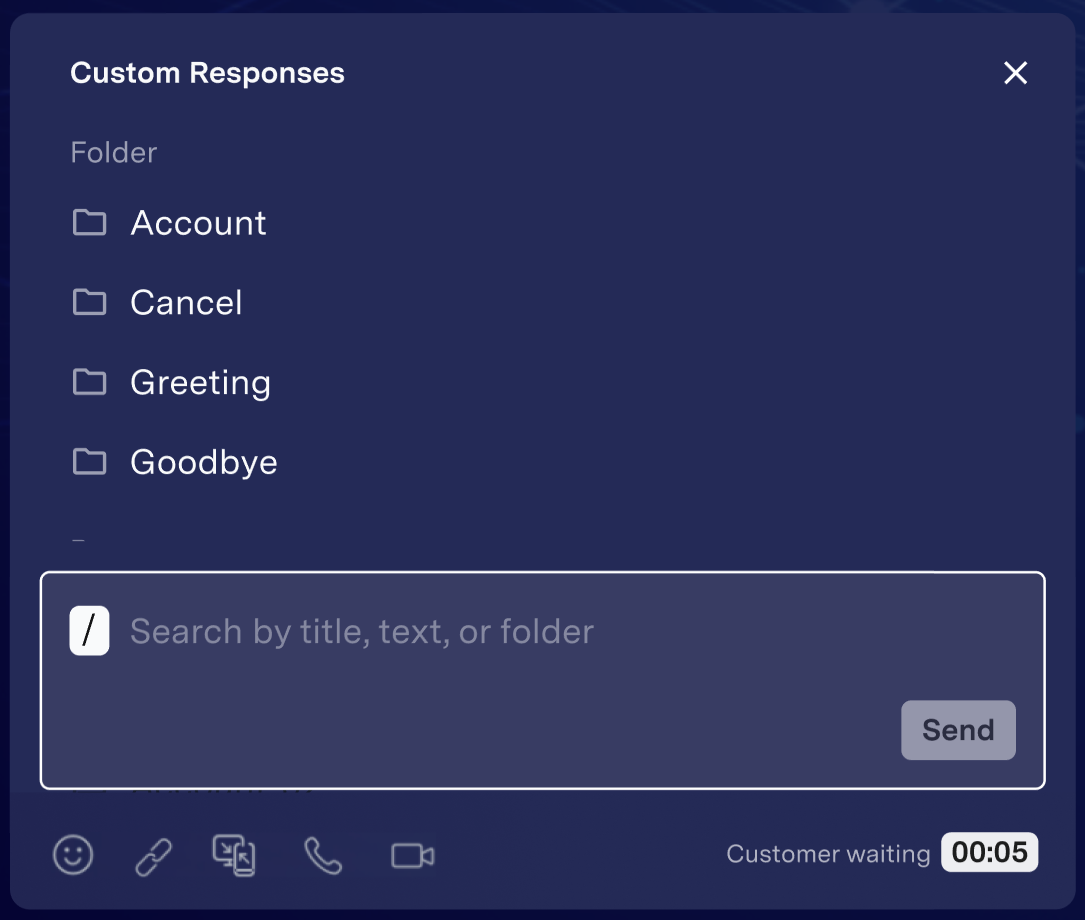

2. **Custom response list:** Messages created and maintained directly in AutoCompose by individual agents; only available to the agent that created the message.

3. **Organically growing response list:** Messages automatically created by ASAPP for each agent based on their most commonly used messages that do not already exist in the global response list or the agent's curated custom response list.

2. **Response panel:** In the response panel, agents can browse both the global and custom response lists, either using a folder hierarchy or with the provided search field.

Phrase completions are generated from common, high-frequency phrases used in each implementation's production conversations. AutoCompose only makes phrase suggestions when a sufficiently high-confidence phrase is available and only uses language found in the global and custom response library.

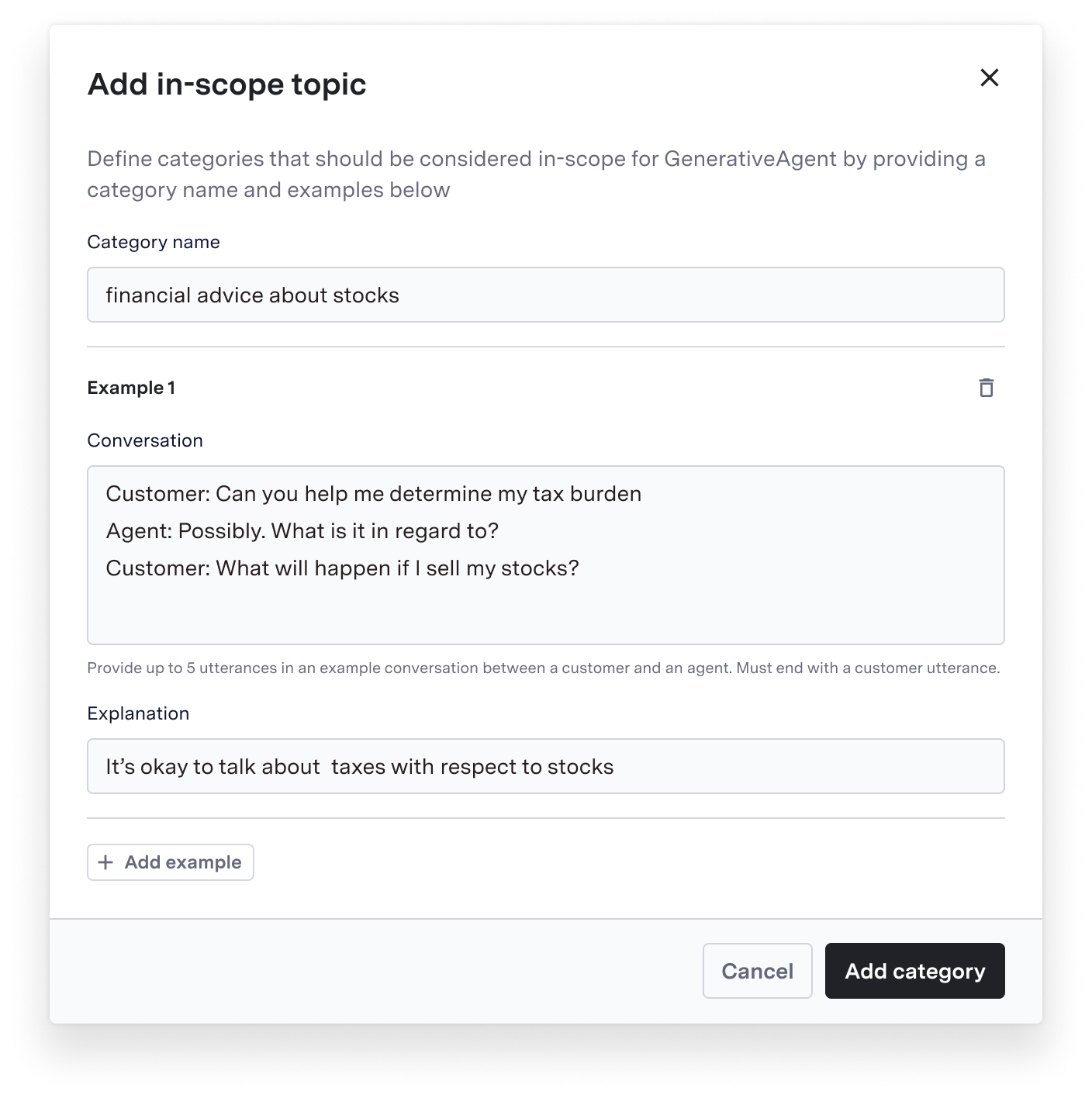

### Templated Responses

AutoCompose can dynamically insert metadata into designated templated responses in the global response list.

For example, a customer's first name can be automatically populated into this templated response: "Hi *\{name}*, how can I help you today?".

By default, AutoCompose supports inserting the customer's first name, the agent's first name, and the customer's time of day (morning, afternoon, evening) into templated responses. Time of day can be fixed to a single time zone or determined dynamically for each conversation.

AutoCompose also supports inserting custom conversation-specific metadata passed to ASAPP. For more information on custom inserts, reach out to your ASAPP account team.
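As a rough illustration of how an insert might be resolved, the sketch below fills `{name}`-style placeholders from a dictionary. The function names, the choice to leave unknown placeholders untouched, and the hour boundaries for morning, afternoon, and evening are all assumptions; AutoCompose's actual substitution logic is not described here.

```python
import re

# Matches placeholders such as {name} or {time_of_day}.
PLACEHOLDER = re.compile(r"\{(\w+)\}")


def render_template(template: str, inserts: dict) -> str:
    """Replace each known placeholder; leave unknown ones as written."""
    def substitute(match: re.Match) -> str:
        return str(inserts.get(match.group(1), match.group(0)))
    return PLACEHOLDER.sub(substitute, template)


def time_of_day(hour: int) -> str:
    """Map a local hour (0-23) to a greeting period (boundaries assumed)."""
    if 5 <= hour < 12:
        return "morning"
    if 12 <= hour < 17:
        return "afternoon"
    return "evening"


greeting = render_template(
    "Good {time_of_day}, {name}. How can I help you today?",
    {"name": "Ana", "time_of_day": time_of_day(9)},
)
```

Leaving an unknown placeholder in place, rather than raising an error, makes a missing insert visible to the agent before the message is sent; a stricter design could reject the response instead.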

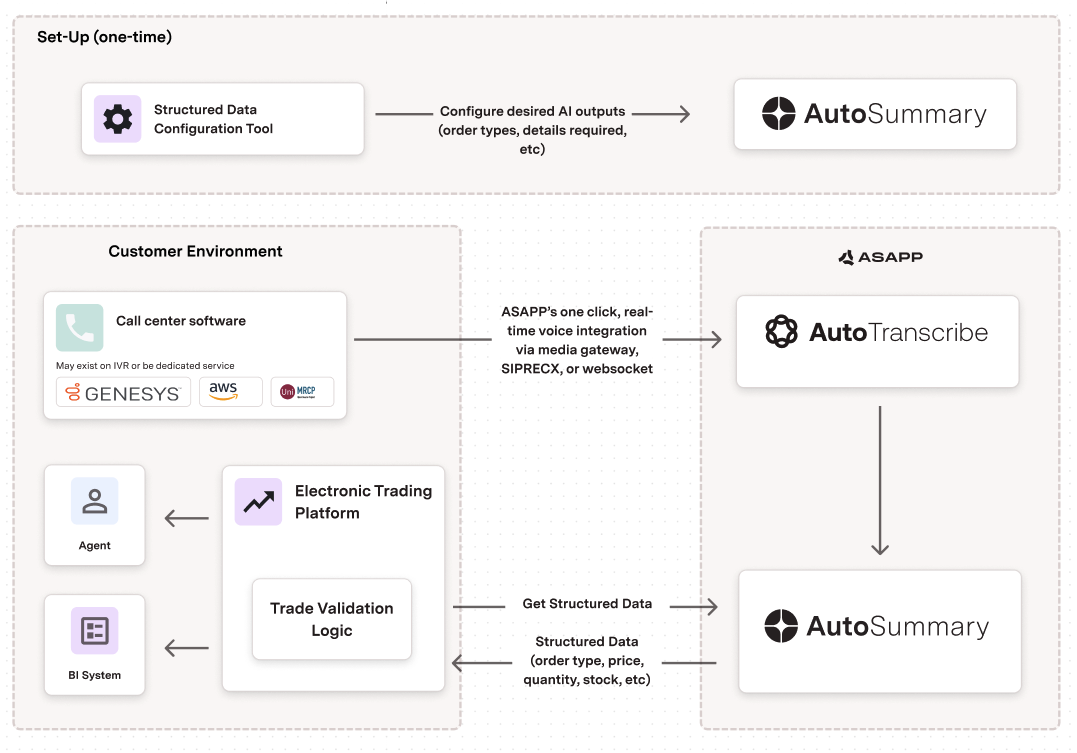

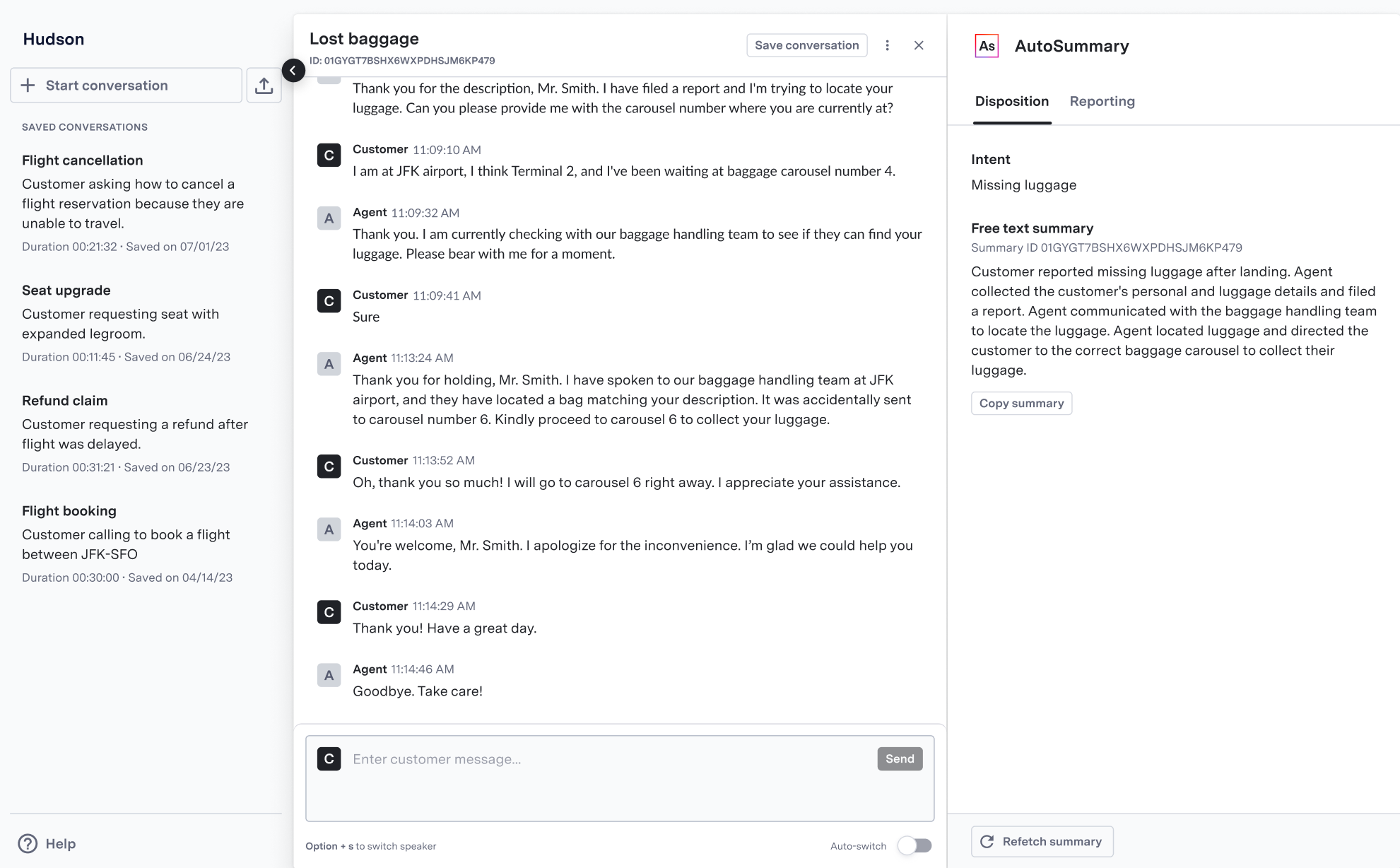

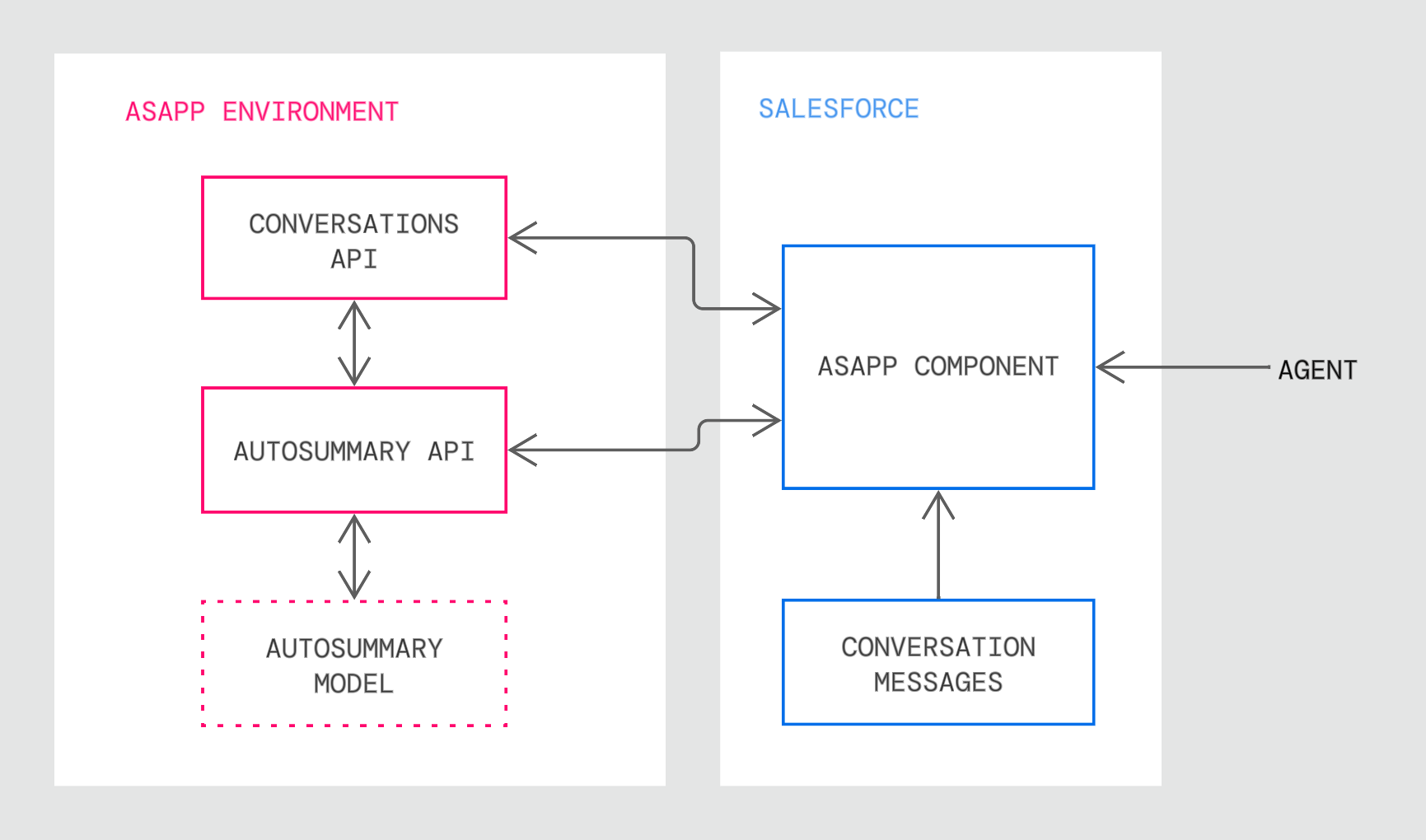

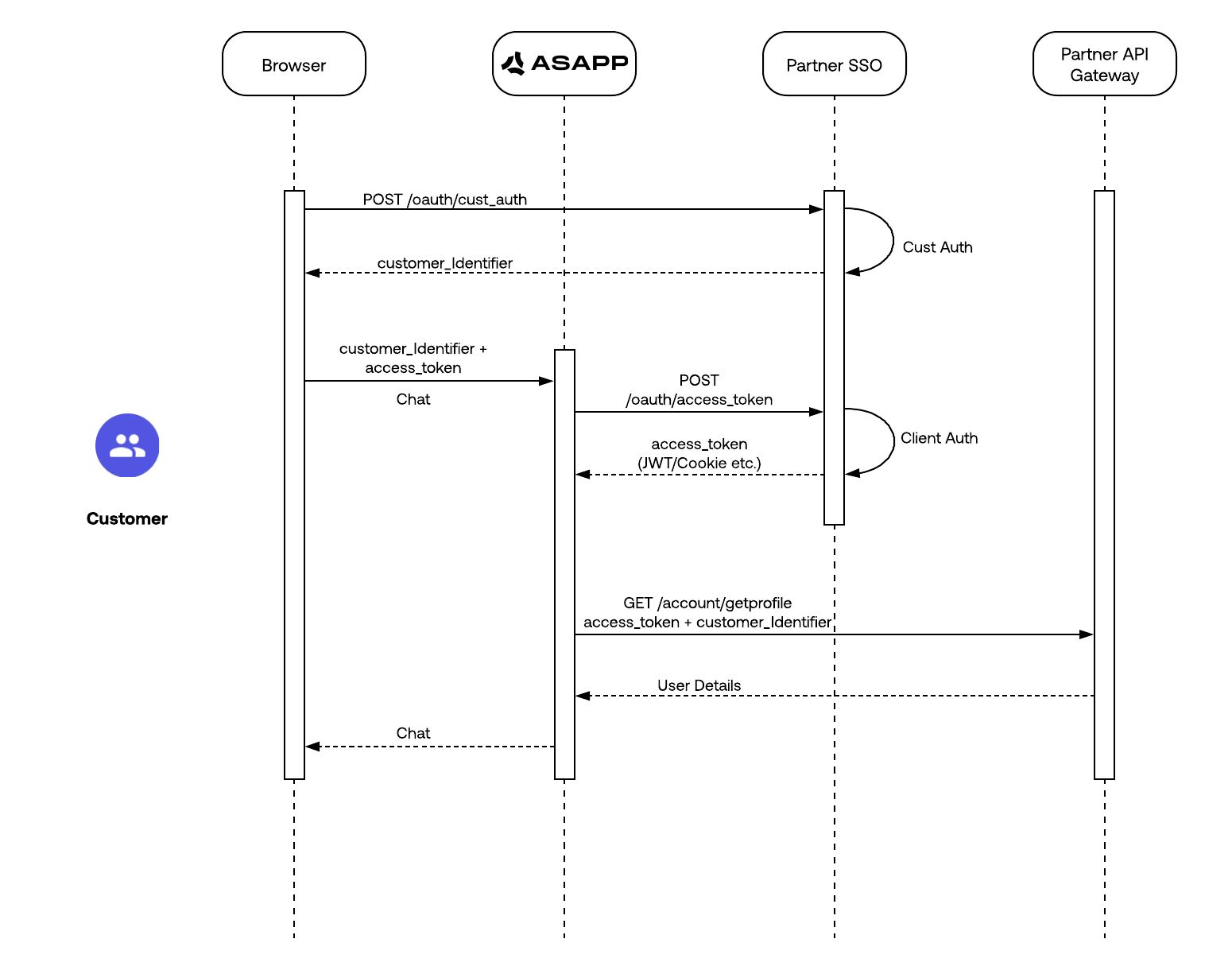

AutoSummary provides a set of APIs to enable you to extract insights from the wealth of data generated when your agents talk to your customers.

AutoSummary insights are powered by ASAPP's Generative AI (LLMs). Organizations use these insights to identify custom data, intents, topics, entities, sentiment drivers, and other structured data from every voice or chat (message) interaction between a customer and an agent.

AutoSummary can be customized to your specific use cases, such as workflow optimization, trade confirmations, compliance monitoring, and quality assurance.

## Insights and Data

With AutoSummary, you can extract the following information:

| Insight | Description | This enables you to |
| :--- | :--- | :--- |
| [Free text summary](/autosummary/free-text-summary) | Generates a concise text summary of each conversation | - Reduces average handle time by eliminating post-call summarization.<br />- Improves customer experience by allowing agents to focus on customers. |
| Intent | | - Optimizes operations by analyzing contact reasons.<br />- Improves customer experience through better conversation routing. |
| Structured data | - Question: Answers to predefined queries (e.g., "Was the customer issue resolved?", "Did the agent follow the script?")<br />- Entities: Key information said in the conversation, such as claim numbers, account details, approval dates, and monetary amounts. | - Automates data collection for analytics and reporting.<br />- Facilitates compliance monitoring and quality assurance.<br />- Enables rapid population of CRMs and other business tools.<br />- Supports data-driven decision making and process improvement. |

- Structured Data extraction

- Intent identification

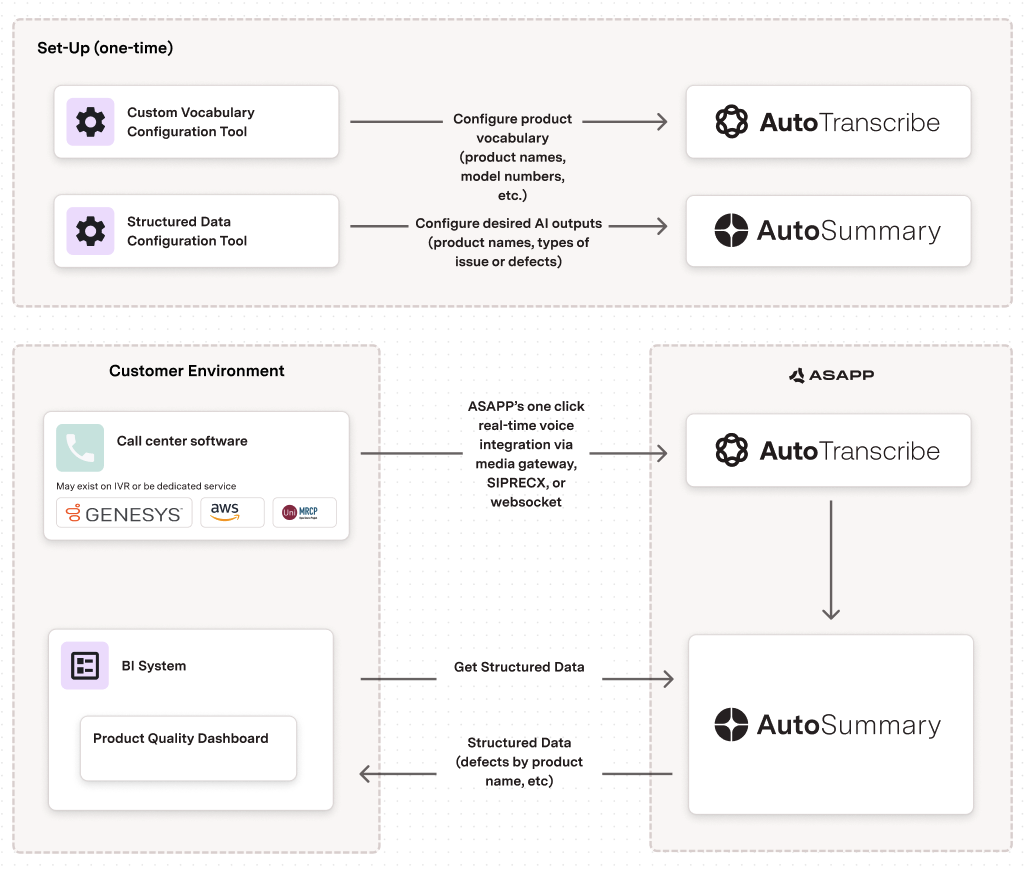

## Real-time Product Quality Monitoring (Retail, Telecommunications)

AutoSummary can generate free-text summaries of customer complaints about product quality, allowing defects and issues to be identified in real time. Extracted data can include the specific products involved and the complaint or issue type.

| Industry | Category | AutoSummary Features |
| :------------------------- | :---------------- | :------------------- |
| Retail, Telecommunications | Quality Assurance | Entity Extraction |

### Implementation

1. Configure Entity Extraction to identify product names and specific defect or issue descriptions.

2. Integrate with call center software for real-time processing.

3. Connect outputs to business intelligence systems for analysis and reporting.
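Step 3 can be sketched as a small aggregation that a reporting job might run over extracted entities. The record shape below (`conversation_id`, `product`, `issue`) is hypothetical and is not the AutoSummary API response format; it only shows how product and issue entities could be counted before being sent to a business intelligence system.

```python
from collections import Counter


def count_issues(extractions: list) -> Counter:
    """Count (product, issue) pairs, skipping records missing either entity."""
    counts = Counter()
    for record in extractions:
        product = record.get("product")
        issue = record.get("issue")
        if product and issue:
            counts[(product, issue)] += 1
    return counts


# Hypothetical extracted entities, one record per conversation.
sample = [
    {"conversation_id": "c1", "product": "Router X", "issue": "overheating"},
    {"conversation_id": "c2", "product": "Router X", "issue": "overheating"},
    {"conversation_id": "c3", "product": "Phone Y", "issue": "cracked screen"},
    {"conversation_id": "c4", "product": "Phone Y"},  # no issue extracted
]

issue_counts = count_issues(sample)
top_issue = issue_counts.most_common(1)
```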

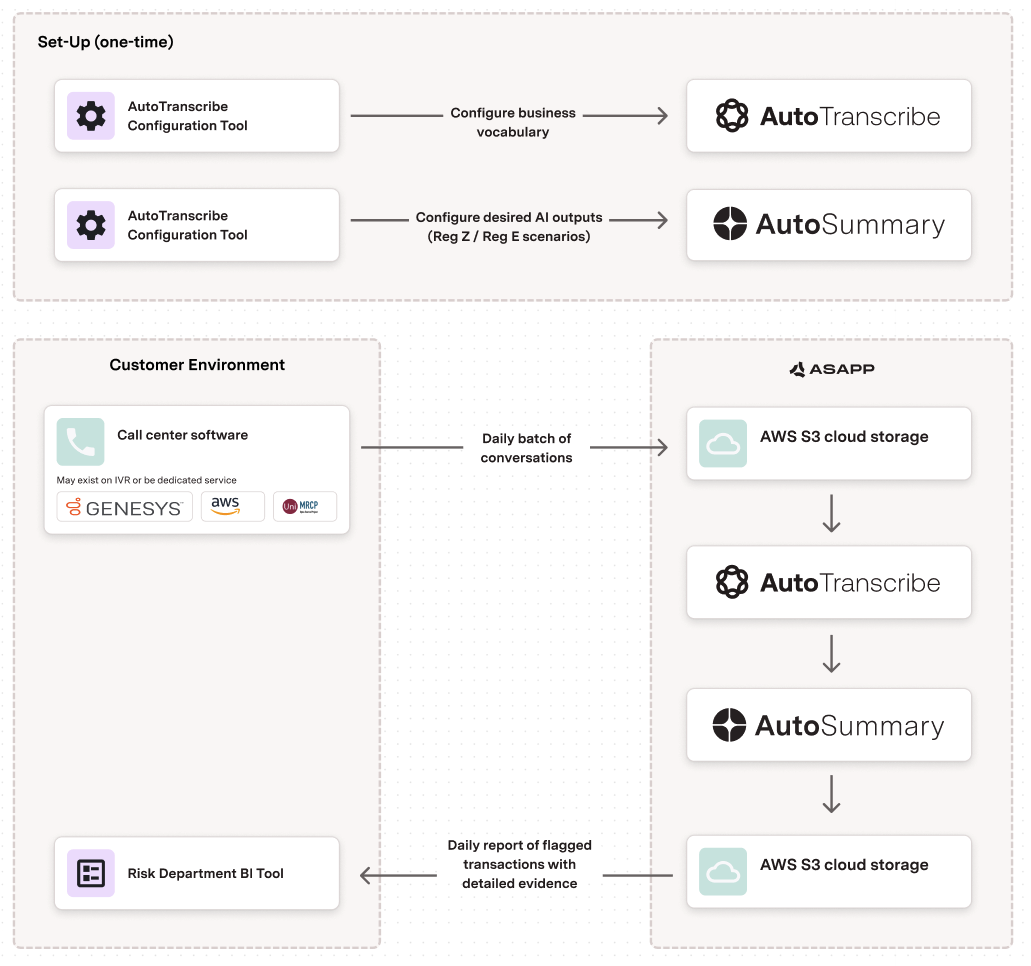

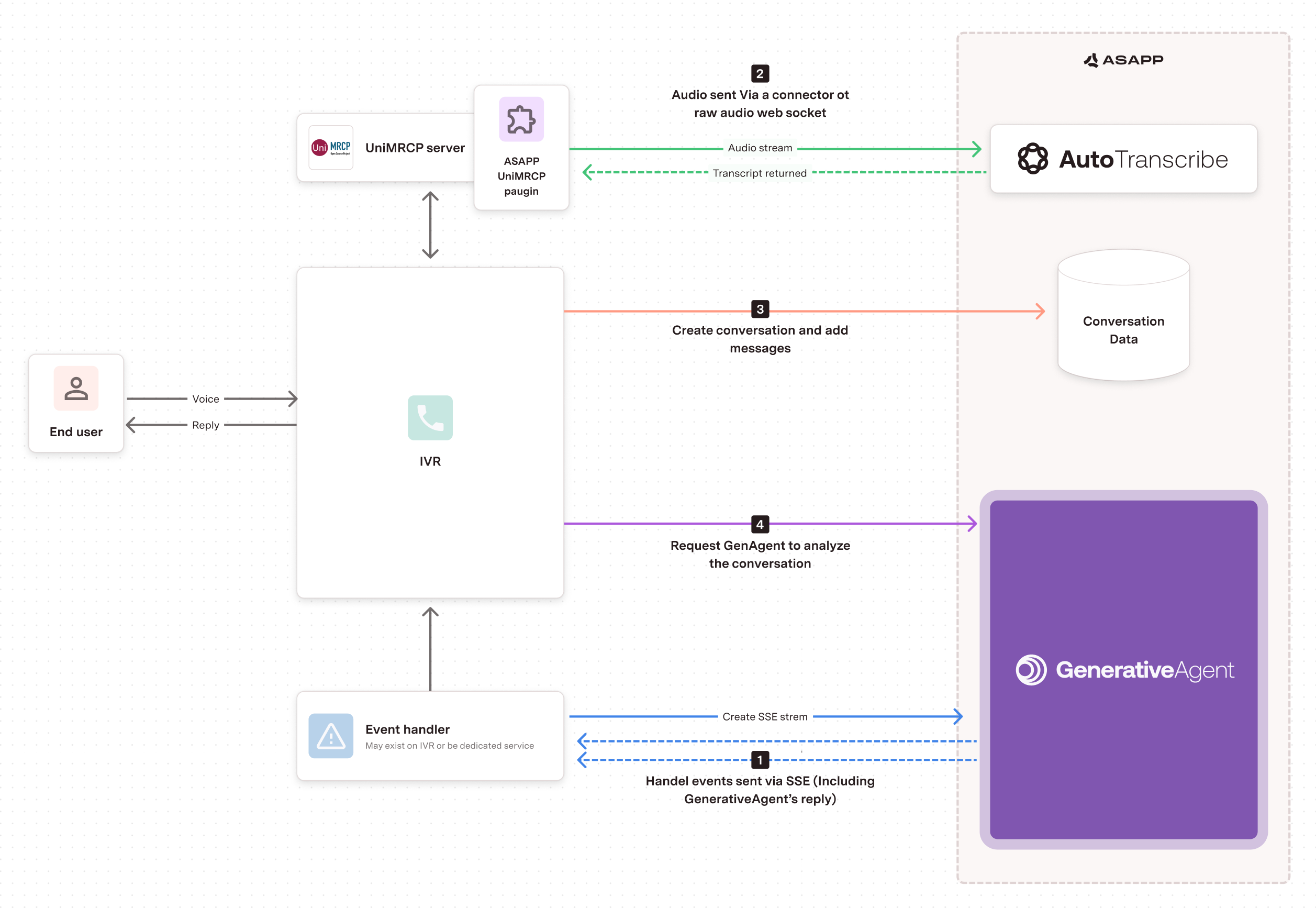

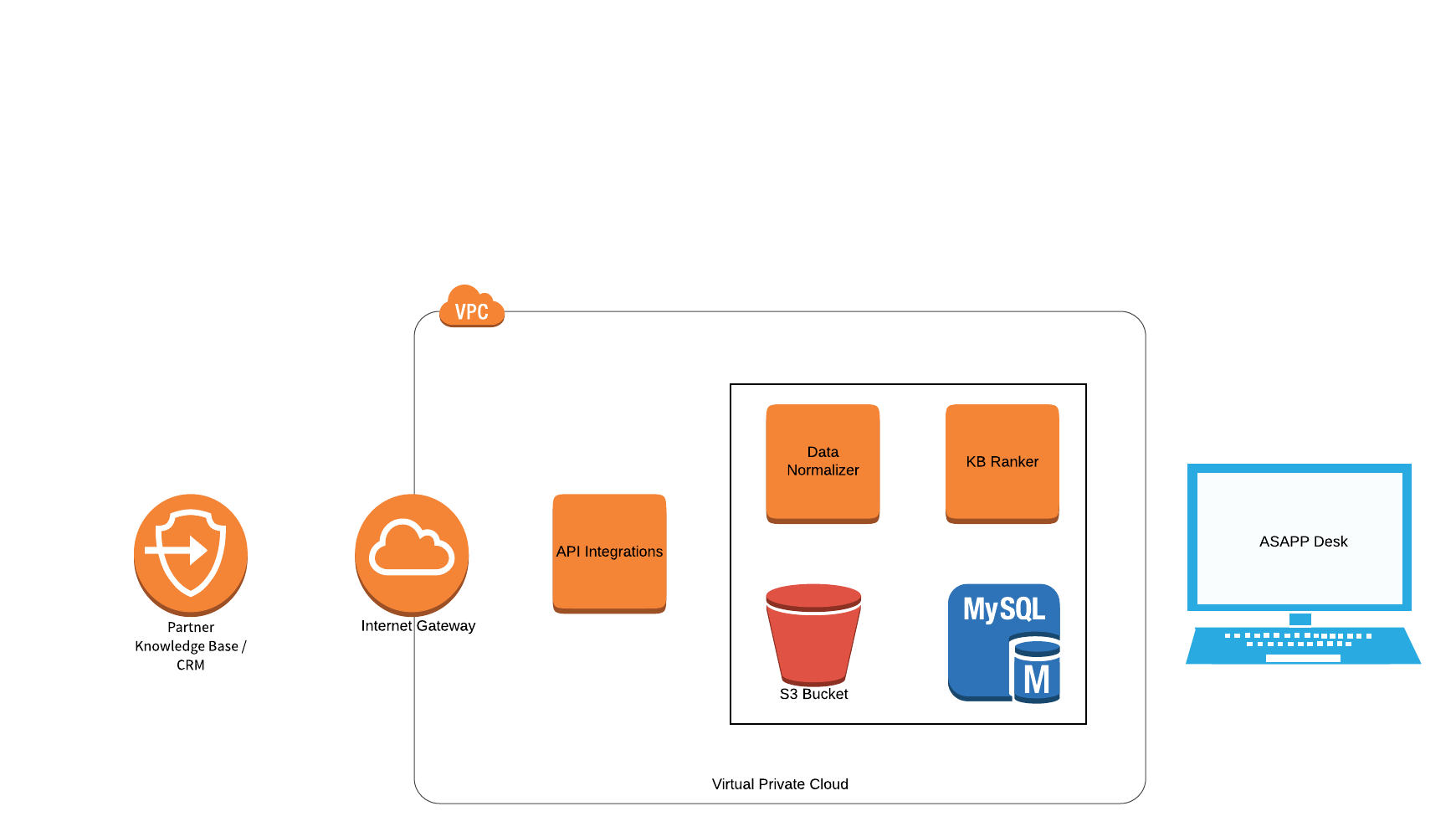

### Architecture

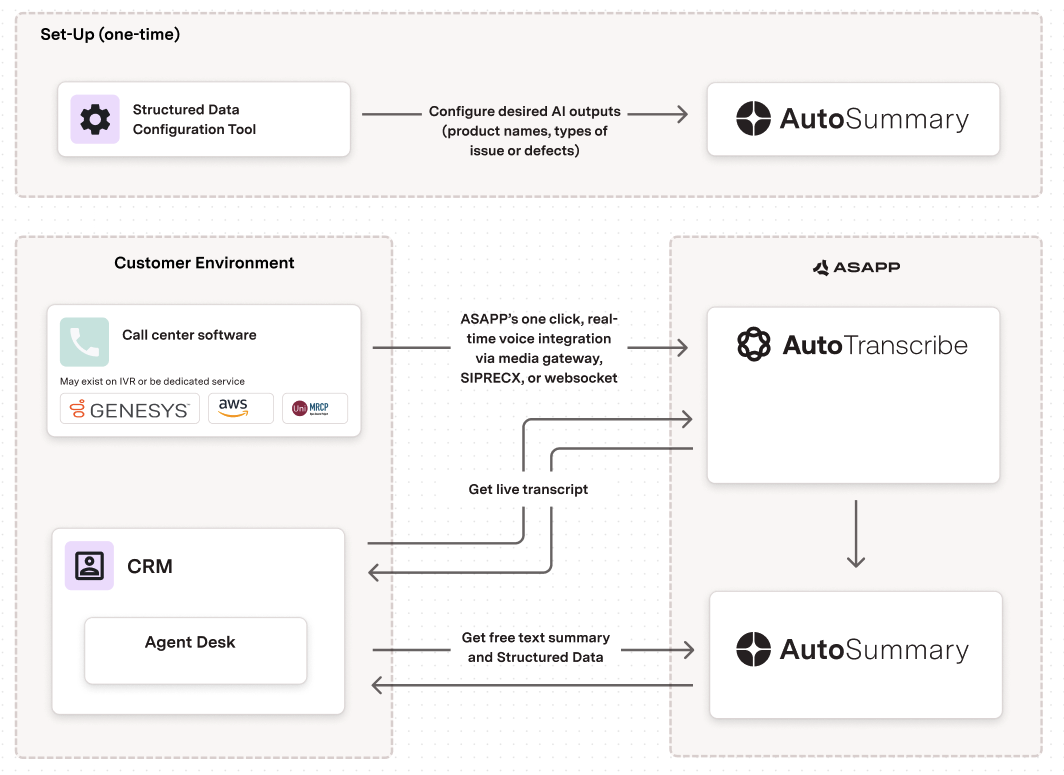

## Automated Call Wrap-up (Multiple Industries)

AutoSummary can automate the process of summarizing customer interactions, eliminating the need for manual note-taking by agents and providing consistent, high-quality call summaries.

The summary and specific data elements can be directly inserted into your contact center or CRM tool to remove manual steps.

| Industry | Category | AutoSummary Features |

| :------------------------------------------------------------------------------------------------------------- | :------------------------------------------------------------------ | :-------------------------------------------------------------------------------------------------------------------- |

| - Retail

- Telco

- Insurance Travel

- Financial Services

- \*Any\*

- Call Center Operations

- Quality Assurance

- Free Text Summary generation